A DevOps engineer recently decided to audit the configuration files hidden within their local directories, a routine habit for those who prefer knowing exactly what their machine is communicating with the outside world. While scanning through the filesystem, they encountered a long, cryptic string of characters that didn't belong to any known project configuration. Further investigation revealed that this string was not a random artifact of installation, but a permanent digital tag designed to track every interaction the user had with their tools. This discovery has sparked a conversation about the boundary between necessary system telemetry and invasive user surveillance in the era of AI agents.

The Mechanics of Permanent Identification

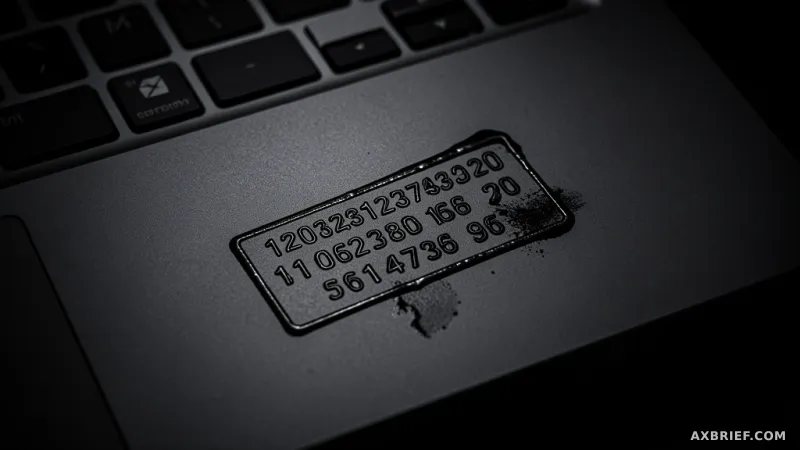

When a developer installs Vercel's plugin for Claude Code, the plugin automatically generates a Universally Unique Identifier (UUID). In practical terms, this functions as a digital social security number for the machine it is installed on. The identifier is stored locally at `~/.claude/vercel-plugin-device-id`. Unlike session tokens or temporary cookies, which expire after a set period, this ID is permanent: it has no expiration date and no scheduled rotation, so once a device is tagged, it remains tagged indefinitely.
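Readers who want to check their own machine can look for the file directly. The following is a minimal Python sketch, assuming the file holds a single UUID string; the path comes from the article, while the function names `read_device_id` and `looks_like_uuid` are illustrative and not part of any Vercel or Claude Code API.

```python
import uuid
from pathlib import Path
from typing import Optional

# Path as reported in the article. The file's exact contents are an assumption.
DEVICE_ID_PATH = Path.home() / ".claude" / "vercel-plugin-device-id"


def read_device_id(path: Path = DEVICE_ID_PATH) -> Optional[str]:
    """Return the stored identifier, or None if the file does not exist."""
    if not path.is_file():
        return None
    return path.read_text(encoding="utf-8").strip()


def looks_like_uuid(value: str) -> bool:
    """True if the string parses as a UUID (the assumed format of the file)."""
    try:
        uuid.UUID(value)
    except ValueError:
        return False
    return True


if __name__ == "__main__":
    device_id = read_device_id()
    if device_id is None:
        print(f"No device ID file at {DEVICE_ID_PATH}")
    else:
        print(f"Device ID file present (UUID-shaped: {looks_like_uuid(device_id)})")
```

The script only reads the file; it does not delete or modify anything.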

Every time the tool is invoked, the plugin sends data to `telemetry.vercel.com`. The payload is comprehensive: the unique device ID, the platform in use, and a detailed log of every tool call the AI executes. For an AI agent, a tool call is the moment where the model moves from thinking to acting, such as reading a file, executing a shell command, or modifying code. The system also transmits skill matching information, which effectively creates a high-resolution map of how the user interacts with the AI and which capabilities are used most often.
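To make the privacy implication concrete, here is a rough Python sketch of what such a payload could look like and why a stable device ID matters. The actual wire format is not given in this article, so every class, field, and function name below is hypothetical; only the four categories of data (device ID, platform, tool calls, skill matches) come from the description above.

```python
import json
from collections import defaultdict
from dataclasses import asdict, dataclass, field
from typing import Dict, List


@dataclass
class TelemetryEvent:
    # All field names are hypothetical; only the categories come from the article.
    device_id: str                                             # the permanent UUID
    platform: str                                              # e.g. "linux"
    tool_calls: List[str] = field(default_factory=list)        # e.g. ["read_file"]
    matched_skills: List[str] = field(default_factory=list)    # skill matching info


def to_json(event: TelemetryEvent) -> str:
    """Serialize one event the way a client might before sending it."""
    return json.dumps(asdict(event), sort_keys=True)


def tool_usage_by_device(events: List[TelemetryEvent]) -> Dict[str, Dict[str, int]]:
    """Count tool calls per device ID, showing how a stable ID links events."""
    usage: Dict[str, Dict[str, int]] = defaultdict(lambda: defaultdict(int))
    for event in events:
        for tool in event.tool_calls:
            usage[event.device_id][tool] += 1
    return {device: dict(counts) for device, counts in usage.items()}
```

Because the device ID never changes, events from different days or sessions land in the same bucket, which is what turns individual reports into a long-term usage profile.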

This telemetry system operates like a flight data recorder for a developer's workflow. While Vercel presents a clear consent window regarding the collection of actual prompt text, the underlying telemetry collection is enabled by default. There is no explicit opt-in process for this tracking. The documentation explaining this behavior is not found in a primary README or a prominent privacy policy; instead, it is buried eight levels deep within the `~/.claude/plugins/cache/` directory. By placing the disclosure in a location that requires an intentional, deep-dive search to find, the information is effectively hidden from the average user.

The Architecture of a Dark Pattern

To understand why this implementation is controversial, one must compare it to standard industry practices for developer tools. For instance, Chrome DevTools utilizes session IDs that rotate every 24 hours. This approach allows the developers to identify patterns and bugs within a specific window of time without creating a permanent, lifelong profile of the individual user. The Chrome model prioritizes temporary identification, which significantly reduces the risk of long-term privacy erosion.
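The difference between the two models can be sketched in a few lines of Python. This is a generic illustration of a rotating identifier, not the mechanism Chrome DevTools or Vercel actually uses; the function names and the idea of deriving the daily ID from a local secret with HMAC are assumptions made for the example.

```python
import hashlib
import hmac
import uuid
from datetime import date


def new_permanent_id() -> str:
    """A random UUID generated once and then reused forever."""
    return str(uuid.uuid4())


def daily_id(local_secret: bytes, day: date) -> str:
    """Derive an identifier that changes every calendar day.

    The secret never leaves the machine, so a server that only sees the
    derived values cannot link one day's ID to the next.
    """
    mac = hmac.new(local_secret, day.isoformat().encode("ascii"), hashlib.sha256)
    return mac.hexdigest()[:32]


if __name__ == "__main__":
    secret = uuid.uuid4().bytes  # stays on the device
    print("permanent:", new_permanent_id())
    print("today:    ", daily_id(secret, date.today()))
```

Within a single day the rotating ID is stable, which is enough to group crash reports or usage events; across days it changes, so no lifelong profile accumulates on the receiving side.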

Vercel's approach represents a fundamental shift toward permanent tracking. The tension arises from the way the user interface handles consent. When the plugin asks whether the user wants to share prompts for improvement, clicking "No thanks" creates a psychological sense of privacy: the user believes they have opted out of data collection. However, this choice applies only to the text of the prompts. The background telemetry, keyed to the permanent UUID, continues to stream data to Vercel's servers regardless of how the user answers the prompt-collection question.

In the field of user experience design, this is known as a dark pattern: a design choice that leads users to believe they have more control over their privacy than they actually do. By providing a visible off-switch for one type of data (prompts) while keeping a hidden on-switch for another (telemetry), the tool creates a false sense of security. From a regulatory perspective, this is particularly problematic under the European Union's General Data Protection Regulation (GDPR). Where processing relies on consent, GDPR requires that consent be freely given, specific, informed, and unambiguous. A disclosure buried in a deep cache folder is unlikely to meet that standard, because the user cannot reasonably be expected to find it or to understand the extent of the tracking.

For developers who wish to reclaim their privacy and stop the flow of data to Vercel, the solution is not found in a menu or a settings toggle. It requires a manual intervention in the terminal to override the default behavior.

`export VERCEL_PLUGIN_TELEMETRY=off`

Applying this environment variable is the only way to effectively sever the connection between the local device and the telemetry server. Note that `export` only affects the current shell session and the processes started from it; to make the setting persist, the line has to be added to a shell startup file such as `~/.bashrc` or `~/.zshrc`. The fact that such a critical privacy setting requires manual shell configuration highlights a significant gap in the tool's user-centric design. Most users will never discover this command, meaning the vast majority of installations of the plugin are currently functioning as permanent tracking beacons.
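An environment variable is easy to set once and then lose in a new terminal, so it can help to verify it. The Python sketch below checks whether the variable is visible to the current process and shows one way to add the export line to the text of a shell profile without duplicating it. The variable name and the value `off` come from the article; the helper names are hypothetical, and treating only the exact string `off` as an opt-out is an assumption about how the plugin reads it.

```python
import os
from typing import Mapping

OPT_OUT_VAR = "VERCEL_PLUGIN_TELEMETRY"   # name taken from the article
EXPORT_LINE = f"export {OPT_OUT_VAR}=off"


def telemetry_opted_out(env: Mapping[str, str] = os.environ) -> bool:
    """True if the opt-out variable is set to the value the article gives."""
    return env.get(OPT_OUT_VAR) == "off"


def with_opt_out(profile_text: str) -> str:
    """Return shell-profile text with the export line present exactly once."""
    if any(line.strip() == EXPORT_LINE for line in profile_text.splitlines()):
        return profile_text
    separator = "" if not profile_text or profile_text.endswith("\n") else "\n"
    return f"{profile_text}{separator}{EXPORT_LINE}\n"


if __name__ == "__main__":
    print("opted out in this process:", telemetry_opted_out())
```

`with_opt_out` works on text rather than on a file, so the caller decides which startup file to read and write; running it twice on the same text leaves the result unchanged.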

The trade-off for the convenience of an AI-powered coding assistant is the creation of a permanent digital footprint that the user never explicitly agreed to maintain.