For years, the primary friction in AI-assisted development has been the copy-paste cycle. Developers identify a bug, copy three relevant files into a chat window, and hope the model understands the implicit dependencies of the rest of the codebase. When the conversation grows too long, the model inevitably loses the thread, forcing the developer to re-explain the architecture or start a fresh session. This fragmented workflow has kept local LLMs as helpful assistants rather than autonomous collaborators. This week, the release of Qwen3.6-27B signals a shift toward repository-level reasoning, moving the needle from simple code generation to genuine agentic operation on local hardware.

The Architecture of High-Speed Local Inference

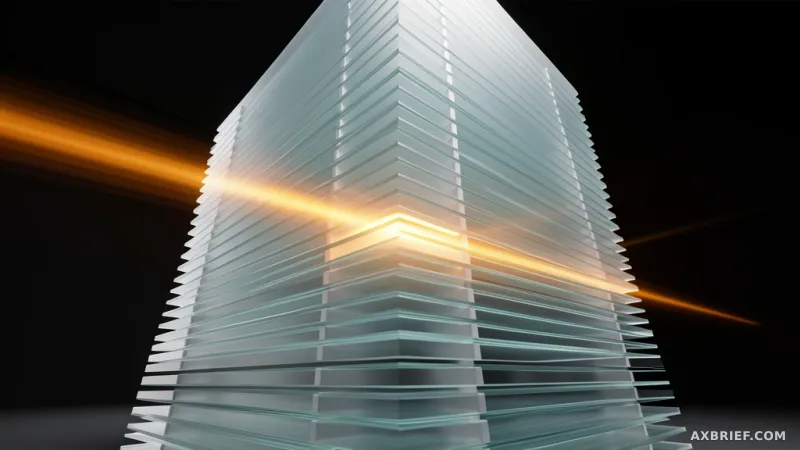

Qwen3.6-27B is a causal language model featuring 27 billion parameters, designed to balance the raw power of massive models with the accessibility of consumer-grade GPUs. The model is built with 64 layers and a hidden dimension of 5120, integrating a Vision Encoder to handle multimodal inputs. To optimize computational efficiency, the architecture employs a hybrid approach, mixing Gated DeltaNet—a form of linear attention—with Gated Attention. This combination allows the model to maintain high performance while reducing the memory overhead typically associated with long-sequence processing.
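To see why mixing linear attention with standard attention lowers memory use on long sequences, a rough back-of-the-envelope comparison helps. The sketch below assumes a standard key/value cache that grows with sequence length and a linear-attention state that stays a fixed size. Only the 64-layer count and the 262,144-token window come from the text; the split between layer types, the head counts, and the head dimension are hypothetical placeholders, not published Qwen3.6-27B values.

```python
def kv_cache_bytes(attn_layers, kv_heads, head_dim, seq_len, bytes_per_value=2):
    """Softmax-attention cache: one key and one value vector per token, head, and layer."""
    return 2 * attn_layers * kv_heads * head_dim * seq_len * bytes_per_value


def linear_state_bytes(linear_layers, heads, key_dim, value_dim, bytes_per_value=2):
    """Linear-attention state: one key_dim x value_dim matrix per head and layer.

    The size does not depend on how many tokens have been processed.
    """
    return linear_layers * heads * key_dim * value_dim * bytes_per_value


TOTAL_LAYERS = 64            # from the article
SEQ_LEN = 262_144            # native context window from the article

# Everything below is an ASSUMPTION for illustration only.
ASSUMED_ATTN_LAYERS = 16     # layers assumed to use full (gated) attention
ASSUMED_KV_HEADS = 4
ASSUMED_LINEAR_HEADS = 16
ASSUMED_HEAD_DIM = 128

all_attention = kv_cache_bytes(TOTAL_LAYERS, ASSUMED_KV_HEADS, ASSUMED_HEAD_DIM, SEQ_LEN)
hybrid = kv_cache_bytes(
    ASSUMED_ATTN_LAYERS, ASSUMED_KV_HEADS, ASSUMED_HEAD_DIM, SEQ_LEN
) + linear_state_bytes(
    TOTAL_LAYERS - ASSUMED_ATTN_LAYERS, ASSUMED_LINEAR_HEADS, ASSUMED_HEAD_DIM, ASSUMED_HEAD_DIM
)

print(f"all-attention cache: {all_attention / 2**30:.1f} GiB")
print(f"hybrid cache + state: {hybrid / 2**30:.1f} GiB")
```

The absolute numbers depend entirely on the assumed configuration. The point is structural: only the full-attention layers pay a per-token cost, so the fewer of them there are, the slower memory grows with context length.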

The most immediate technical advantage is the context window. Qwen3.6-27B natively handles 262,144 tokens (256K), enough to take in many small and mid-sized source code repositories in a single pass. For specialized use cases, this limit can be extended up to 1,010,000 tokens through configuration. The more practical gain, however, comes from Multi-Token Prediction (MTP) used for speculative decoding. A conventional decoder emits one token per forward pass; with MTP, the model drafts several tokens ahead and then verifies them together, keeping only the drafts that match what it would have generated anyway. This raises inference speed by a reported 1.5x to 2x without changing the output.
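The draft-then-verify idea behind speculative decoding can be sketched in a few lines. This is a toy illustration under stated assumptions, not the llama.cpp implementation: `toy_target` and `toy_draft` are hypothetical stand-ins for the full model and its cheap multi-token predictor, and the verification step calls the target once per position, whereas a real engine checks all drafted positions in a single batched forward pass.

```python
from typing import Callable, List, Tuple

NextToken = Callable[[List[int]], int]


def greedy_decode(target: NextToken, prompt: List[int], n_new: int) -> List[int]:
    """Baseline: one target-model step per generated token."""
    seq = list(prompt)
    for _ in range(n_new):
        seq.append(target(seq))
    return seq[len(prompt):]


def speculative_decode(
    target: NextToken, draft: NextToken, prompt: List[int], n_new: int, draft_n_max: int = 2
) -> Tuple[List[int], int]:
    """Draft up to draft_n_max tokens per round, keep the prefix the target agrees with.

    Returns the generated tokens and the number of verification rounds used.
    """
    seq = list(prompt)
    rounds = 0
    while len(seq) - len(prompt) < n_new:
        rounds += 1
        # 1. Cheap proposal of the next few tokens.
        proposal: List[int] = []
        for _ in range(draft_n_max):
            proposal.append(draft(seq + proposal))
        # 2. Verification: the target's own choice is always what gets kept.
        for tok in proposal:
            expected = target(seq)
            seq.append(expected)
            if tok != expected:
                break  # first mismatch: the remaining drafts are discarded
        else:
            # All drafts accepted, so the verification pass also yields a bonus token.
            seq.append(target(seq))
    return seq[len(prompt):len(prompt) + n_new], rounds


# Hypothetical toy models: the draft agrees with the target most of the time.
def toy_target(seq: List[int]) -> int:
    return (seq[-1] * 3 + 1) % 7


def toy_draft(seq: List[int]) -> int:
    guess = toy_target(seq)
    return guess if len(seq) % 4 else (guess + 1) % 7  # deliberately wrong now and then
```

Because every kept token is the target's own choice, the output is identical to plain greedy decoding; the saving comes from needing fewer verification rounds than tokens. The `draft_n_max` parameter here loosely mirrors the role of the `--spec-draft-n-max` flag in the server command later in this article.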

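To deploy this capability in a local environment, developers must build llama.cpp from the mtp-clean branch of a fork rather than from the main repository. The following steps install the build dependencies, compile with CUDA support, and perform a clean build so that the MTP features are enabled: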

    apt-get install pciutils build-essential cmake curl libcurl4-openssl-dev -y
    git clone -b mtp-clean https://github.com/am17an/llama.cpp.git
    cmake llama.cpp -B llama.cpp/build -DBUILD_SHARED_LIBS=OFF -DGGML_CUDA=ON
    cmake --build llama.cpp/build --config Release -j --clean-first --target llama-cli llama-server
    cp llama.cpp/build/bin/llama-* llama.cpp

Once the environment is prepared, the MTP functionality is activated during server execution using the following command:

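    export LLAMA_CACHE="unsloth/Qwen3.6-27B-MTP-GGUF"
    ./llama.cpp/llama-server \
        -hf unsloth/Qwen3.6-27B-MTP-GGUF:UD-Q4_K_XL \
        -ngl 99 -c 8192 -fa on -np 1 \
        --spec-type mtp --spec-draft-n-max 2

Note that -c 8192 caps the context at 8,192 tokens; repository-scale prompts need a larger value and correspondingly more memory. The quantized build used here is distributed in GGUF, the format llama.cpp loads natively. The model is also reported to run on other high-performance inference engines, including vLLM, SGLang, and KTransformers.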
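Once the server is running, it can be queried over HTTP. The sketch below assumes llama-server is listening on its default address (127.0.0.1:8080) and uses its OpenAI-compatible chat endpoint; the helper names `build_chat_request` and `ask`, the sampling values, and the timeout are illustrative choices, not part of any official client.

```python
import json
import urllib.request

# Assumption: llama-server was started without --host/--port overrides.
BASE_URL = "http://127.0.0.1:8080"


def build_chat_request(
    prompt: str, base_url: str = BASE_URL, max_tokens: int = 256
) -> urllib.request.Request:
    """Build a POST request for the OpenAI-compatible chat completions endpoint."""
    payload = {
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": max_tokens,
        "temperature": 0.2,
    }
    return urllib.request.Request(
        f"{base_url}/v1/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask(prompt: str) -> str:
    """Send the prompt to the local server and return the assistant's reply text."""
    with urllib.request.urlopen(build_chat_request(prompt), timeout=300) as resp:
        body = json.load(resp)
    return body["choices"][0]["message"]["content"]
```

Calling `ask("Explain what this repository's build script does.")` returns the reply as a string. Speculative decoding happens inside the server, so the client code is the same whether MTP is enabled or not.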

From Code Generation to Repository Agents

The transition from a 32k context window to 262k is not merely a quantitative upgrade; it is a qualitative shift in how AI interacts with software. In frontend development, for example, a single feature change often ripples across multiple components, state management stores, and CSS modules. By processing the entire repository, Qwen3.6-27B can map these complex dependency chains accurately, identifying the exact scope of impact for a proposed change without requiring the developer to manually feed it every related file.
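Whether a given repository actually fits in the window is easy to estimate before sending anything to the model. The following is a minimal sketch: the four-characters-per-token ratio is a rough heuristic rather than the model's real tokenizer, and the extension list, skipped directories, and output reserve are hypothetical defaults to adjust per project.

```python
import os

CONTEXT_TOKENS = 262_144        # native window quoted in the article
ASSUMED_CHARS_PER_TOKEN = 4     # rough heuristic, NOT the real tokenizer
SOURCE_EXTENSIONS = {".py", ".js", ".ts", ".tsx", ".css", ".json", ".md"}  # hypothetical
SKIPPED_DIRS = {".git", "node_modules", "dist", "build"}                   # hypothetical


def estimate_repo_tokens(root: str) -> int:
    """Estimate the token count of all source files under root."""
    total_chars = 0
    for dirpath, dirnames, filenames in os.walk(root):
        # Prune in place so os.walk does not descend into vendored or generated code.
        dirnames[:] = [d for d in dirnames if d not in SKIPPED_DIRS]
        for name in filenames:
            if os.path.splitext(name)[1] in SOURCE_EXTENSIONS:
                path = os.path.join(dirpath, name)
                with open(path, encoding="utf-8", errors="ignore") as fh:
                    total_chars += len(fh.read())
    return total_chars // ASSUMED_CHARS_PER_TOKEN


def fits_in_context(root: str, reserve_for_output: int = 8_192) -> bool:
    """True if the estimated prompt leaves room for the reserved output budget."""
    return estimate_repo_tokens(root) <= CONTEXT_TOKENS - reserve_for_output
```

Running `fits_in_context(".")` from a project root gives a quick yes or no. A repository that does not fit still needs some form of file selection, so the larger window reduces manual context feeding rather than eliminating it.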

Beyond the window size, the model addresses the chronic issue of context drift. Most LLMs suffer from a degradation of reasoning as the conversation length increases, often forgetting the logic established in the initial prompts. Qwen3.6-27B introduces a mechanism to preserve the reasoning context of previous messages. This allows for an iterative refinement process where the AI and developer can polish code over dozens of turns without the model losing sight of the original architectural constraints.

This capability is further augmented by improved tool-calling precision. The model demonstrates a significantly higher success rate in parsing nested object structures, which is critical for interacting with complex APIs and external development tools. When a model can reliably handle nested JSON and maintain a global view of the project, it ceases to be a text generator and becomes an agent capable of manipulating the development environment.
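To make "nested object structures" concrete, here is a sketch of the kind of check an agent harness might run on a model's tool call before executing it. The `apply_patch` tool, its schema, and the small validator are all hypothetical and written for illustration; the schema follows the common OpenAI-style function-calling layout, and the validator covers only the handful of JSON Schema keywords used here.

```python
import json

# Hypothetical tool definition with three levels of nesting: edits -> item -> range.
APPLY_PATCH_TOOL = {
    "type": "function",
    "function": {
        "name": "apply_patch",
        "parameters": {
            "type": "object",
            "required": ["file", "edits"],
            "properties": {
                "file": {"type": "string"},
                "edits": {
                    "type": "array",
                    "items": {
                        "type": "object",
                        "required": ["range", "text"],
                        "properties": {
                            "range": {
                                "type": "object",
                                "required": ["start", "end"],
                                "properties": {
                                    "start": {"type": "integer"},
                                    "end": {"type": "integer"},
                                },
                            },
                            "text": {"type": "string"},
                        },
                    },
                },
            },
        },
    },
}

_TYPES = {"object": dict, "array": list, "string": str, "integer": int}


def validate(value, schema, path="$"):
    """Return a list of problems; an empty list means the value matches the schema."""
    expected = _TYPES[schema["type"]]
    # bool is a subclass of int in Python, so reject it explicitly for integers.
    if not isinstance(value, expected) or (expected is int and isinstance(value, bool)):
        return [f"{path}: expected {schema['type']}"]
    problems = []
    if expected is dict:
        for key in schema.get("required", []):
            if key not in value:
                problems.append(f"{path}.{key}: missing")
        for key, sub in schema.get("properties", {}).items():
            if key in value:
                problems += validate(value[key], sub, f"{path}.{key}")
    elif expected is list:
        for i, item in enumerate(value):
            problems += validate(item, schema["items"], f"{path}[{i}]")
    return problems


def parse_tool_arguments(raw: str, tool: dict):
    """Parse a tool call's JSON argument string and check it against the tool schema."""
    try:
        args = json.loads(raw)
    except json.JSONDecodeError as exc:
        return None, [f"$: invalid JSON ({exc.msg})"]
    return args, validate(args, tool["function"]["parameters"])
```

A model that drops an inner field or emits a string where an integer belongs produces a non-empty problem list, which the harness can feed back as an error message instead of executing a malformed edit. The deeper the nesting, the more places such slips can occur, which is why parsing reliability matters for agentic use.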

The strategic value of the 27B parameter size becomes clear here. It is small enough to run on a single high-end local GPU, yet it is optimized to deliver performance that rivals models in the 70B+ parameter class. By combining this efficiency with MTP-driven speed, the model removes the latency barrier that previously made local agentic workflows feel sluggish compared to cloud-based alternatives.
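The single-GPU claim can be sanity-checked with simple arithmetic on the weights alone. In the sketch below, the 27 billion parameter count comes from the text, while the bits-per-weight figures are rough assumptions; in particular, 4.5 bits is a ballpark for 4-bit-class quantization, not a measured size for the UD-Q4_K_XL file.

```python
PARAMS = 27e9  # parameter count from the article


def weight_gib(bits_per_weight: float, params: float = PARAMS) -> float:
    """Approximate size of the model weights alone, in GiB."""
    return params * bits_per_weight / 8 / 2**30


# Bits-per-weight values are ASSUMED ballparks, not measured file sizes.
for label, bits in [("16-bit", 16.0), ("8-bit", 8.0), ("4-bit class (~4.5 bits)", 4.5)]:
    print(f"{label:>24}: {weight_gib(bits):5.1f} GiB")
```

Under these assumptions, 16-bit weights need roughly 50 GiB, while a 4-bit-class quantization needs roughly 14 GiB. The context cache and activations come on top of that, so the headroom left on a given card determines how much of the context window is usable in practice.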

Local hardware can now sustain the speed and memory requirements necessary to run a fully autonomous coding agent without sacrificing privacy or incurring API costs.