The legal industry has spent the last year in a state of cautious anxiety. While the promise of large language models suggested a world where document review took seconds instead of weeks, the reality was often a nightmare of hallucinated case law and generic advice that lacked the nuance of a specific jurisdiction. This week, the tension shifted. A sudden surge of activity across legal AI repositories on GitHub signals a move away from simple chat interfaces toward something more structural. At the center of this shift is Claude for Legal, a deployment that attempts to solve the reliability gap not through better prompting, but through a rigorous agentic infrastructure.

The Architecture of Managed Agents and Legal Connectors

Anthropic has introduced a suite of reference agents, specialized skills, and data connectors designed specifically for legal workflows. Deployment flexibility is a core component of the release. Users can integrate these tools via Claude Cowork, the collaborative interface, or Claude Code, the terminal-based developer tool. For enterprises requiring deeper integration, the Managed Agents API allows firms to deploy these agents directly into their own proprietary workflow engines. The barrier to entry is intentionally low: the `QUICKSTART.md` guide enables a full installation in 60 seconds, using Markdown and JSON configurations to bypass traditional, heavy build stages.

For the developers building these systems, the operational logic resides in the `agent.yaml` configuration file and the implementation of event loops via `scripts/orchestrate.py`. A significant addition is the `callable_agents` preview feature, which introduces a single-level delegation structure: one agent can hand off specific tasks to another specialized agent, but the hand-off goes only one level deep. This modularity is supported by MCP connectors, which are built on the Model Context Protocol, a standard for linking the model to external, high-fidelity data sources. The current ecosystem includes connectors for CourtListener, the open database for US court records, as well as Trellis, Descrybe, and Solve Intelligence for advanced legal research and document analysis.
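To make the single-level delegation idea concrete, here is a minimal Python sketch. It does not reproduce the real `agent.yaml` schema or the contents of `scripts/orchestrate.py`. The `Agent`, `Registry`, and `DelegationError` names are hypothetical, and the only detail taken from the release is the `callable_agents` field name and the one-level limit.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class Agent:
    """Hypothetical stand-in for an agent definition (not the real agent.yaml schema)."""
    name: str
    handler: Callable[[str], str]
    # Names of agents this agent is allowed to hand tasks to.
    callable_agents: List[str] = field(default_factory=list)


class DelegationError(Exception):
    """Raised when a hand-off is not permitted."""


class Registry:
    def __init__(self) -> None:
        self._agents: Dict[str, Agent] = {}

    def register(self, agent: Agent) -> None:
        self._agents[agent.name] = agent

    def validate(self) -> None:
        """Reject configurations in which a delegate could delegate further."""
        for agent in self._agents.values():
            for target_name in agent.callable_agents:
                target = self._agents.get(target_name)
                if target is None:
                    raise DelegationError(
                        f"{agent.name} references unknown agent {target_name}"
                    )
                if target.callable_agents:
                    raise DelegationError(
                        f"{target_name} is a delegate and may not delegate further"
                    )

    def delegate(self, caller: str, target: str, task: str) -> str:
        """Hand a task from caller to target if the caller lists the target."""
        if target not in self._agents[caller].callable_agents:
            raise DelegationError(f"{caller} is not allowed to call {target}")
        return self._agents[target].handler(task)


if __name__ == "__main__":
    registry = Registry()
    registry.register(
        Agent("intake", lambda t: f"intake:{t}", callable_agents=["research"])
    )
    registry.register(Agent("research", lambda t: f"research:{t}"))
    registry.validate()
    print(registry.delegate("intake", "research", "find precedent"))
```

Checking the configuration up front, rather than counting hops at run time, means a chain such as intake to research to a third agent is rejected before any task is dispatched.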

Beyond research, the system introduces a layer of scheduling agents to handle the administrative drudgery of legal practice. These include the regulatory feed monitor, renewal watcher, docket watcher, diligence grid, and launch radar. These agents operate behind a proprietary orchestrator and are deployed via the `/v1/agents` endpoint using POST requests, transforming the AI from a reactive chatbot into a proactive monitor of legal deadlines and regulatory shifts.
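As a rough illustration of what a deployment call might look like, the sketch below builds (but does not send) a POST request to the `/v1/agents` path using Python's standard library. Only the path and the HTTP method come from the release. The base URL, the `name` and `schedule` payload fields, and the `build_deploy_request` helper are assumptions made for illustration, and any authentication headers would need to come from the actual API documentation.

```python
import json
import urllib.request
from typing import Dict, Optional


def build_deploy_request(
    base_url: str,
    agent_name: str,
    schedule: str,
    extra_headers: Optional[Dict[str, str]] = None,
) -> urllib.request.Request:
    """Build a POST request for the /v1/agents path without sending it.

    The payload fields are hypothetical; consult the real API reference
    for the actual request schema and authentication headers.
    """
    payload = {"name": agent_name, "schedule": schedule}  # assumed fields
    headers = {"Content-Type": "application/json"}
    headers.update(extra_headers or {})
    return urllib.request.Request(
        url=f"{base_url.rstrip('/')}/v1/agents",
        data=json.dumps(payload).encode("utf-8"),
        headers=headers,
        method="POST",
    )


if __name__ == "__main__":
    # example.invalid is a placeholder host, not a real endpoint.
    request = build_deploy_request(
        "https://example.invalid", "docket-watcher", "daily"
    )
    print(request.get_method(), request.full_url)
```

Keeping request construction separate from sending makes it easy to inspect or log exactly what would be deployed before anything leaves the firm's network.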

From Generic Prompting to Practice-Specific Profiles

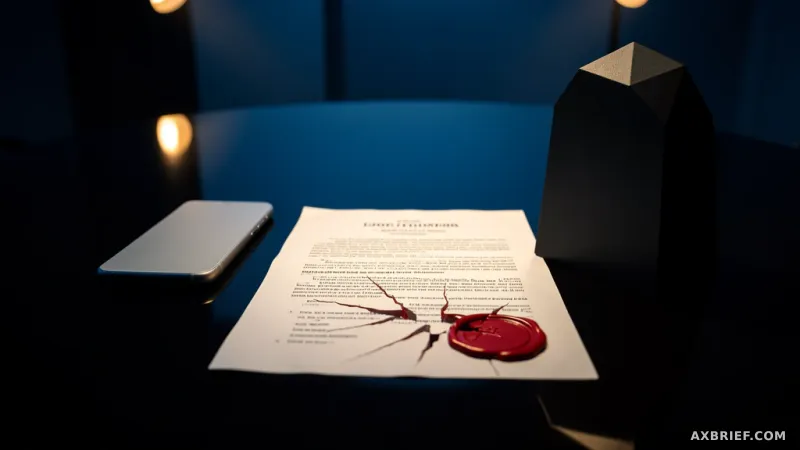

The fundamental difference between Claude for Legal and a standard LLM implementation lies in the rejection of the blank prompt. In a typical AI interaction, a lawyer asks a question and hopes the model's general training suffices. Claude for Legal replaces this with a mandatory cold-start interview. This 10-to-20-minute onboarding process requires the user to provide seed documents, such as signed Master Service Agreements (MSAs) or internal firm playbooks. The AI uses this data to construct a practice profile, aligning the model's responses with the specific stylistic and legal standards of a particular team instead of leaving them generic. Without this profiling phase, the system falls back to general outputs, and that fallback is exactly what separates a generic tool from a professional asset.

This shift extends to how the system handles the industry's greatest fear: the fake citation. Rather than relying on the model's internal weights to recall a case, the system employs a verification loop. Any citation generated without a linked research connector is automatically flagged with a `[verify]` tag, signaling to the lawyer that the information is unconfirmed. When a connector is active, the system cross-references the database in real time and attaches a verification tag. If a document is produced with no connector at all, a reviewer note is placed at the top, explicitly stating that the sources have not been verified.
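The verification loop described here can be sketched in a few lines of Python. This is an illustration under stated assumptions, not the product's implementation. The `[verify]` tag and the top-of-document reviewer note come from the description above, while the `[verified]` label, the wording of the note, the `lookup` callable standing in for a research connector, and the choice to fall back to `[verify]` when a lookup finds nothing are all hypothetical.

```python
from typing import Callable, List, Optional

# Hypothetical wording; the real reviewer note text is not specified.
REVIEWER_NOTE = "REVIEWER NOTE: the sources in this document have not been verified."

# Stand-in for a research connector: returns True if the citation is found.
Lookup = Callable[[str], bool]


def tag_citation(citation: str, lookup: Optional[Lookup]) -> str:
    """Append a tag reflecting whether the citation was confirmed."""
    if lookup is not None and lookup(citation):
        return f"{citation} [verified]"  # assumed label for a confirmed citation
    # No connector, or the connector could not confirm it (assumed behavior).
    return f"{citation} [verify]"


def render(body: str, citations: List[str], lookup: Optional[Lookup] = None) -> str:
    """Assemble a document, adding the reviewer note when no connector is linked."""
    parts: List[str] = []
    if lookup is None:
        parts.extend([REVIEWER_NOTE, ""])
    parts.extend([body, ""])
    parts.extend(tag_citation(c, lookup) for c in citations)
    return "\n".join(parts)


if __name__ == "__main__":
    # Without a connector, every citation is flagged and the note leads the document.
    print(render("Draft memo.", ["Smith v. Jones"]))
```

The point of the design is that an unverified citation can never look the same as a verified one: the default path always produces the flag, and only a successful lookup removes it.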

Governance is handled through the `legal-builder-hub`, a centralized repository for verifying the reliability of legal AI skills. Access is strictly controlled via an allowlist. Non-legal professionals attempting to install these skills are redirected to a supervising attorney rather than being given a download button. Furthermore, every community-contributed skill must undergo a design review process via `/legal-builder-hub:skills-qa`. This is not a simple software test but a quality assurance process where attorneys review the underlying logic and code to ensure legal accuracy.

This is no longer a race to see which AI can sound most like a lawyer. It is an infrastructure war to determine which system can most effectively accelerate a lawyer's review process while keeping the human professional as the sole point of legal accountability.