Kimi K2.6 is an open-source Mixture-of-Experts model with 1 trillion total parameters, built to orchestrate autonomous agent swarms for complex software engineering. For the past year, the developer community has lived in a cycle of prompt and response, treating AI as a sophisticated autocomplete tool that requires constant human steering. The workflow is fragmented: a developer asks for a function, copies the code, finds a bug, pastes the error back into the chat, and repeats the process until the feature works. This manual orchestration is the primary bottleneck in AI-assisted development, turning the AI into a talented but directionless intern rather than a lead engineer.

The Architecture of a Trillion Parameters

Kimi K2.6 addresses this bottleneck through a massive Mixture-of-Experts (MoE) framework. Although the total parameter count reaches 1 trillion, the model activates only 32 billion parameters, roughly 3.2% of the total, for any given token. This selective activation lets the model draw on the broad knowledge base of a trillion-parameter model while keeping per-token compute close to that of a much smaller dense model. The full set of weights must still be held in memory, so the saving is in computation rather than storage. The architecture consists of 61 layers, including one dense layer, designed to balance specialized knowledge with general reasoning.
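The arithmetic behind that trade-off can be checked in a few lines. This is only a back-of-the-envelope sketch: the two parameter counts come from the article, while the one-byte-per-parameter storage figure is an assumption chosen for illustration and not a published detail of the model.

```python
# Parameter counts quoted in the article.
TOTAL_PARAMS = 1_000_000_000_000   # 1 trillion total
ACTIVE_PARAMS = 32_000_000_000     # 32 billion active per token

# ASSUMPTION for illustration only: 1 byte per stored parameter (8-bit weights).
ASSUMED_BYTES_PER_PARAM = 1


def active_fraction(active: int, total: int) -> float:
    """Share of the model's parameters that take part in processing one token."""
    return active / total


def weight_storage_gb(total: int, bytes_per_param: int) -> float:
    """Storage needed to hold every weight, in decimal gigabytes."""
    return total * bytes_per_param / 1e9


fraction = active_fraction(ACTIVE_PARAMS, TOTAL_PARAMS)
storage_gb = weight_storage_gb(TOTAL_PARAMS, ASSUMED_BYTES_PER_PARAM)

print(f"active share per token: {fraction:.1%}")
print(f"weights to keep resident (assumed 8-bit): {storage_gb:,.0f} GB")
```

The point of the second figure is that sparsity reduces the work done per token, not the amount of hardware memory needed to host the model.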

To make very long inputs practical, the model implements Multi-head Latent Attention (MLA). MLA compresses keys and values into a compact latent representation, which shrinks the key-value cache that grows with every token of context and makes a 256K-token context window feasible. This capacity allows the model to ingest large codebases or documentation sets in a single context without losing the thread of the conversation. Visual processing is handled by MoonViT, a native multimodal visual encoder with 400 million parameters, which allows Kimi K2.6 to interpret images and UI layouts as primary data rather than secondary descriptions.
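To give a feel for why the cache size matters at this context length, the sketch below compares a conventional per-head key-value cache with a compressed latent cache. The layer count and context length come from the article (reading "256K" as 262,144 tokens). Every other dimension is a hypothetical value picked for illustration, not a published Kimi K2.6 setting.

```python
# From the article.
LAYERS = 61
CONTEXT_TOKENS = 256 * 1024  # "256K" read as 262,144 tokens (an interpretation)

# HYPOTHETICAL dimensions, chosen only to illustrate the scaling.
N_HEADS = 64          # attention heads in a conventional layout
HEAD_DIM = 128        # values per head
LATENT_DIM = 512      # compressed key/value latent per token
ROPE_DIM = 64         # extra positional component stored alongside the latent
BYTES_PER_VALUE = 2   # 16-bit cache entries


def kv_cache_bytes(values_per_token_per_layer: int,
                   layers: int = LAYERS,
                   tokens: int = CONTEXT_TOKENS,
                   bytes_per_value: int = BYTES_PER_VALUE) -> int:
    """Total cache size for one sequence at full context length."""
    return values_per_token_per_layer * layers * tokens * bytes_per_value


# Conventional attention stores a key and a value vector for every head.
standard_bytes = kv_cache_bytes(2 * N_HEADS * HEAD_DIM)
# A latent cache stores one compressed vector (plus positional part) per token.
latent_bytes = kv_cache_bytes(LATENT_DIM + ROPE_DIM)
ratio = standard_bytes / latent_bytes

print(f"standard cache: {standard_bytes / 2**30:.1f} GiB")
print(f"latent cache:   {latent_bytes / 2**30:.1f} GiB")
print(f"reduction:      {ratio:.1f}x")
```

The absolute numbers depend entirely on the assumed dimensions. What carries over is the shape of the calculation: cache size scales linearly with context length, so shrinking the per-token footprint is what makes a very long window affordable.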

Under the hood, the model uses the SwiGLU activation function and a vocabulary of 160,000 tokens to cover multiple programming languages and natural languages efficiently. The MoE layers draw on a pool of 384 experts, with 8 experts selected per token, so each token is processed by only a small, specialized subset of the network. This granular specialization is what allows the model to switch between high-level architectural planning and low-level syntax debugging.
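The selection step can be sketched as generic top-k gating. The expert count and the number of experts chosen per token come from the article. The scoring and weighting scheme shown here (softmax over the selected logits) is a common textbook choice and an assumption, since the article does not describe the model's actual router. The name `route_token` is hypothetical.

```python
import math
import random

NUM_EXPERTS = 384  # from the article
TOP_K = 8          # from the article


def route_token(router_logits, k=TOP_K):
    """Pick the k highest-scoring experts and return (index, weight) pairs.

    Weights are a softmax over the selected logits only, so they sum to 1.
    This is a generic gating scheme, not the model's documented router.
    """
    ranked = sorted(range(len(router_logits)),
                    key=lambda i: router_logits[i],
                    reverse=True)
    chosen = ranked[:k]
    peak = max(router_logits[i] for i in chosen)  # subtract for numerical stability
    exps = [math.exp(router_logits[i] - peak) for i in chosen]
    total = sum(exps)
    return [(i, e / total) for i, e in zip(chosen, exps)]


# Stand-in router scores for one token; a real router computes these
# from the token's hidden state.
rng = random.Random(0)
logits = [rng.gauss(0.0, 1.0) for _ in range(NUM_EXPERTS)]

selected = route_token(logits)
for expert_index, weight in selected:
    print(f"expert {expert_index:3d}  weight {weight:.3f}")
```

Only the 8 selected experts run for that token, and their outputs would be combined using the weights. The remaining 376 experts are skipped, which is where the compute saving comes from.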

From Chatbots to Autonomous Agent Swarms

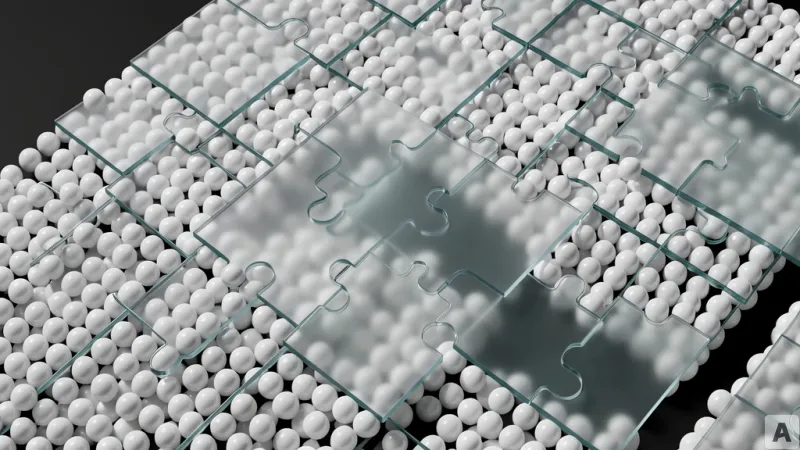

The true shift in Kimi K2.6 is the transition from a single-model response to an agent swarm. Most current LLMs attempt to solve a problem along a single linear path, which often leads to errors and hallucinations once the task outgrows what the model can reason about in one pass. Kimi K2.6 instead expands horizontally, deploying up to 300 sub-agents that collaborate across as many as 4,000 individual steps. This is not simple iteration; it is a hierarchical orchestration in which sub-agents plan, execute, verify, and correct each other's work in a loop.
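The plan, execute, verify, and correct loop described above can be sketched in miniature. This is a toy illustration under stated assumptions, not Kimi K2.6's orchestration code or API: `run_swarm`, `Result`, `toy_execute`, and `toy_verify` are hypothetical names, the sub-tasks run one after another rather than concurrently, and only the two limits (300 sub-agents, 4,000 steps) come from the article.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple

MAX_SUB_AGENTS = 300  # from the article
MAX_STEPS = 4000      # from the article


@dataclass
class Result:
    task: str
    output: str
    attempts: int
    verified: bool


def run_swarm(subtasks: List[str],
              execute: Callable[[str, str], str],
              verify: Callable[[str, str], Tuple[bool, str]],
              max_agents: int = MAX_SUB_AGENTS,
              max_steps: int = MAX_STEPS) -> Tuple[List[Result], int]:
    """Give each sub-task to one worker and retry it with verifier feedback.

    One step is one execute-and-verify round. The loop stops retrying a
    task once it verifies or the shared step budget is spent.
    """
    if len(subtasks) > max_agents:
        raise ValueError("plan needs more sub-agents than the budget allows")
    steps = 0
    results = []
    for task in subtasks:
        output, feedback, attempts, ok = "", "", 0, False
        while not ok and steps < max_steps:
            output = execute(task, feedback)     # worker produces or repairs output
            ok, feedback = verify(task, output)  # checker accepts or explains the failure
            attempts += 1
            steps += 1
        results.append(Result(task, output, attempts, ok))
    return results, steps


# Toy stand-ins for model calls: the first attempt fails, the retry succeeds.
def toy_execute(task: str, feedback: str) -> str:
    return task.upper() if feedback else task


def toy_verify(task: str, output: str) -> Tuple[bool, str]:
    return (True, "") if output.isupper() else (False, "use upper case")


results, steps_used = run_swarm(["plan layout", "write css"], toy_execute, toy_verify)
for r in results:
    print(r)
print("steps used:", steps_used)
```

The design choice worth noting is the shared step budget: because every retry draws from the same pool, a sub-task that keeps failing verification cannot consume unbounded work, and unverified results are reported rather than silently accepted.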

This swarm capability transforms the output from a snippet of code into a finished product. When tasked with building a website, the model does not just generate HTML and CSS. It coordinates a workflow that handles the full-stack implementation, including interactive elements and complex animations, converting visual inputs directly into functional interfaces. The result is a shift from code generation to system delivery.

The reported benchmark results are consistent with this architectural leap. On SWE-bench Verified, which measures a model's ability to resolve real-world software engineering issues, Kimi K2.6 achieved an 80.2% success rate, a 3.4-point improvement over the 76.8% recorded by Kimi K2.5. The most striking divergence appears on the DeepSearchQA benchmark, where Kimi K2.6 reached an F1 score of 92.5%, well ahead of GPT-5.4 at 78.6% and Gemini 3.1 Pro at 81.9%. This suggests that the agent swarm approach is more effective at deep information retrieval and synthesis than the monolithic reasoning paths used by its competitors, although benchmark scores alone cannot isolate how much of the gap comes from the swarm itself.

Reasoning capabilities extend into mathematics and the hard sciences as well. The model scored 96.4% on the AIME 2026 benchmark and 90.5% on GPQA-Diamond. When using Python for visual reasoning on the MathVision benchmark, it achieved 93.2%, indicating that it can analyze complex diagrams and formulas with high accuracy. Taken together, these results suggest that the model is doing more than producing isolated answers: it is managing a multi-step process of planning, tool use, and verification.

This capability removes the human from the loop of basic orchestration. Instead of managing the AI, the developer now manages the objective, while the AI manages the agents, the environment, and the execution. The model operates as a 24-hour autonomous coordinator, capable of scheduling tasks and executing code in the background without manual intervention.

Software development is moving away from the era of the assistant and into the era of the autonomous architect.