Developers attempting to run large language models within WebAssembly sandboxes usually hit a wall known as the sandbox tax. Every time a model needs to move data from the isolated Wasm environment to the GPU for acceleration, the system triggers a memory copy operation. This movement creates a persistent bottleneck of latency and memory duplication that degrades response times and wastes precious VRAM. For years, the industry has accepted this trade-off as the price of security and portability, treating the gap between the virtual machine and the hardware accelerator as an inevitable friction point.

The Mechanics of UMA and Wasmtime Integration

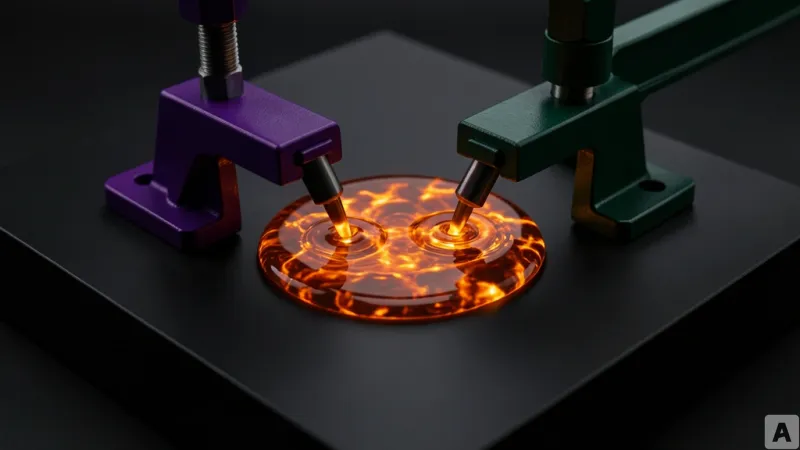

To eliminate this overhead, a new implementation leverages the Unified Memory Architecture (UMA) of Apple Silicon, where the CPU and GPU share a single pool of physical memory. This approach removes the need for data duplication by establishing a direct memory bridge through three specific technical layers. First, the system calls `mmap` with the `MAP_ANON | MAP_PRIVATE` flags to reserve an anonymous memory region whose base address is aligned to 16KB, the page size on Apple Silicon. Page alignment matters because Metal only accepts page-aligned pointers, with lengths that are a multiple of the page size, when it wraps existing memory without copying.
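The allocation step can be sketched in C roughly as follows. This is a minimal sketch under stated assumptions, not the implementation's actual code: `region_alloc`, `is_page_aligned`, and `region_free` are hypothetical helper names, and the page size is queried at run time instead of hardcoding 16KB so the same code also runs on other POSIX systems.

```c
#define _DEFAULT_SOURCE /* glibc: expose MAP_ANON in strict -std modes */
#include <assert.h>
#include <stddef.h>
#include <stdint.h>
#include <sys/mman.h>
#include <unistd.h>

/* Round len up to a whole number of pages (16KB pages on Apple Silicon). */
static size_t round_up_to_page(size_t len) {
    size_t page = (size_t)sysconf(_SC_PAGESIZE);
    return (len + page - 1) / page * page;
}

/* Hypothetical helper: reserve an anonymous, private, zero-filled region.
 * Returns NULL on failure; on success *out_len holds the rounded length. */
static void *region_alloc(size_t len, size_t *out_len) {
    assert(len > 0);
    size_t rounded = round_up_to_page(len);
    void *p = mmap(NULL, rounded, PROT_READ | PROT_WRITE,
                   MAP_ANON | MAP_PRIVATE, -1, 0);
    if (p == MAP_FAILED) {
        return NULL;
    }
    *out_len = rounded;
    return p;
}

/* mmap returns page-aligned addresses; this check makes that explicit. */
static int is_page_aligned(const void *p) {
    size_t page = (size_t)sysconf(_SC_PAGESIZE);
    return ((uintptr_t)p % page) == 0;
}

/* Release a region obtained from region_alloc; returns 0 on success. */
static int region_free(void *p, size_t len) {
    return munmap(p, len);
}
```

A caller would request at least as many bytes as the Wasm linear memory needs, keep the returned pointer and rounded length, and hand that same pair to both the Wasm runtime and the GPU buffer wrapper.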

Second, the implementation uses the Metal framework, specifically the `MTLDevice.makeBuffer(bytesNoCopy:length:options:deallocator:)` method. This lets Metal wrap the existing pointer returned by `mmap` in a GPU buffer without copying the underlying data. Third, the Wasmtime runtime is configured with a custom `MemoryCreator` implementation. Instead of letting the runtime allocate linear memory internally, the `MemoryCreator` directs Wasmtime to use the pre-allocated, aligned memory region.

Technical validation of this pipeline began with a General Matrix Multiplication (GEMM) shader performing 128x128 matrix operations. The test verified that all 16,384 elements remained error-free, and a pointer identity check confirmed that the `MTLBuffer.contents()` pointer was identical to the original `mmap` pointer. The impact on physical memory was stark. In a standard explicit copy path, the Resident Set Size (RSS) increased by 16.78MB. In the zero-copy path, the RSS increase was a negligible 0.03MB. This architecture was then scaled to a real-world scenario using a 2021 M1 MacBook Pro, running a 4-bit quantized Llama 3.2 1B model (695MB) integrated with the MLX framework.
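An element-by-element check of this kind can be sketched in C as a CPU reference GEMM plus a mismatch counter. This is an illustrative assumption about how such a validation might look, not the test harness used above: `gemm_reference`, `count_mismatches`, and the tolerance parameter are hypothetical, and the GPU result would be read directly from the shared region.

```c
#include <stddef.h>

enum { GEMM_N = 128 }; /* 128 x 128 = 16,384 output elements */

/* CPU reference: c = a * b for row-major GEMM_N x GEMM_N float matrices. */
static void gemm_reference(const float *a, const float *b, float *c) {
    for (size_t i = 0; i < GEMM_N; i++) {
        for (size_t j = 0; j < GEMM_N; j++) {
            float acc = 0.0f;
            for (size_t k = 0; k < GEMM_N; k++) {
                acc += a[i * GEMM_N + k] * b[k * GEMM_N + j];
            }
            c[i * GEMM_N + j] = acc;
        }
    }
}

/* Count elements where |got - want| exceeds tol. NaN counts as a mismatch. */
static size_t count_mismatches(const float *got, const float *want, float tol) {
    size_t bad = 0;
    for (size_t i = 0; i < (size_t)GEMM_N * GEMM_N; i++) {
        float d = got[i] - want[i];
        if (d < 0.0f) {
            d = -d;
        }
        if (!(d <= tol)) {
            bad++;
        }
    }
    return bad;
}
```

In a zero-copy setup, `got` would point into the same `mmap` region that the GPU wrote to, so a count of zero confirms both the shader's arithmetic and that CPU and GPU really see the same bytes.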

Solving the Impedance Mismatch of GPU Acceleration

This shift reveals a fundamental architectural divide between discrete GPU environments and unified systems. In a traditional setup, data must travel from the Wasm sandbox to host memory, and then across the PCIe bus to the GPU's dedicated VRAM. Each hop is a separate copy, so the data is duplicated twice and pays the transfer latency twice: a structural impedance mismatch between the isolated virtual machine and the hardware accelerator. On Apple Silicon, the CPU and GPU address the same physical memory rather than exchanging data over PCIe, so the GPU can read the exact same pointer the CPU is using.

While the reduction in latency is beneficial, the true breakthrough lies in memory efficiency, particularly regarding the KV Cache. In Transformer-based models, the KV Cache stores the keys and values computed for previous tokens so they are not recalculated at every step. As conversations grow longer, this cache can expand to several hundred megabytes. In a copy-based system, the memory footprint for this cache effectively doubles because the data must exist in both the sandbox and the GPU buffer. With zero-copy, this duplication disappears.
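The arithmetic behind that doubling can be sketched in C using the standard KV cache sizing formula (two tensors, keys and values, per layer). The model dimensions used in the usage note are assumptions for a Llama-3.2-1B-style configuration with an fp16 cache and are not taken from the measurements above. `kv_cache_bytes` and `resident_bytes` are hypothetical helper names.

```c
#include <stdint.h>

/* KV cache size in bytes: keys and values (factor of 2) for every layer,
 * KV head, head dimension, and cached token. */
static uint64_t kv_cache_bytes(uint64_t layers, uint64_t kv_heads,
                               uint64_t head_dim, uint64_t tokens,
                               uint64_t bytes_per_elem) {
    return 2u * layers * kv_heads * head_dim * tokens * bytes_per_elem;
}

/* Memory actually resident for the cache: a copy-based path holds one copy
 * in the sandbox and one in the GPU buffer; a zero-copy path holds one. */
static uint64_t resident_bytes(uint64_t cache_bytes, int zero_copy) {
    return zero_copy ? cache_bytes : 2u * cache_bytes;
}
```

With the assumed values of 16 layers, 8 KV heads, a head dimension of 64, and 2 bytes per element, a context of 8,192 tokens gives `kv_cache_bytes(16, 8, 64, 8192, 2)` = 268,435,456 bytes (256 MiB). The copy-based path would hold 512 MiB for the same cache, while the zero-copy path holds 256 MiB.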

This efficiency change transforms the economics of deploying local AI. With the duplication gone, a developer can fit more concurrent AI agents on the same hardware without increasing the RAM requirement, in theory approaching twice as many when duplicated buffers dominate each agent's footprint. Furthermore, the cost of crossing the host function boundary during Wasm-to-GPU dispatch is negligible compared to the actual inference time. The bottleneck is no longer the movement of data, but the computation itself.

Removing the memory copy turns the Wasm sandbox from a restrictive isolation chamber into a high-performance control plane for hardware acceleration.