A developer recently attempted to build a system that connects real-time sensor data directly to a Large Language Model for instant analysis. The project exposed a recurring friction point in modern AI architecture: the long path data travels from the storage layer, through the backend, and finally into the model. When time-series data arrives at thousands of points per second, the latency added by each of these hops is not just a technical nuisance but a failure point that degrades the quality of the entire service.

The Architecture of Integration

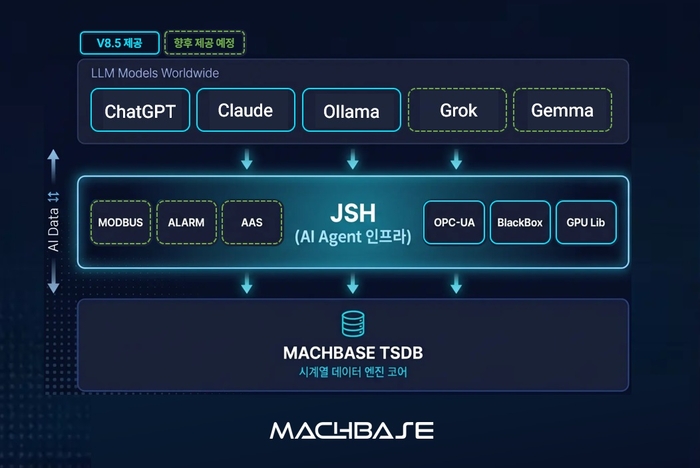

Markbase is addressing this bottleneck by fundamentally expanding the role of the time-series database. In its latest evolution, the platform has introduced an internal script execution environment. This transition transforms the database from a passive container that merely holds values into an active system capable of executing specific commands based on the data it stores. By moving the logic into the database itself, Markbase eliminates the need to export massive datasets to an external processing layer before they can be utilized.

To support this active role, Markbase has integrated REST API and MQTT connectivity directly into its core. The inclusion of MQTT is particularly significant for IoT environments, because it allows the database to receive signals from low-power devices and push processed results back to external endpoints without intermediary middleware. The result is a closed-loop system in which the database acts as the primary communication hub.
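
The closed loop described above can be illustrated with a small in-memory simulation. This is only a sketch of the message flow, not Markbase's actual interface: the `ToyHub` class, the topic names, and the 80.0 threshold are all hypothetical, and no real MQTT broker or network is involved.

```python
from collections import defaultdict
from typing import Callable, Dict, List


class ToyHub:
    """Hypothetical stand-in for a database that also brokers messages."""

    def __init__(self) -> None:
        self.rows: List[dict] = []  # readings "stored" by the database
        self.subscribers: Dict[str, List[Callable[[dict], None]]] = defaultdict(list)

    def subscribe(self, topic: str, handler: Callable[[dict], None]) -> None:
        self.subscribers[topic].append(handler)

    def publish(self, topic: str, message: dict) -> None:
        # Deliver the message to every handler registered for this topic.
        for handler in self.subscribers[topic]:
            handler(message)

    def ingest(self, message: dict) -> None:
        """Store the reading, then run a rule on it without leaving the hub."""
        self.rows.append(message)
        if message["value"] > 80.0:  # assumed alert threshold
            self.publish(
                "alerts/temperature",
                {"sensor": message["sensor"], "value": message["value"], "status": "over_limit"},
            )


hub = ToyHub()
received_alerts: List[dict] = []

# The hub consumes sensor messages itself; an external endpoint listens for alerts.
hub.subscribe("sensors/temperature", hub.ingest)
hub.subscribe("alerts/temperature", received_alerts.append)

hub.publish("sensors/temperature", {"sensor": "t1", "value": 72.5})
hub.publish("sensors/temperature", {"sensor": "t1", "value": 85.0})
```

Both readings end up in `hub.rows`, while only the second one triggers a message on the alert topic. The point of the sketch is that storage, rule evaluation, and outbound messaging happen in one place, with no separate service sitting between the device and the result.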

Furthermore, the system has expanded its data flexibility. While time-series databases traditionally excel at structured numerical data, Markbase now incorporates native JSON processing capabilities. This allows developers to handle diverse log formats and sensor values within a single environment, ensuring that the context of the data is preserved regardless of its original structure.
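
To make the idea of handling mixed payloads concrete, here is a minimal sketch using only Python's standard `json` module. It does not use any Markbase API: the payload shape (`ts`, `device`, nested readings) and the helper names `flatten` and `to_rows` are assumptions chosen for illustration. Numeric leaves become time-series rows, and non-numeric fields are kept as tags so their context is not lost.

```python
import json
from typing import Any, Dict, Iterator, List, Tuple


def flatten(payload: Dict[str, Any], prefix: str = "") -> Iterator[Tuple[str, Any]]:
    """Yield (dotted_name, leaf_value) pairs from a nested JSON object."""
    for key, value in payload.items():
        name = f"{prefix}.{key}" if prefix else key
        if isinstance(value, dict):
            yield from flatten(value, name)
        else:
            yield name, value


def to_rows(raw: str) -> Tuple[List[Tuple[int, str, float]], Dict[str, Any]]:
    """Split one JSON message into numeric time-series rows and leftover tags."""
    doc = json.loads(raw)
    ts, device = doc.pop("ts"), doc.pop("device")
    rows: List[Tuple[int, str, float]] = []
    tags: Dict[str, Any] = {}
    for name, value in flatten(doc):
        # bool is a subclass of int in Python, so exclude it explicitly.
        if isinstance(value, (int, float)) and not isinstance(value, bool):
            rows.append((ts, f"{device}.{name}", float(value)))
        else:
            tags[name] = value
    return rows, tags


message = '{"ts": 1700000000, "device": "pump-1", "temp": 41.5, "vib": {"x": 0.02, "y": 0.03}, "state": "ok"}'
rows, tags = to_rows(message)
```

Here `rows` holds one `(timestamp, series_name, value)` tuple per numeric reading, such as `pump-1.vib.x`, and `tags` keeps the `state` field alongside them.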

The Collapse of the Data Pipeline

For years, the industry has adhered to a strict separation of concerns. The database was the warehouse, a backend language such as Python was the kitchen where data was prepared, and the LLM was the waiter delivering the final result. This N-tier architecture creates a systemic inefficiency: the cost of moving the ingredients from the warehouse to the kitchen often outweighs the cost of the cooking itself.

Markbase effectively collapses this pipeline by building the kitchen and the serving desk inside the warehouse. When a database functions as a runtime, the data no longer needs to be extracted, transported, and reloaded. Instead, scripts run locally on the data, and APIs communicate results in real time. This shift changes the economics of AI agents, particularly in IoT settings, where the raw capability of a model often matters less than the cost of connecting fresh data to its context.

In these high-velocity environments, the ability to quickly link streaming data to a model's context window determines the utility of the AI. By processing data internally, Markbase reduces network overhead and slashes response times. The database is no longer a static memory bank but an execution environment that allows AI agents to make decisions based on the immediate state of the physical world.
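
One way to picture why local processing reduces overhead is to compare the size of a raw window of readings with the size of a summary computed next to the data. The sketch below is an assumption-laden illustration, not Markbase's scripting language: the `summarize_window` helper, the synthetic readings, and the 28.0 limit are all hypothetical.

```python
import json
from statistics import mean
from typing import Dict, List, Tuple


def summarize_window(points: List[Tuple[int, float]], limit: float) -> Dict[str, float]:
    """Reduce a window of (timestamp, value) points to a few context-ready facts."""
    values = [value for _, value in points]
    return {
        "count": len(values),
        "start_ts": points[0][0],
        "end_ts": points[-1][0],
        "min": min(values),
        "max": max(values),
        "mean": round(mean(values), 2),
        "over_limit": sum(1 for value in values if value > limit),
    }


# Synthetic window: 1,000 readings, one per second, cycling from 20.0 to 29.0.
points = [(1700000000 + i, 20.0 + (i % 10)) for i in range(1000)]
summary = summarize_window(points, limit=28.0)

# Compare what would cross the network in each design.
raw_size = len(json.dumps(points))
summary_size = len(json.dumps(summary))
```

In this toy case the summary is a small fraction of the raw window, and it is the summary, not the thousand points, that would be handed to the model's context. Which statistics belong in such a summary depends on the application; the fields above are only one plausible choice.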

This evolution signals a broader shift in infrastructure where the boundary between storage and compute disappears, turning the database into the functional body of the AI.