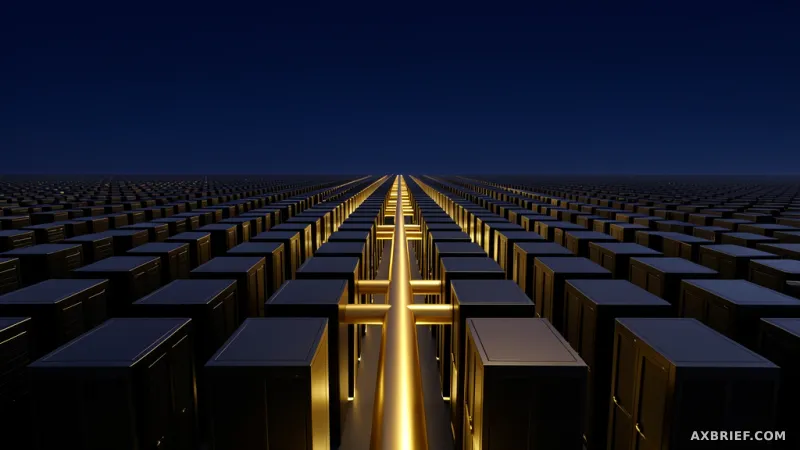

Modern machine learning engineers are hitting a wall that has nothing to do with the number of parameters in their models or the quality of their datasets. In the current race to build foundation models, the community is discovering that adding more GPUs to a cluster often yields diminishing returns. This phenomenon occurs because as the compute scale grows, the communication overhead between thousands of chips becomes the primary constraint. When data packets stall or collide across a network, the most expensive GPUs in the world sit idle, waiting for the next batch of gradients to arrive. This communication bottleneck has transformed infrastructure design from a secondary concern into the central challenge of AI development.

The Architecture of AWS P-Series Compute

To address these scaling challenges, AWS offers a hierarchy of GPU instances aimed at the full lifecycle of a foundation model: pre-training, post-training, and inference. The P5 instance family serves as the established baseline, with the `p5.48xlarge` instance featuring eight NVIDIA H100 GPUs. The P6 family raises computational density further. It introduces the `p6-b200.48xlarge` and `p6-b300.48xlarge` instances, built on NVIDIA's Blackwell-generation B200 and B300 GPUs. These chips target the throughput required for trillion-parameter models, with a heavy emphasis on the capacity and bandwidth of High Bandwidth Memory (HBM).

Performance in these environments is quoted in FLOPS, or floating-point operations per second. The P6 instances deliver substantially more FLOPS per GPU than the P5 generation, particularly in lower-precision formats such as BF16 or FP8. Peak FLOPS only help if the data arrives in time, though. Because model sizes are growing faster than memory bandwidth, the ability to move data efficiently within the chip and across the memory bus often determines training throughput more than peak compute does.
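The interplay between FLOPS and memory bandwidth described above is commonly reasoned about with a roofline model. The sketch below is a minimal illustration, not a benchmark: `PEAK_FLOPS` and `MEM_BW` are hypothetical round numbers chosen for easy arithmetic, not the published specifications of any H100, B200, or B300 part, and the byte count assumes each matrix is read or written exactly once.

```python
def attainable_flops(peak_flops: float, mem_bandwidth: float, intensity: float) -> float:
    """Roofline bound: the lower of peak compute and bandwidth * arithmetic intensity."""
    return min(peak_flops, mem_bandwidth * intensity)


def matmul_intensity(m: int, n: int, k: int, bytes_per_element: int) -> float:
    """FLOPs per byte for C[m,n] = A[m,k] @ B[k,n].

    Assumes A and B are each read once and C is written once (no cache reuse modeled).
    """
    flops = 2 * m * n * k
    bytes_moved = bytes_per_element * (m * k + k * n + m * n)
    return flops / bytes_moved


# Hypothetical accelerator, NOT a vendor specification.
PEAK_FLOPS = 1.0e15  # 1 PFLOP/s of low-precision compute
MEM_BW = 4.0e12      # 4 TB/s of HBM bandwidth
BF16_BYTES = 2

# Intensity above this "ridge point" is compute-bound; below it is memory-bound.
ridge_point = PEAK_FLOPS / MEM_BW

shapes = {
    "large square matmul": (4096, 4096, 4096),
    "single-row matmul (batch of 1)": (1, 4096, 4096),
}
for label, (m, n, k) in shapes.items():
    intensity = matmul_intensity(m, n, k, BF16_BYTES)
    bound = "compute-bound" if intensity >= ridge_point else "memory-bound"
    achieved = attainable_flops(PEAK_FLOPS, MEM_BW, intensity)
    print(f"{label}: {intensity:.1f} FLOPs/byte, {bound}, "
          f"{achieved / PEAK_FLOPS:.1%} of peak")
```

Under these assumed numbers, the large square matmul sits well above the ridge point, while the single-row case does roughly one FLOP per byte and is limited entirely by memory bandwidth. That gap is why HBM capacity and bandwidth matter as much as the headline FLOPS figure.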

This hardware layer is supported by an open-source software ecosystem that manages the complexity of distributed workloads. At the cluster management level, Slurm handles job scheduling and Kubernetes handles container orchestration, so that work is placed evenly across the fleet. Model development and distributed training are implemented in frameworks such as PyTorch and JAX, which let developers define how tensors are split across multiple GPUs. To maintain system health, an observability layer of Prometheus for metric collection and Grafana for visualization provides real-time diagnostics on GPU utilization and network health.

From Raw Compute to Network Fabric Dominance

For years, the industry focused on intra-server communication, ensuring that GPUs within a single chassis could exchange data at very high bandwidth. This is handled by NVLink and NVSwitch, which accelerate collective communication patterns such as all-reduce, where every GPU contributes a partial result (typically gradients), the contributions are summed, and the total is delivered back to every GPU. NVLink is highly efficient, but on these instances its reach ends at the boundary of a single server. The real challenge arises when a model is so large that it must be spread across thousands of servers, turning the entire data center into a single, massive computer.
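To make the all-reduce pattern concrete, here is a minimal pure-Python simulation of the ring variant, one common way to implement it. This is an illustrative sketch only: it does not claim to reflect how any particular collective library schedules traffic over NVLink, and the function name `ring_all_reduce` and the list-of-lists representation of GPU buffers are assumptions made for the example.

```python
def ring_all_reduce(buffers: list[list[float]]) -> list[list[float]]:
    """Simulate a sum all-reduce over ranks arranged in a ring.

    buffers[r] is the local vector on rank r. Returns the per-rank vectors
    after the collective; every rank ends with the elementwise sum.
    """
    n = len(buffers)
    length = len(buffers[0])
    assert length % n == 0, "vector length must divide evenly into n chunks"
    chunk = length // n
    data = [list(b) for b in buffers]  # copy so the inputs are left untouched

    def span(c: int) -> range:
        return range(c * chunk, (c + 1) * chunk)

    # Phase 1, reduce-scatter: in step s, rank r sends chunk (r - s) % n to its
    # right neighbor, which adds it to its own copy of that chunk.
    for s in range(n - 1):
        # Snapshot all outgoing chunks first so the sends happen "simultaneously".
        outgoing = [((r - s) % n, [data[r][i] for i in span((r - s) % n)])
                    for r in range(n)]
        for r, (c, payload) in enumerate(outgoing):
            dst = (r + 1) % n
            for offset, i in enumerate(span(c)):
                data[dst][i] += payload[offset]
    # Rank r now holds the fully reduced chunk (r + 1) % n.

    # Phase 2, all-gather: pass the finished chunks around the ring, overwriting.
    for s in range(n - 1):
        outgoing = [((r + 1 - s) % n, [data[r][i] for i in span((r + 1 - s) % n)])
                    for r in range(n)]
        for r, (c, payload) in enumerate(outgoing):
            dst = (r + 1) % n
            for offset, i in enumerate(span(c)):
                data[dst][i] = payload[offset]
    return data


# Four simulated GPUs, each holding an 8-element gradient vector.
grads = [[float(10 * r + i) for i in range(8)] for r in range(4)]
reduced = ring_all_reduce(grads)
expected = [sum(g[i] for g in grads) for i in range(8)]
print(reduced[0] == expected and all(row == reduced[0] for row in reduced))
```

Each rank sends and receives only one chunk per step, so the per-rank traffic stays close to twice the buffer size no matter how many ranks join the ring. The number of steps, however, grows with the ring, which is one reason link latency becomes more visible as the collective spans more servers.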

This is where the Elastic Fabric Adapter (EFA) changes the equation. EFA is more than a standard network interface: it uses the Scalable Reliable Datagram (SRD) protocol to spread data packets across multiple network paths, avoiding the congestion that builds up when a traditional TCP/IP flow is pinned to a single path. The critical innovation is OS-bypass RDMA (Remote Direct Memory Access). Through the libfabric API, EFA lets an application, and the GPUs it drives, exchange data directly with the memory of a remote server without involving the operating system kernel. This takes the kernel and the CPU out of the critical path, cutting latency and preventing the host from becoming a bottleneck during massive data transfers.
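The benefit of spreading packets across paths can be seen in a toy load model. The sketch below is not SRD's actual path-selection algorithm, which the text above does not specify; it only contrasts two hypothetical policies, pinning each flow to one path versus spraying packets round-robin, and reports how heavily the busiest path is loaded. The flow IDs, packet counts, and function names are all made up for illustration.

```python
def pinned_path_load(flows: dict[int, int], num_paths: int) -> list[int]:
    """Every packet of a flow follows one path, chosen here as flow_id % num_paths."""
    load = [0] * num_paths
    for flow_id, packets in flows.items():
        load[flow_id % num_paths] += packets
    return load


def sprayed_path_load(flows: dict[int, int], num_paths: int) -> list[int]:
    """Packets are spread over all paths round-robin, regardless of their flow."""
    load = [0] * num_paths
    next_path = 0
    for packets in flows.values():
        for _ in range(packets):
            load[next_path] += 1
            next_path = (next_path + 1) % num_paths
    return load


# Hypothetical traffic: flow_id -> packet count. Flows 0, 4, and 8 map to the
# same path under the pinned policy, which creates a hot spot.
flows = {0: 1000, 4: 1000, 8: 1000, 1: 10}
NUM_PATHS = 4

pinned = pinned_path_load(flows, NUM_PATHS)
sprayed = sprayed_path_load(flows, NUM_PATHS)
print("pinned :", pinned, "busiest path:", max(pinned))
print("sprayed:", sprayed, "busiest path:", max(sprayed))
```

In this toy setup the same total traffic produces a busiest path roughly four times lighter when packets are sprayed. The trade-off, which the model ignores, is that packets can arrive out of order, so the transport has to provide reliability without relying on in-order delivery along a single path.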

For the developer, this architectural shift shows up as a reduction in step time, the time it takes to complete one training iteration. In large-scale distributed training, the time spent on actual computation can be dwarfed by the time spent waiting for network synchronization. By deploying these instances within Amazon EC2 UltraClusters, which group thousands of instances into a low-latency fabric, AWS reduces this synchronization delay. The result is higher hardware utilization, meaning the expensive B200 and B300 GPUs spend more time calculating and less time idling.
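The relationship between step time, synchronization delay, and utilization can be written down as a back-of-the-envelope model. The sketch below assumes a ring all-reduce, whose bandwidth term is commonly approximated as 2(N-1)/N times the buffer size divided by the link bandwidth, and it ignores latency. Every number in it (gradient size, rank count, bandwidths, compute time) is a hypothetical placeholder, not a measurement from any AWS instance.

```python
def ring_all_reduce_seconds(num_bytes: float, num_ranks: int, link_bytes_per_s: float) -> float:
    """Bandwidth-only cost estimate of a ring all-reduce (latency is ignored)."""
    if num_ranks < 2:
        return 0.0
    return 2 * (num_ranks - 1) / num_ranks * num_bytes / link_bytes_per_s


def step_time(compute_s: float, comm_s: float, overlap_fraction: float = 0.0) -> float:
    """Compute time plus the part of communication NOT hidden behind compute."""
    exposed_comm = comm_s * (1.0 - overlap_fraction)
    return compute_s + exposed_comm


def utilization(compute_s: float, step_s: float) -> float:
    """Fraction of the step during which the GPUs do useful work."""
    return compute_s / step_s


# Hypothetical workload, chosen for round numbers.
GRAD_BYTES = 2.0e9  # e.g. 1e9 parameters with 2-byte BF16 gradients
NUM_RANKS = 8
COMPUTE_S = 0.105   # assumed forward + backward time per step

for label, bandwidth in [("slower fabric", 100e9), ("faster fabric", 200e9)]:
    comm = ring_all_reduce_seconds(GRAD_BYTES, NUM_RANKS, bandwidth)
    total = step_time(COMPUTE_S, comm)
    print(f"{label}: comm {comm * 1e3:.1f} ms, step {total * 1e3:.1f} ms, "
          f"utilization {utilization(COMPUTE_S, total):.1%}")
```

The `overlap_fraction` parameter stands in for the common practice of overlapping gradient communication with the backward pass. Raising it has the same effect on utilization as a faster network, which is why fabric improvements and overlap techniques are usually pursued together.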

The competitive edge in AI is no longer found solely in the elegance of an algorithm, but in the physical density and efficiency of the hardware interconnects.