Developers building the next generation of AI agents have hit a frustrating wall this week. The struggle is no longer with the quality of the output but with the agonizing silence between steps. When a developer moves beyond a simple chatbot to an agent capable of autonomous planning and tool use, they encounter a recurring bottleneck: the multi-step loop. Every time an agent must reason, plan, and then execute a command, latency accumulates, and the total delay destroys the user experience. GitHub issue tabs for agent-related repositories are filling with requests for optimization, as the industry realizes that software tweaks can only do so much to mask the physical delays of current hardware.

The Specialized Architecture of TPU 8i and TPU 8t

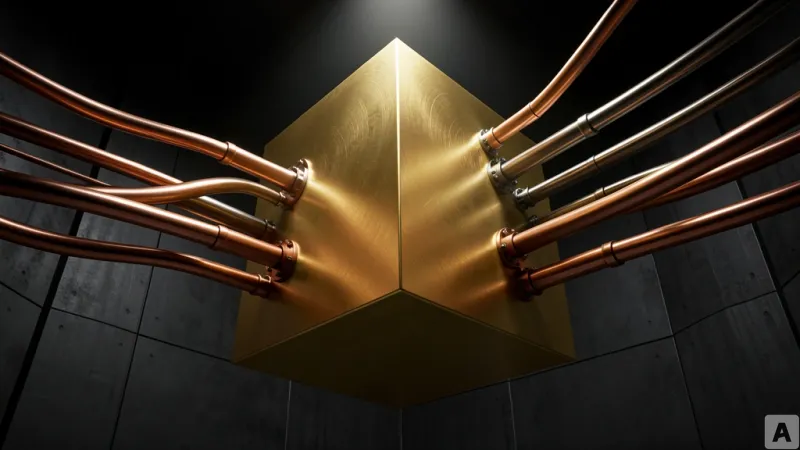

Google is addressing this infrastructure gap by splitting its Tensor Processing Unit strategy into two distinct chips: the TPU 8i and the TPU 8t. The TPU 8i is engineered specifically for agentic AI, a class of models that do not simply respond to prompts but autonomously set goals and execute workflows. The primary objective of the 8i is to accelerate the sequence of reasoning, planning, and multi-step execution. By optimizing the hardware for these iterative cycles, Google aims to eliminate the stuttering response times that currently plague autonomous agents, creating a seamless flow between the AI's internal thought process and its external actions.

Parallel to the inference-focused 8i is the TPU 8t, a chip dedicated to the grueling demands of model training. The 8t is designed to handle the most complex model architectures by utilizing a single, massive memory pool. This shared memory architecture allows multiple processors to access a vast area of data without the traditional bottlenecks associated with distributed memory across different chips. To support this, Google has deployed a full-stack infrastructure that integrates everything from the physical networking and data center layout to an energy-efficient operating system. Together, the 8i and 8t form a complementary engine: one that builds the intelligence through massive memory efficiency and another that deploys that intelligence through high-speed agentic reasoning.

From Parameter Racing to Loop Optimization

This hardware split signals a fundamental shift in the AI paradigm. For the past few years, the industry has been locked in a performance race centered on throughput and parameter count. The goal was simple: process more data, faster, to create a more knowledgeable chatbot. In that era, the interaction was a one-shot deal. A user asked a question, the model performed a single inference pass, and it delivered an answer. The hardware was optimized for this linear path.

Agentic AI changes the geometry of the problem. An agent does not operate in a straight line; it operates in a loop. It sets a goal, calls an external tool, analyzes the result, and then revises its plan based on that feedback. Because every turn of the loop pays the inference cost again, latency is no longer a minor inconvenience; it is the primary constraint on the agent's utility. If each turn of the loop takes several seconds, the agent becomes unusable for real-time tasks. The TPU 8i is the first major hardware attempt to optimize for the rotation speed of this loop rather than the raw volume of the data processed.
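To make the arithmetic behind the loop concrete, here is a minimal Python sketch comparing a one-shot response with a multi-step agent loop. Every number and name in it (`StepLatency`, `agent_loop_latency`, the 1.5-second inference pass, the 0.5-second tool call, two model passes per iteration) is a hypothetical value chosen for illustration, not a measurement of any TPU or model.

```python
from dataclasses import dataclass


@dataclass
class StepLatency:
    """Hypothetical per-step latencies in seconds (illustrative only)."""
    inference_s: float  # one model pass, e.g. a reasoning or planning step
    tool_s: float       # one external tool call


def one_shot_latency(step: StepLatency) -> float:
    """A classic chatbot turn: a single inference pass, no tools."""
    return step.inference_s


def agent_loop_latency(step: StepLatency, iterations: int,
                       passes_per_iteration: int = 2) -> float:
    """Total wall-clock time for a sequential agent loop.

    Each iteration is assumed to run `passes_per_iteration` model passes
    (for example, one to plan and one to analyze the result) plus one
    tool call, and iterations cannot overlap because each depends on
    the previous result.
    """
    per_iteration = passes_per_iteration * step.inference_s + step.tool_s
    return iterations * per_iteration


if __name__ == "__main__":
    baseline = StepLatency(inference_s=1.5, tool_s=0.5)
    faster = StepLatency(inference_s=0.75, tool_s=0.5)  # inference time halved

    print(one_shot_latency(baseline))                  # 1.5
    print(agent_loop_latency(baseline, iterations=5))  # 17.5
    print(agent_loop_latency(faster, iterations=5))    # 10.0
```

Under these assumed numbers, a 1.5-second answer becomes a 17.5-second task once it runs through five loop iterations, and halving the inference time recovers 7.5 seconds. The tool-call time is untouched by faster inference hardware, so in this toy model it sets a floor on how fast the loop can turn.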

While the TPU 8i handles the speed of the loop, the TPU 8t removes the memory constraints that previously limited how complex these agentic logic structures could be. By separating the needs of training and inference at the silicon level, Google is acknowledging that the intelligence required to plan a complex task is different from the speed required to execute it. The tension has shifted from how large a model can be to how responsive its reasoning process feels to the end user. This transition suggests that the era of the static chatbot is ending, replaced by an era of active agents where the hardware must mirror the cognitive workflow of reasoning-planning-execution.

AI competition has moved beyond the battle of model sizes and into the realm of hardware-level workflow optimization.