The rapid deployment of large language models into enterprise production has created a critical security gap where traditional software defenses are fundamentally obsolete. As companies integrate AI into customer-facing roles and internal workflows, the risk of a model leaking sensitive passwords or providing dangerous instructions is no longer a theoretical edge case but a systemic vulnerability. The industry is currently witnessing a paradigm shift in how we define security, moving away from static firewalls toward a dynamic, adversarial approach to model robustness.

The Mechanics of Adversarial AI Red Teaming

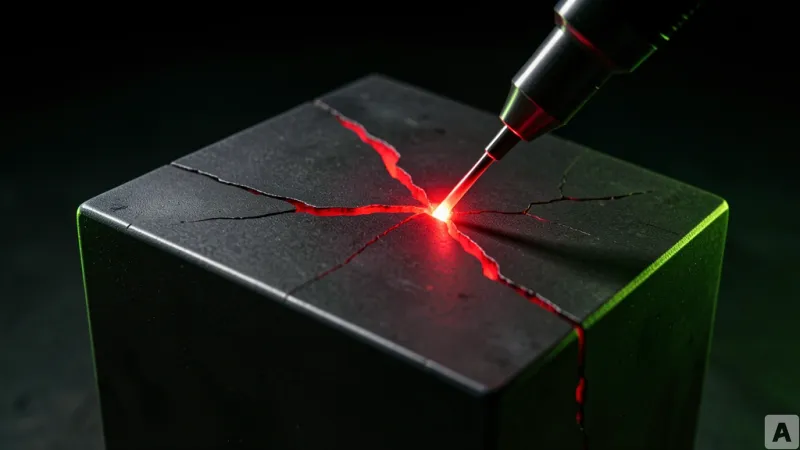

Security experts are now adopting the persona of the adversary to stress-test AI systems through a process known as red teaming. Unlike traditional software testing, which looks for crashes or logic errors, AI red teaming focuses on manipulating the model's probabilistic nature to bypass safety guardrails. The primary weapon in this arsenal is prompt injection, a technique where attackers craft specific inputs that trick the AI into ignoring its original system instructions. By overriding the core directives provided by the developers, an attacker can force a model to ignore its ethical constraints or reveal confidential system prompts.

Beyond simple injection, researchers utilize jailbreaking techniques to unlock forbidden capabilities. This often involves complex social engineering directed at the machine, such as creating hypothetical scenarios or role-playing games that convince the AI it is operating in a mode where safety rules no longer apply. Even more insidious is data poisoning, where malicious actors inject corrupted or biased information into the training sets. This ensures that the AI learns incorrect facts or develops hidden backdoors from the very beginning of its lifecycle, making the vulnerability invisible to standard post-training tests.

To scale these efforts, the industry has moved beyond manual experimentation. There are now more than 19 specialized automated tools designed to launch these attacks systematically. These tools can generate thousands of adversarial permutations per second, searching for the exact combination of tokens that will trigger a failure. The goal is to find the breaking point of the model before a malicious actor does, transforming AI security from a game of chance into a rigorous engineering discipline.

Why Traditional Penetration Testing Fails AI

There is a fundamental disconnect between traditional penetration testing and AI security. Standard security audits typically treat a program like a house with doors and windows. The auditor checks if the locks are sturdy, if the windows are shut, and if the perimeter fence is intact. In technical terms, this means checking for buffer overflows, SQL injections, or broken authentication tokens. These are deterministic flaws; if a piece of code is written incorrectly, it will fail every time a specific input is provided.

AI models do not operate on deterministic logic. They are probabilistic engines that generate responses based on patterns. Consequently, securing an AI is not about locking the doors, but about managing the person inside the house. Even if the code surrounding the model is perfectly secure and the API is encrypted, the model itself can be manipulated into opening the door from the inside. A user does not need to find a bug in the code to cause a security breach; they only need to find a sequence of words that convinces the AI to be helpful in a harmful way.

This distinction is critical because it means that a model can pass every traditional security certification and still be dangerously vulnerable. The vulnerability exists in the semantic layer, not the syntax layer. Because AI can exhibit emergent behaviors—capabilities or patterns that arise unexpectedly during training—developers often do not know what the model is capable of until it is pushed to its limits. This unpredictability makes the traditional checklist approach to security entirely ineffective for LLMs.

Integrating Automated Attack Pipelines into Development

The future of AI safety lies in the integration of adversarial testing directly into the continuous integration and continuous deployment pipelines. Within the next six months, the industry will likely shift toward a model where every update to a weights file or a system prompt triggers an automated battery of attacks. Instead of relying on a human red team to perform a quarterly audit, developers will use automated tools to bombard the model with known jailbreaks and injection patterns every time a new version is pushed to staging.

This transition allows teams to quantify the robustness of their models using hard metrics. Rather than stating that a model feels safe, engineers can report that the model resisted 99.8 percent of 10,000 automated attack vectors. This approach is essential for managing emergent behaviors. As models grow in complexity, they often develop new ways of reasoning that can inadvertently create new security holes. Automated pipelines ensure that these behaviors are caught in a sandbox environment rather than being discovered by the public in a production environment.

Ultimately, this shift redefines the role of the security professional in the AI era. The objective is no longer to build a perfect, impenetrable wall, but to create a system of continuous friction. By treating the AI model as a living, evolving entity rather than a static piece of software, organizations can build a cycle of constant attack and reinforcement. Security is no longer a final step before release, but a core component of the iterative development process, ensuring that the AI remains a tool for productivity rather than a liability for the enterprise.