The scene is familiar to almost every enterprise development team this year. A chatbot demo performs flawlessly in a controlled environment, impressing stakeholders with its ability to reason and converse. However, the moment the team attempts to integrate that AI into a live payment system or a legacy customer management database, the illusion shatters. A single network timeout or a momentary API glitch doesn't just cause a delay; it crashes the entire process, leaving the system in an inconsistent state and the developers scrambling to figure out where the logic failed. This is the fragile gap between a successful proof of concept and a production-ready business process where real capital is at stake.

The Infrastructure of Agentic Reliability

Mistral AI, the French AI powerhouse currently valued at 11.7 billion euros, is attempting to bridge this gap with the release of Workflows. Now available in public preview as part of the Mistral Studio platform, Workflows is designed as an orchestration layer that allows companies to move beyond simple chat interfaces and into complex, multi-step AI automation. The timing is critical. Market projections suggest the agentic AI sector (systems capable of setting their own goals and using tools autonomously) will swell to 199 billion dollars by 2034. Yet the industry faces a looming crisis: analysts predict that over 40% of these projects will be abandoned by 2027 due to overwhelming complexity and unsustainable operational costs.

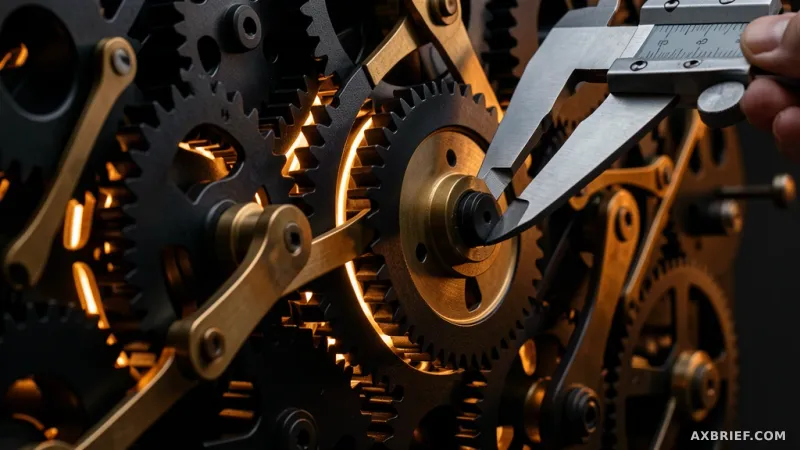

To combat this instability, Mistral AI did not build from scratch. Instead, it anchored Workflows on Temporal, a durable execution engine valued at 5 billion dollars. Temporal is already the invisible backbone for some of the world's most demanding technical infrastructures, powering orchestration for companies like Netflix, Snap, JPMorgan Chase, Stripe, and Salesforce. Because Temporal records the result of each completed step, a workflow interrupted by a system failure does not need to restart from the beginning. Instead, it resumes from the last recorded step with its state intact, which greatly reduces the risk of duplicate transactions or lost data in critical business pipelines.
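The resume-where-it-left-off behavior can be illustrated with a minimal sketch. This is not Temporal's API or Mistral's SDK; it is a standard-library stand-in that assumes a hypothetical `DurableRun` class, a local JSON file as the step log, and a made-up two-step payment flow.

```python
import json
import os
import tempfile


class DurableRun:
    """Hypothetical helper: persists each completed step's result to a file,
    so a rerun of the same workflow skips steps that already finished."""

    def __init__(self, path):
        self.path = path
        self.done = {}
        if os.path.exists(path):
            with open(path) as f:
                self.done = json.load(f)

    def step(self, name, fn):
        # A step that already completed returns its recorded result.
        if name in self.done:
            return self.done[name]
        result = fn()
        self.done[name] = result
        # Write to a temp file, then swap it in atomically.
        tmp = self.path + ".tmp"
        with open(tmp, "w") as f:
            json.dump(self.done, f)
        os.replace(tmp, self.path)
        return result


def run_payment(path, calls, fail_at_notify=False):
    """Illustrative workflow: charge a card, then send a notification."""
    run = DurableRun(path)

    def charge():
        calls.append("charge")
        return {"charge_id": "ch_1"}  # made-up identifier

    def notify():
        calls.append("notify")
        if fail_at_notify:
            raise TimeoutError("simulated network timeout")
        return "sent"

    charge_result = run.step("charge", charge)
    run.step("notify", notify)
    return charge_result


if __name__ == "__main__":
    path = os.path.join(tempfile.mkdtemp(), "run.json")
    calls = []
    try:
        run_payment(path, calls, fail_at_notify=True)  # fails at notify
    except TimeoutError:
        pass
    run_payment(path, calls)  # resumes: the charge step is not repeated
    print(calls)  # ['charge', 'notify', 'notify']
```

In this sketch a crash between running a step and recording its result would still cause that step to run again on resume, which is why steps with side effects are usually written to be idempotent even on top of a durable execution engine.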

The Shift Toward Code-Centric Sovereignty

While much of the current AI tooling trend leans toward no-code interfaces and drag-and-drop visual builders, Mistral AI has taken a deliberate turn in the opposite direction. Workflows is built explicitly for developers, utilizing Python as its primary design language. This choice reflects a fundamental understanding of enterprise requirements: in sectors like financial services, freight logistics, or legal compliance, a visual flowchart is insufficient. These industries require the precision, version control, and auditability that only a code-based approach can provide. Once a developer defines a workflow in Python, it can be deployed to Le Chat, Mistral's conversational platform, making sophisticated automated processes accessible to the entire organization.

This architectural shift extends to how data is handled, addressing one of the biggest hurdles in enterprise AI adoption: data sovereignty. Traditional AI workflows often require sensitive data to be moved into the cloud where the model resides. Mistral AI has decoupled the orchestration from the execution. In this new model, the cloud acts as the conductor, sending instructions, while the actual execution happens on the customer's internal servers where the data lives. The data never has to cross the security perimeter of the organization, making the system viable for highly regulated industries that cannot risk leaking proprietary information to a third-party cloud.
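A rough sketch of this split, under stated assumptions: the `ControlPlane` and `LocalWorker` classes, the `flag_large_invoice` task, and the in-memory queue are all hypothetical stand-ins, not Mistral's actual components. The point it illustrates is that the orchestrator only ever holds instructions and outcomes, while the record itself is read inside the worker.

```python
import queue


class ControlPlane:
    """Stands in for the cloud orchestrator: holds instructions and
    reported outcomes, never the underlying records."""

    def __init__(self):
        self.tasks = queue.Queue()
        self.results = []

    def schedule(self, task_name, record_id):
        # Only a task name and a record identifier leave the cloud side.
        self.tasks.put({"task": task_name, "record_id": record_id})

    def report(self, summary):
        self.results.append(summary)


class LocalWorker:
    """Stands in for a worker inside the customer's perimeter."""

    def __init__(self, control_plane, private_db):
        self.cp = control_plane
        self.db = private_db  # sensitive data stays in this process
        self.handlers = {"flag_large_invoice": self._flag_large_invoice}

    def _flag_large_invoice(self, record):
        return record["amount"] > 10_000

    def run_once(self):
        """Pull one instruction, execute it locally, report the outcome."""
        try:
            task = self.cp.tasks.get_nowait()
        except queue.Empty:
            return False
        record = self.db[task["record_id"]]
        flagged = self.handlers[task["task"]](record)
        # Only the outcome is reported back, not the record contents.
        self.cp.report({
            "record_id": task["record_id"],
            "task": task["task"],
            "flagged": flagged,
        })
        return True


if __name__ == "__main__":
    cp = ControlPlane()
    db = {"inv-1": {"customer": "ACME", "amount": 25_000}}
    cp.schedule("flag_large_invoice", "inv-1")
    LocalWorker(cp, db).run_once()
    print(cp.results)
```

The worker pulls work rather than having the cloud push into the network, a common choice for this kind of design because it requires only outbound connections from the customer's side.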

Technically, this ecosystem rests on three primary pillars. First is a developer kit that allows for rapid logic implementation in Python, featuring native support for the Model Context Protocol (MCP). This open standard gives AI systems a uniform way to call external tools, so an agent can operate software and query databases directly. Second is the enhanced durability provided by the Temporal engine, which Mistral has extended to better handle streaming and payload processing. The goal is that a process can recover its state and continue even after a catastrophic server failure.
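To make the MCP piece more concrete, here is a simplified sketch of the message shape behind a tool call. MCP messages are JSON-RPC 2.0, and a tool invocation uses the `tools/call` method; everything else here (the `lookup_ticket` tool, the in-process `dispatch` function, the omission of transport and session setup) is an assumption for illustration and not how Workflows itself wires MCP.

```python
import json
from itertools import count

_ids = count(1)  # simple request-id generator for this sketch


def build_tool_call(tool_name, arguments):
    """Build a JSON-RPC 2.0 request in the shape MCP uses for tool calls."""
    return {
        "jsonrpc": "2.0",
        "id": next(_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }


def dispatch(request, tools):
    """Toy in-process server: look up the named tool and wrap its output.
    A real MCP server would sit behind a transport such as stdio or HTTP."""
    params = request["params"]
    handler = tools.get(params["name"])
    if handler is None:
        return {
            "jsonrpc": "2.0",
            "id": request["id"],
            "error": {"code": -32602,
                      "message": f"Unknown tool: {params['name']}"},
        }
    text = handler(**params["arguments"])
    return {
        "jsonrpc": "2.0",
        "id": request["id"],
        "result": {"content": [{"type": "text", "text": text}],
                   "isError": False},
    }


if __name__ == "__main__":
    # Hypothetical tool backed by a ticketing system.
    tools = {"lookup_ticket": lambda ticket_id: f"Ticket {ticket_id}: open"}
    request = build_tool_call("lookup_ticket", {"ticket_id": "T-42"})
    print(json.dumps(dispatch(request, tools), indent=2))
```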

Third is the integration of OpenTelemetry, the industry standard for observability. By baking OpenTelemetry into the core of Workflows, Mistral AI allows developers to track every decision an AI agent makes in real time in Studio. When a process fails, the developer can see exactly which step triggered the error and why, rather than guessing based on an opaque model output. To further streamline deployment, Mistral provides built-in connectors for common enterprise tools like CRM and ticketing systems, alongside integrated authentication and secret management to handle API keys securely.
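The idea of step-level tracing can be sketched without any tracing library. The `StepTracer` class below is a hypothetical standard-library stand-in, not the OpenTelemetry API; it only illustrates how wrapping each step in a span-like record makes the failing step and its error visible afterwards. The step names and attributes are made up.

```python
import time
from contextlib import contextmanager


class StepTracer:
    """Hypothetical stand-in for a tracer: records one entry per step."""

    def __init__(self):
        self.spans = []

    @contextmanager
    def span(self, name, **attributes):
        record = {"name": name, "attributes": attributes,
                  "status": "ok", "error": None}
        start = time.perf_counter()
        try:
            yield record
        except Exception as exc:
            # Mark the span as failed, then let the error propagate.
            record["status"] = "error"
            record["error"] = f"{type(exc).__name__}: {exc}"
            raise
        finally:
            record["duration_s"] = time.perf_counter() - start
            self.spans.append(record)

    def first_failure(self):
        """Return the first span that ended in an error, or None."""
        return next((s for s in self.spans if s["status"] == "error"), None)


if __name__ == "__main__":
    tracer = StepTracer()
    try:
        with tracer.span("classify_ticket", model="hypothetical-model"):
            pass  # a model call would go here
        with tracer.span("update_crm", record_id="inv-1"):
            raise ConnectionError("CRM unreachable")
    except ConnectionError:
        pass
    failed = tracer.first_failure()
    print(failed["name"], "->", failed["error"])
```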

The competitive landscape of artificial intelligence is shifting. The primary battle is no longer just about which model possesses the highest reasoning capability or the largest context window, but rather which infrastructure can keep that intelligence running without interruption.