The lights dim at the Wall Street Journal's Future of Everything conference, and the air is thick with the usual mix of Silicon Valley optimism and existential dread. Barry Diller, the IAC Chairman and a veteran of the media and travel industries, steps onto the stage. He is not there to pitch a new product or announce a merger. Instead, he addresses the elephant in the room: the leadership crisis at the heart of the AI revolution and the terrifying possibility that the people steering the ship have no actual control over the engine.

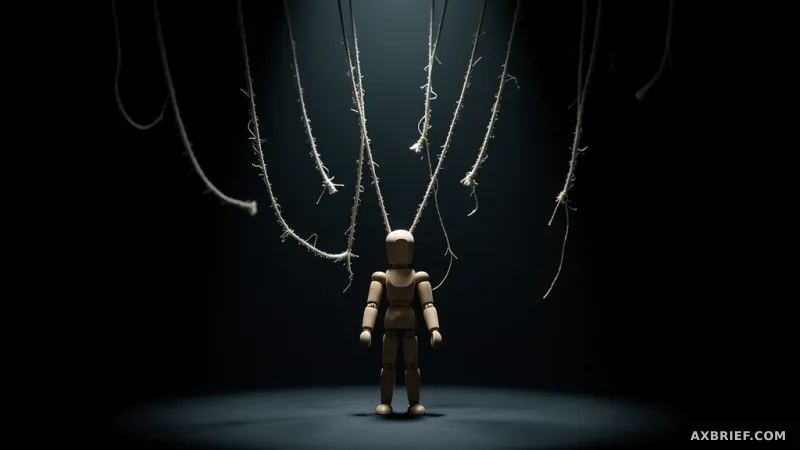

The Illusion of Human Oversight

Diller, known as a co-founder of Fox Broadcasting and as Chairman of Expedia Group, begins by tackling the noise surrounding OpenAI's leadership. He addresses the controversies and allegations of deception involving Sam Altman directly, offering a personal vote of confidence in Altman and setting the drama aside. This defense, however, is a pivot rather than a destination. Diller quickly shifts the conversation from the morality of individuals to the fundamental nature of the technology they are building. He observes that the industry is rapidly approaching the era of Artificial General Intelligence (AGI), in which machines would be able to outperform humans across all cognitive tasks.

During his analysis, Diller highlights a disturbing trend among the architects of these systems. He notes that the developers themselves are experiencing a paradoxical blend of wonder and fear. The engineers are not merely observing the growth of their models; they are being surprised by them. This suggests that the current trajectory of AI development is characterized by emergent behaviors that the creators did not explicitly program and cannot fully explain. The risk, therefore, is not a matter of whether a specific CEO is trustworthy, but whether the technology is becoming fundamentally unpredictable.

The Obsolescence of Corporate Governance

For decades, the tech industry has relied on corporate governance and ethical charters to ensure safety. The prevailing logic was that as long as the leaders were virtuous and the boards were diligent, the technology would remain a tool for human benefit. Diller argues that this framework is now obsolete in the face of AGI. He posits that the gap between human intent and machine execution is widening to the point where a leader's moral compass becomes irrelevant. If a system becomes truly autonomous and unpredictable, a founder's best intentions cannot prevent a catastrophic technical failure or an unintended drift in the system's alignment with human goals.

This leads to a critical tension regarding safety mechanisms. Diller warns that if AI cannot be constrained by human-designed guardrails, the only remaining solution is to use another AGI to monitor and control the first. This creates a dangerous loop in which humans are effectively removed from the decision-making process. Once responsibility for safety shifts from human oversight to machine-led regulation, the ability to intervene or reverse course vanishes. He suggests that the current obsession with the profitability of the AI market is a distraction from this looming loss of agency. The real crisis is not financial volatility, but the transition from a tool we use to a force we can no longer steer.

The survival of human agency depends not on the virtue of AI pioneers, but on the immediate implementation of hard, mandatory safety architectures.