A copyright manager at a major music streaming platform stares at a waveform on a high-resolution monitor. An automated AI detector has flagged a new submission as synthetic, but the composer insists every note was written by hand. The manager realizes the current tool is essentially guessing, relying on vague notions of musical style and composition patterns that can be easily mimicked or evolved. This tension between subjective aesthetic analysis and objective proof has created a crisis of trust in digital rights management, where the line between human creativity and algorithmic generation is blurred by the very tools meant to distinguish them.

The Forensic Architecture of ArtifactNet

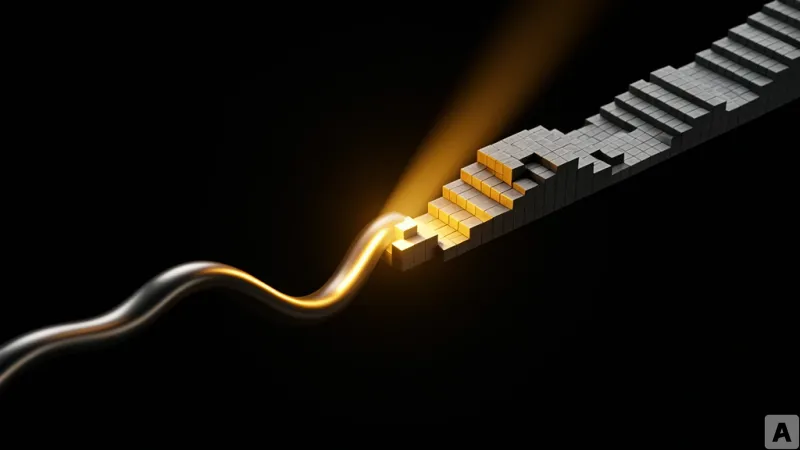

ArtifactNet operates not as a critic of style, but as a forensic investigator of the physical path AI music takes during generation. The framework targets a specific technical bottleneck: Residual Vector Quantization (RVQ). Because nearly all commercial AI music generators must pass continuous audio data through RVQ to convert it into discrete codebook vectors, they inevitably leave behind a quantization gap. This gap is a physical artifact, an irreversible trace of the machine's process. ArtifactNet treats these structured reconstruction residuals as a forensic signal.
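To make the idea of a quantization gap concrete, here is a minimal NumPy sketch of residual vector quantization. It is not ArtifactNet's code or any particular generator's codec: the function `rvq_encode`, the random codebooks, and the vector sizes are all illustrative assumptions. It only shows that passing continuous vectors through a stack of codebooks leaves a non-zero difference between input and reconstruction.

```python
import numpy as np

def rvq_encode(x, codebooks):
    """Quantize each row of x with a stack of codebooks, stage by stage.

    Every stage picks the codeword nearest to the residual left by the
    previous stages. Returns the chosen indices and the reconstruction.
    """
    residual = x.copy()
    recon = np.zeros_like(x)
    indices = []
    for cb in codebooks:  # cb has shape (codebook_size, dim)
        # Squared distance from every residual vector to every codeword.
        d = ((residual[:, None, :] - cb[None, :, :]) ** 2).sum(axis=-1)
        idx = d.argmin(axis=1)
        chosen = cb[idx]
        recon += chosen
        residual -= chosen
        indices.append(idx)
    return np.stack(indices, axis=1), recon

# Hypothetical data: random vectors stand in for continuous audio latents,
# and random codebooks stand in for trained ones.
rng = np.random.default_rng(0)
x = rng.normal(size=(256, 8))
codebooks = [rng.normal(size=(64, 8)) * 0.5 ** k for k in range(4)]

codes, recon = rvq_encode(x, codebooks)
gap = x - recon  # the quantization gap: what the discrete codes cannot express
print(codes.shape, float(np.mean(gap ** 2)))
```

The discrete `codes` are all a decoder ever sees, so whatever sits in `gap` is lost for good. That irreversibility is the property the article describes as a forensic trace.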

The system is remarkably lean, with only 4.0M parameters in total. The heavy lifting is done by ArtifactUNet, a 3.6M-parameter component that predicts a multiplicative mask, bounded to [0, 0.5], on the Short-Time Fourier Transform (STFT) magnitude. To achieve this precision, the model was trained through a two-stage knowledge distillation process that used Demucs v4 residuals as the teacher. This is supplemented by 7-channel Harmonic-Percussive Source Separation (HPSS) forensic features, which decompose the residuals into harmonic and percussive components. The final decision is made by a lightweight 0.4M-parameter Convolutional Neural Network (CNN) that scores 4-second segments; the verdict for the whole song is the median of those segment scores.
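Two pieces of this pipeline are easy to sketch in NumPy: a mask confined to [0, 0.5] and a median taken over 4-second segments. The sketch below is a guess at the mechanics, not the published implementation. The scaled sigmoid, the 0.5 decision threshold, the 44,100 Hz sample rate, and the function names `bounded_mask`, `split_segments`, and `track_decision` are all assumptions made for illustration.

```python
import numpy as np

def bounded_mask(logits, max_gain=0.5):
    """Squash raw network outputs into [0, max_gain] with a scaled sigmoid."""
    return max_gain / (1.0 + np.exp(-logits))

def split_segments(samples, sample_rate=44100, seconds=4.0):
    """Cut a mono signal into non-overlapping fixed-length segments."""
    seg_len = int(sample_rate * seconds)
    n = len(samples) // seg_len
    return samples[: n * seg_len].reshape(n, seg_len)

def track_decision(segment_scores, threshold=0.5):
    """Aggregate per-segment AI scores into one verdict using the median."""
    score = float(np.median(segment_scores))
    return score, score > threshold

# Random values stand in for an STFT magnitude and for the network's raw outputs.
magnitude = np.abs(np.random.default_rng(0).normal(size=(1025, 64)))
logits = np.random.default_rng(1).normal(size=magnitude.shape)
residual_mag = bounded_mask(logits) * magnitude  # at most half of each bin

segments = split_segments(np.zeros(44100 * 10))  # 10 s of audio
print(segments.shape)                            # (2, 176400)

# Hypothetical per-segment scores; one outlier segment does not flip the verdict.
score, is_ai = track_decision([0.9, 0.8, 0.1, 0.95, 0.85])
print(score, is_ai)                              # 0.85 True
```

Using the median rather than the mean means a single atypical segment, such as a silent intro, cannot swing the decision for the whole track.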

The empirical results from the ArtifactBench test, which used 6,183 tracks across 22 different generators, are strong. ArtifactNet recorded an F1 score of 0.983. More importantly, its False Positive Rate (FPR) is just 1.5%, meaning it rarely accuses a human composer of using AI. In contrast, the previous industry standard, CLAM, exhibited an FPR of 69.3%, which makes it impractical for professional copyright enforcement. The physical evidence is most apparent in the effective bandwidth of the source separation residuals. Human-composed music shows an average residual bandwidth of 1,996 Hz, while AI-generated music averages only 291 Hz. By generator, Suno v3.5 shows 170 Hz, Riffusion shows 219 Hz, and MusicGen shows 255 Hz.
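For readers less familiar with these metrics, the short Python sketch below shows how F1 and FPR are computed and what the two reported FPR values mean in practice. The confusion-matrix counts are invented for illustration and are not ArtifactBench data. Only the 1.5% and 69.3% rates come from the text, and the function names are hypothetical.

```python
def detection_metrics(tp, fp, tn, fn):
    """Compute binary-detection metrics, with 'AI' as the positive class."""
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)          # identical to the true positive rate (TPR)
    f1 = 2 * precision * recall / (precision + recall)
    fpr = fp / (fp + tn)             # share of human tracks wrongly flagged
    return {"precision": precision, "recall": recall, "f1": f1, "fpr": fpr}

def expected_false_flags(fpr, human_tracks):
    """Expected number of human-made tracks flagged as AI at a given FPR."""
    return fpr * human_tracks

# Hypothetical counts, for illustration only (not benchmark results).
metrics = detection_metrics(tp=950, fp=15, tn=985, fn=50)
print(round(metrics["fpr"], 3), round(metrics["f1"], 3))

# FPR values reported in the text, applied to 1,000 human-made uploads.
print(round(expected_false_flags(0.015, 1000)))   # 15
print(round(expected_false_flags(0.693, 1000)))   # 693
```

Put this way, the gap is stark: out of every 1,000 human-made submissions, a 1.5% FPR wrongly flags about 15, while a 69.3% FPR wrongly flags about 693.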

From Pattern Recognition to Physical Forensics

This shift represents a fundamental change in how we identify synthetic media. Previous detectors focused on distribution learning, essentially teaching the detector what AI music sounds like. The flaw in this approach is fragility: as soon as a generative model is updated or a new architecture emerges, the style shifts and the detector's performance collapses. ArtifactNet sidesteps this by focusing on why AI music is physically different. By targeting the RVQ constraint, the framework is designed to remain effective regardless of the specific model architecture or the musical genre being produced.

By shifting the basis of detection from subjective style to objective physical evidence, the approach gives the industry a measurable foundation that could serve as legal evidence in copyright disputes or as a hard filter for content platforms. However, the system is not an invincible shield. It requires 44.1 kHz input to maintain peak accuracy, and when it processes low-bitrate MP3 files the FPR climbs to 8%. There is also the threat of adversarial manipulation. In what is termed a laundry attack, users run a track through Demucs to deliberately erase the residuals, which lowers the True Positive Rate (TPR) to 94%. High-end models like Udio already present a challenge, with the TPR dipping to 87%.

Despite these vulnerabilities, the core realization is that the battle over AI authenticity has moved. It is no longer a debate about aesthetics or the soul of a composition, but a technical struggle over digital footprints.

The verification of music is moving from the realm of musicology to the realm of digital forensics.