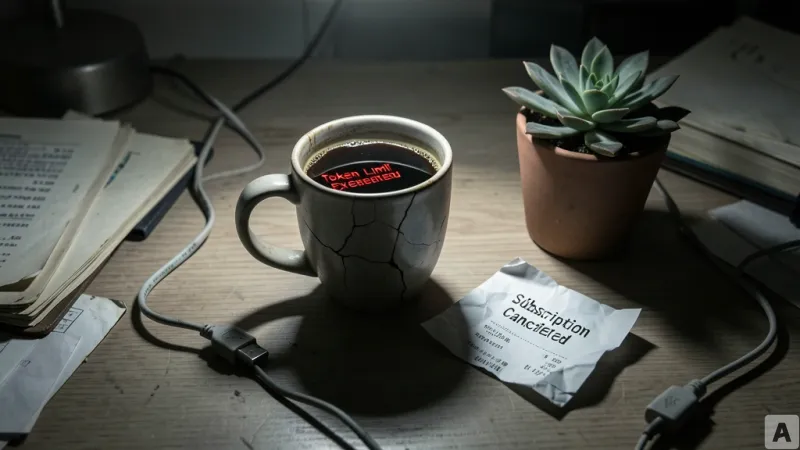

It is 10:00 AM, and a developer returns to their desk after a break, ready to dive back into the codebase. The goal is simple: ask Claude Haiku two basic questions that have nothing to do with the complex architecture of the current repository. But before the second answer can even finish streaming, a warning flashes across the screen. Token usage has hit 100 percent. In a matter of seconds, the entire resource allocation for the session has vanished, leaving the developer locked out of the tool they rely on to maintain their momentum.

The Operational Friction of the Pro Plan

This experience is not an isolated glitch but a symptom of a deeper operational breakdown within the Claude Code Pro plan. When the developer reached out to Anthropic's customer support to report the sudden depletion of tokens, the response was a masterclass in corporate friction. The initial interaction was handled by an AI support bot that failed to grasp the technical nuance of the problem. When a human agent finally took over, the resolution was equally hollow. Without verifying the user's specific plan or usage logs, the agent provided a copy-pasted manual response and abruptly closed the support ticket.

Beyond the support failure, the service's lack of transparency has become a primary point of contention. The developer noted that monthly usage limit warnings, which are not explicitly detailed in the official documentation, would appear sporadically and then vanish two hours later without explanation. This unpredictability is particularly jarring for a power user who manages a sophisticated AI pipeline. The developer does not rely on a single tool: their workflow integrates GitHub Copilot and OpenAI Codex, uses OMLX for local inference, and runs Qwen 3.5-9B through the Continue plugin. For someone accustomed to the stability of local models and established industry standards, the instability of Claude Code's resource management is an unacceptable variable in a professional production environment.

From High-Efficiency Tool to Lazy Assistant

When the subscription first began, Claude Code felt like a force multiplier. The speed was impressive, and the token allocation seemed fair relative to the output. That is no longer the case. The tool that once felt like a senior partner now behaves like a lazy assistant: working on a single project for just two hours can now completely exhaust the developer's token quota, a sharp contrast to the early days of the service.

This resource drain is compounded by a noticeable decline in the quality of the intelligence provided. In one instance, the developer tasked Claude Opus, the most powerful model in the family, with a project refactoring job. Instead of proposing a structurally sound architectural improvement, the AI suggested a superficial workaround. It was as if the developer had asked for a leaking pipe to be repaired and was told to place a bucket under the leak. The AI eventually produced the correct code after being corrected, but the detour consumed half of a five-hour token allocation on a failure of basic reasoning.

Underpinning this inefficiency is a failure in cache management. In the context of AI coding, a cache acts as a short-term memory, allowing the model to remember the code it has already processed so it does not have to re-read the entire project every time a new question is asked. Previously, this memory was persistent and efficient. Now, the cache seems to expire prematurely. It is the equivalent of a chef who has memorized a recipe perfectly, but after a five-minute break, forgets everything and must start reading the cookbook from page one. This forces the user to pay a "token tax" for the same information repeatedly, destroying both the cost-efficiency and the speed of the workflow.
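To make the "token tax" concrete, here is a minimal sketch of how a cache lifetime changes what a session is billed. It is a toy model, not Anthropic's actual billing logic: the `PromptCache` class, the `total_billed` helper, the 10x discount for cached context, and the sliding expiry window are all assumptions chosen for illustration.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass
class PromptCache:
    """Toy model of a context cache with a sliding time-to-live (hypothetical)."""
    ttl_seconds: int
    cached_cost_divisor: int = 10  # assumption: cached context bills at 1/10 of full price
    last_used: Optional[int] = None

    def bill(self, now: int, context_tokens: int) -> int:
        """Return the tokens billed for sending the project context at time `now`."""
        warm = (
            self.last_used is not None
            and now - self.last_used <= self.ttl_seconds
        )
        self.last_used = now  # every request refreshes the expiry window
        if warm:
            return context_tokens // self.cached_cost_divisor
        return context_tokens  # cold cache: the whole context is re-read at full price


def total_billed(request_times: Sequence[int], context_tokens: int, ttl_seconds: int) -> int:
    """Sum the billed context tokens over a session of timestamped requests."""
    cache = PromptCache(ttl_seconds=ttl_seconds)
    return sum(cache.bill(t, context_tokens) for t in request_times)


# Five questions about the same 10,000-token project, with an eight-minute
# break between the third and fourth (timestamps in seconds).
session = [0, 60, 120, 600, 660]

print(total_billed(session, 10_000, ttl_seconds=300))   # five-minute cache: 23000
print(total_billed(session, 10_000, ttl_seconds=3600))  # one-hour cache: 14000
```

Under these assumed numbers, a single break longer than the cache lifetime makes the session cost roughly 64 percent more for identical questions, which is the chef rereading the cookbook from page one.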

The core issue is not a lack of features, but a collapse of operational reliability. When a tool that is supposed to increase productivity by an order of magnitude begins to introduce unpredictability and waste, it ceases to be an asset and becomes a liability.

Technical brilliance cannot compensate for a lack of trust in the service layer.