For the modern AI engineer, the morning routine has become a ritual of scanning GitHub trending repositories and Hugging Face model cards to see which frontier has shifted overnight. This week, the conversation has centered on the latest move from DeepSeek. After a period of relative silence following the December release of V3.2, the research lab has returned to the spotlight with preview versions of the DeepSeek-V4 series. The developer community is now debating whether this release is an incremental update or a fundamental disruption of the LLM market, particularly in how extreme cost-efficiency can be weaponized in production environments.

The Architecture of DeepSeek-V4 Pro and Flash

The release introduces two distinct tiers designed for different operational scales: DeepSeek-V4-Pro and DeepSeek-V4-Flash. Both models are built on a Mixture of Experts (MoE) architecture, a design choice that lets them hold vast knowledge without activating every parameter for every generated token. This efficiency is paired with a 1-million-token context window, large enough to process an entire codebase or a lengthy legal document in a single prompt.
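
To make the "not every parameter fires" idea concrete, here is a minimal sketch of generic top-k expert routing in plain Python. It is not DeepSeek's router: the expert count, any shared experts, and the gating function used in V4 are not described here, and the names `moe_forward`, `gate_weights`, and the default `k=2` are hypothetical choices for illustration.

```python
import math
from typing import Callable, List, Sequence, Tuple

Vector = List[float]

def softmax(xs: Sequence[float]) -> Vector:
    """Numerically stable softmax over a short list of scores."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def moe_forward(
    token: Vector,
    experts: Sequence[Callable[[Vector], Vector]],
    gate_weights: Sequence[Vector],
    k: int = 2,
) -> Tuple[Vector, List[int]]:
    """Route one token to its top-k experts and mix their outputs.

    Experts outside the top-k are never called, so per-token compute scales
    with k (the "active" experts), not with the total number of experts.
    Assumes each expert returns a vector of the same length as its input.
    """
    # Router score for each expert: dot product of the token with its gate vector.
    scores = [sum(w * x for w, x in zip(g, token)) for g in gate_weights]
    # Indices of the k highest-scoring experts.
    top = sorted(range(len(experts)), key=lambda i: scores[i], reverse=True)[:k]
    # Softmax over the selected scores only, so the mixing weights sum to 1.
    weights = softmax([scores[i] for i in top])
    out = [0.0] * len(token)
    for w, i in zip(weights, top):
        y = experts[i](token)  # only the selected experts are evaluated
        out = [o + w * yi for o, yi in zip(out, y)]
    return out, top

# Toy usage: 8 experts that each scale the input by a different factor.
experts = [lambda x, s=i + 1: [s * v for v in x] for i in range(8)]
gates = [[float(i), 0.0] for i in range(8)]
out, chosen = moe_forward([1.0, 2.0], experts, gates, k=2)
print("experts used:", chosen)  # only 2 of the 8 experts ran
```

Only the `k` selected experts are ever called for a given token, which is why an MoE model's per-token compute tracks its active parameter count rather than its total.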

The Pro model is a behemoth in the open-weight space, with 1.6 trillion total parameters. Its MoE design, however, activates only 49 billion of them at any given inference step, balancing raw capacity with computational feasibility. At the other end of the spectrum, the Flash model is optimized for speed and lean deployment, with 13 billion active parameters out of 284 billion in total.
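
The headline numbers translate into an active-parameter ratio with simple arithmetic. The sketch below uses the Pro figures quoted above; the "about 2 FLOPs per active parameter per token" estimate is a common rule of thumb for a forward pass that ignores attention cost, not a figure published by DeepSeek, and the function names are hypothetical.

```python
def active_fraction(active_params: float, total_params: float) -> float:
    """Share of an MoE model's weights used for each generated token."""
    return active_params / total_params

def approx_forward_flops_per_token(active_params: float) -> float:
    """Rule-of-thumb estimate: ~2 FLOPs (one multiply, one add) per active weight.

    Ignores attention, which grows with context length.
    """
    return 2 * active_params

PRO_TOTAL = 1.6e12  # total parameters (from the text)
PRO_ACTIVE = 49e9   # active parameters per token (from the text)

frac = active_fraction(PRO_ACTIVE, PRO_TOTAL)
print(f"Active share: {frac:.1%}")
print(f"MoE forward pass: ~{approx_forward_flops_per_token(PRO_ACTIVE) / 1e9:.0f} GFLOPs/token")
print(f"Dense equivalent: ~{approx_forward_flops_per_token(PRO_TOTAL) / 1e9:.0f} GFLOPs/token")
```

Roughly 3% of the weights participate in each token, so per-token compute is closer to that of a ~50B dense model, even though all 1.6 trillion parameters still have to be stored.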

DeepSeek has opted for the MIT license, providing a level of permissiveness that is highly attractive for commercial integration. The physical footprint of these models is substantial, with the Pro version requiring 865GB of storage and the Flash version requiring 160GB. Developers can access the model weights directly through the DeepSeek Hugging Face repository, sparking a wave of experimentation among those attempting to host these models on local infrastructure.

The Shift From Raw Power to Computational Efficiency

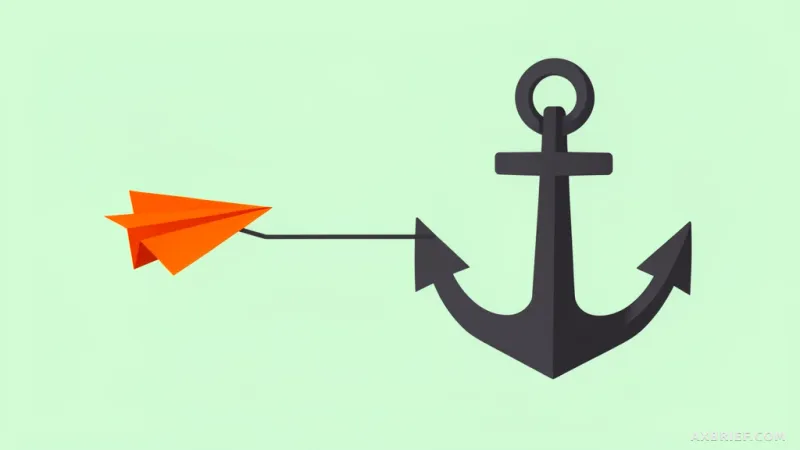

The industry has long accepted that higher intelligence requires a proportional increase in compute and cost, but DeepSeek-V4 attempts to break this correlation. The core insight lies in the dramatic reduction of resource overhead compared to its predecessor. According to the technical documentation, when operating within a 1-million-token context, DeepSeek-V4-Pro requires only 27% of the floating-point operations (FLOPs) and 10% of the KV cache occupancy of V3.2. The Flash model pushes this efficiency even further, using only 10% of the FLOPs and 7% of the KV cache of the previous generation.
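
Percentages like "27% of the FLOPs" are easier to compare as reduction factors. This small helper simply inverts the ratios quoted above; it assumes they are all measured against the same V3.2 baseline at a 1M-token context, as the documentation describes, and the names are illustrative.

```python
# Resource use at a 1M-token context, as a fraction of DeepSeek-V3.2 (figures quoted above).
RELATIVE_TO_V32 = {
    "V4-Pro": {"flops": 0.27, "kv_cache": 0.10},
    "V4-Flash": {"flops": 0.10, "kv_cache": 0.07},
}

def reduction_factor(fraction_of_baseline: float) -> float:
    """Turn 'uses X of the baseline' into 'N times less than the baseline'."""
    return 1.0 / fraction_of_baseline

for model, r in RELATIVE_TO_V32.items():
    print(
        f"{model}: FLOPs cut {reduction_factor(r['flops']):.1f}x, "
        f"KV cache cut {reduction_factor(r['kv_cache']):.1f}x"
    )
```

For Pro that works out to roughly a 3.7x cut in compute and a 10x cut in KV cache; for Flash, roughly 10x and 14x.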

This technical optimization translates directly into a pricing strategy that puts immense pressure on the proprietary models from OpenAI, Google, and Anthropic. DeepSeek's official pricing page reveals a cost structure that is difficult to ignore. The Flash model is priced at $0.14 per million input tokens and $0.28 per million output tokens. Even the high-end Pro model remains remarkably affordable at $1.74 per million input tokens and $3.48 per million output tokens.
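
A quick way to compare the two tiers is to price a concrete workload. The sketch below uses only the per-million-token list prices quoted above; the dictionary keys are illustrative labels rather than official API model IDs, and any cached-input or promotional discounts are deliberately ignored.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Pricing:
    input_per_m: float   # USD per million input tokens
    output_per_m: float  # USD per million output tokens

# List prices quoted above. Keys are illustrative labels, not official API model IDs.
PRICES = {
    "v4-flash": Pricing(input_per_m=0.14, output_per_m=0.28),
    "v4-pro": Pricing(input_per_m=1.74, output_per_m=3.48),
}

def request_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Cost in USD of one request at list price (no caching or discounts)."""
    p = PRICES[model]
    return (input_tokens * p.input_per_m + output_tokens * p.output_per_m) / 1_000_000

# Example: fill the full 1M-token context and generate 10,000 output tokens.
for name in PRICES:
    print(f"{name}: ${request_cost(name, 1_000_000, 10_000):.4f}")
```

At these list prices, a full-context request with a 10,000-token answer costs about $0.14 on Flash and about $1.77 on Pro.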

In terms of performance, the Pro model's reasoning is on par with GPT-5.2 and Gemini-3.0-Pro. It trails the absolute bleeding edge, GPT-5.4 and Gemini-3.1-Pro, by an acknowledged gap of roughly three to six months, but that trade-off is a matter of economics. With 1.6 trillion parameters, DeepSeek-V4-Pro is now the largest open-weight model available, surpassing Kimi K2.6 (1.1 trillion parameters) and GLM-5.1 (754 billion parameters).

This creates a new tension for developers, and the current trend is a shift toward local execution and quantization. Many users are testing the models via OpenRouter while waiting for quantized versions from the Unsloth team, in the hope of making the 1.6-trillion-parameter Pro model viable on consumer-grade or mid-tier enterprise hardware. The goal is no longer just to find the smartest model, but to find the most efficient way to stream a massive model into a local pipeline without sacrificing frontier-level reasoning.
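
To gauge what quantization might buy, here is a back-of-the-envelope estimate of weight storage for the Pro model at a few bit-widths. It assumes all 1.6 trillion parameters are stored (an MoE model must keep every expert available even though only a few run per token), treats the quoted sizes as decimal gigabytes, and ignores quantization metadata, layers kept at higher precision, and runtime memory such as the KV cache. The bit-widths are examples only, not formats Unsloth has announced, and the function names are hypothetical.

```python
def weight_storage_gb(total_params: float, bits_per_param: float) -> float:
    """Approximate weight storage in decimal GB (1 GB = 1e9 bytes).

    Ignores quantization metadata (scales, zero-points), layers kept at higher
    precision, and runtime memory such as activations and the KV cache.
    """
    return total_params * bits_per_param / 8 / 1e9

def implied_bits_per_param(storage_gb: float, total_params: float) -> float:
    """Average bits per parameter implied by a checkpoint's size (decimal GB)."""
    return storage_gb * 1e9 * 8 / total_params

PRO_TOTAL_PARAMS = 1.6e12  # all experts must be stored, not just the 49B active ones

for bits in (8, 4, 2):
    print(f"{bits}-bit: ~{weight_storage_gb(PRO_TOTAL_PARAMS, bits):,.0f} GB")

# The 865GB official checkpoint, read as decimal GB.
print(f"official checkpoint: ~{implied_bits_per_param(865, PRO_TOTAL_PARAMS):.1f} bits/param")
```

By this estimate a 4-bit build would be around 800 GB and a 2-bit build around 400 GB. The official checkpoint already averages roughly 4.3 bits per parameter, which suggests it is stored at reduced precision, so meaningful savings for local hosting would likely require going well below 4 bits.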

Intelligence is no longer defined solely by the size of the parameter count, but by the ability to deliver that intelligence at the lowest possible cost per token.