The rush to integrate Large Language Models (LLMs) into cybersecurity pipelines has created a dangerous paradox: the tools designed to eliminate vulnerabilities are themselves introducing a systemic risk of complacency. As enterprises accelerate their software development lifecycles, reliance on AI to automate security audits is growing faster than the practices needed to check its output. Much of the industry treats AI as a definitive oracle rather than a probabilistic assistant, a mistake that creates a false sense of security while leaving the back door open for sophisticated attackers.

The Velocity Trap of AI-Driven Security

Modern software engineering is governed by the pressure of continuous integration and continuous deployment. In this environment, traditional security measures are often viewed as bottlenecks. For years, a thorough static review, meaning an examination of code without executing it, required teams of highly skilled security researchers to spend days or weeks manually auditing thousands of lines of code. This manual rigor was slow, expensive, and difficult to scale, leading many organizations to overlook critical vulnerabilities in the pursuit of faster release cycles.

The introduction of AI-powered security tools promised a revolution. By combining LLMs with static analysis, companies can now scan entire codebases for vulnerabilities in seconds. What once took a week of human effort now takes a few API calls. This efficiency has led many C-suite executives to believe that AI has effectively solved the problem of software vulnerability. The cost of auditing has plummeted, and the speed of detection has skyrocketed, but this efficiency comes with a hidden cost. The industry is trading the certainty of a human expert for the speed of a statistical prediction.

When a company adopts an AI security tool with unconditional trust, it essentially installs a digital guard that operates on intuition rather than evidence. Much like a security robot that assumes a warehouse is empty because it is usually empty, AI tools often overlook anomalies that do not fit the patterns in their training data. The danger is not that the AI is slow, but that it is fast and confidently wrong.

The Fundamental Flaw of Probabilistic Security

To understand why AI cannot be the final word in security, one must understand the difference between prediction and proof. LLMs are not logic engines; they are probabilistic machines. When an AI analyzes a block of code and declares it safe, it is not providing a mathematical proof of security. Instead, it is predicting that this specific pattern of code typically correlates with safe code based on the massive datasets it was trained on. It is guessing the most likely outcome.

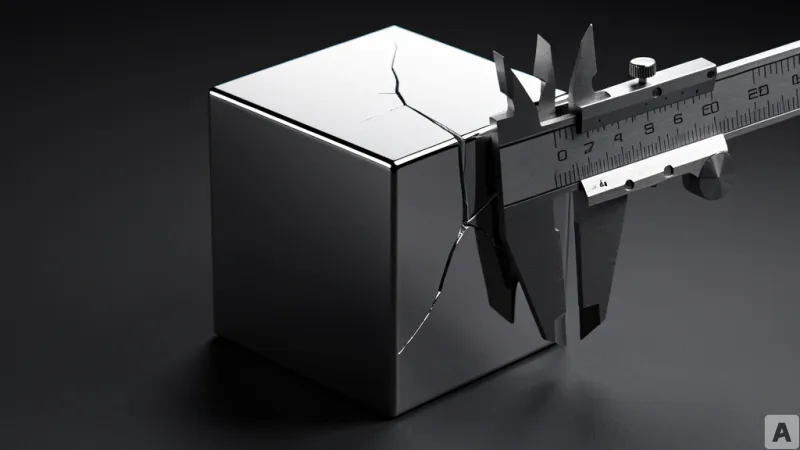
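
To put rough numbers on the gap between prediction and proof, the sketch below assumes, purely for illustration, that a scanner's "safe" verdict is right 99 percent of the time and that verdicts on different functions are independent. Neither figure comes from a real tool, and `chance_all_verdicts_correct` is a hypothetical helper.

```python
def chance_all_verdicts_correct(per_item_accuracy: float, item_count: int) -> float:
    """Probability that every one of `item_count` independent verdicts is right.

    Assumption (illustrative only): each verdict is correct with the same
    probability and verdicts are independent of one another.
    """
    if not 0.0 <= per_item_accuracy <= 1.0:
        raise ValueError("per_item_accuracy must be between 0 and 1")
    if item_count < 0:
        raise ValueError("item_count must be non-negative")
    return per_item_accuracy ** item_count


# Hypothetical codebase sizes, measured in functions scanned.
for functions_scanned in (1, 100, 500):
    chance = chance_all_verdicts_correct(0.99, functions_scanned)
    print(f"{functions_scanned:>4} functions: {chance:.4f}")
```

Under those assumptions, the chance that every verdict across a 500-function codebase is correct falls below one percent. A high per-item accuracy is not the same thing as a guarantee about the whole.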

This is fundamentally different from formal verification, which aims to prove that code satisfies a stated property. Security in that strict sense requires a deterministic approach in which every possible execution path is explored and verified to be free of flaws. AI skips this exhaustive process. It looks for patterns and provides a plausible answer. This is where the phenomenon of hallucination becomes a critical security risk. An AI can confidently assert that a piece of code is secure while missing a subtle buffer overflow or a logic flaw that a careful human reviewer or a deterministic checker would catch.
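
The contrast can be made concrete with a toy example. The sketch below is not formal verification and not how any real scanner works. It pairs a deliberately naive pattern check with a brute-force enumeration over a small, bounded input range, which is the simplest deterministic check available. All names (`write_is_allowed`, `pattern_verdict`, `exhaustive_counterexamples`) and the buffer size are hypothetical.

```python
from typing import List

BUFFER_SIZE = 8

# Source text of the guard under review, as a pattern-based scanner would see it.
GUARD_SOURCE = "def write_is_allowed(index):\n    return 0 <= index <= BUFFER_SIZE\n"


def write_is_allowed(index: int) -> bool:
    # Same logic as GUARD_SOURCE. The bug: '<=' admits index == BUFFER_SIZE,
    # which is one slot past the end of the buffer.
    return 0 <= index <= BUFFER_SIZE


def pattern_verdict(source: str) -> str:
    # Toy stand-in for pattern matching: a bounds comparison is present,
    # so the code "looks" guarded.
    looks_guarded = "0 <=" in source and "BUFFER_SIZE" in source
    return "likely safe" if looks_guarded else "suspicious"


def exhaustive_counterexamples(lowest: int, highest: int) -> List[int]:
    # Deterministic check over a bounded domain: every index the guard
    # allows must be a valid slot in a buffer of BUFFER_SIZE elements.
    return [
        index
        for index in range(lowest, highest + 1)
        if write_is_allowed(index) and not (0 <= index < BUFFER_SIZE)
    ]


print(pattern_verdict(GUARD_SOURCE))        # the pattern check is satisfied
print(exhaustive_counterexamples(-16, 16))  # the enumeration finds the off-by-one
```

The pattern check reports the guard as likely safe, while the enumeration returns the single index that slips past it. Real code has far too many inputs to enumerate this way, which is why model checkers and solver-based tools exist, but the division of labor is the same: one approach recognizes a familiar shape, the other checks every case it is given.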

Furthermore, the adversarial landscape is evolving. Threat actors are using the same LLMs to identify the blind spots of AI-driven security tools. Hackers are now designing exploits specifically to bypass the pattern-recognition capabilities of AI scanners. By crafting code that looks superficially safe to a probabilistic model but contains a hidden malicious payload, attackers can slide through AI-guarded perimeters undetected. In this arms race, the AI is not just the shield; it is also the map that attackers use to find the gaps in the defense.

The Pivot Toward Verification Infrastructure

Recognizing these limitations, the cybersecurity market is undergoing a strategic pivot. The initial hype cycle of simply adding AI to a product is ending, and a new era of verification-centric security is beginning. Investors and enterprises are realizing that the real value lies not in the tool that finds the bug, but in the tool that proves the bug is actually gone. There is a growing demand for a second line of defense: a verification layer that audits the AI's findings.

This shift is fundamentally altering the landscape of mergers and acquisitions in the tech sector. Companies that offer simple AI-powered scanning are seeing their valuations plateau, while firms specializing in formal verification and AI-checking technologies are becoming prime targets for acquisition. The competitive advantage has shifted from who can implement AI the fastest to who can doubt AI the most effectively. The goal is no longer just automation, but the creation of a trust-but-verify architecture where AI handles the initial heavy lifting and a deterministic system or a human expert provides the final validation.
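
One way to picture a trust-but-verify architecture is as a release gate in which the AI's verdict only decides what gets looked at first, and a deterministic check decides what is approved. The sketch below is a minimal illustration under that assumption. The `Finding` fields, the file locations, and the `verifier` callable are all hypothetical and do not describe any particular product.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass(frozen=True)
class Finding:
    location: str         # hypothetical "file:function" label
    ai_verdict: str       # "safe" or "vulnerable", as reported by the AI scanner
    ai_confidence: float  # the scanner's self-reported confidence, 0 to 1


def triage_and_verify(
    findings: List[Finding],
    verifier: Callable[[Finding], bool],
) -> Tuple[List[str], List[str]]:
    """Return (approved, escalated) locations.

    The AI verdict only sets review order (flagged items first).
    Approval depends solely on the deterministic verifier.
    """
    ordered = sorted(findings, key=lambda f: f.ai_verdict == "safe")
    approved: List[str] = []
    escalated: List[str] = []
    for finding in ordered:
        if verifier(finding):
            approved.append(finding.location)
        else:
            escalated.append(finding.location)  # goes to a human reviewer
    return approved, escalated


# Hypothetical scan results and a stand-in verifier that "proves" one item.
scan = [
    Finding("auth.py:login", "safe", 0.98),
    Finding("upload.py:save", "vulnerable", 0.71),
    Finding("util.py:fmt", "safe", 0.99),
]
proven_safe = {"util.py:fmt"}
approved, escalated = triage_and_verify(scan, lambda f: f.location in proven_safe)
print("approved:", approved)
print("escalated:", escalated)
```

Note that `auth.py:login` is escalated even though the scanner rated it safe with high confidence. In this design a confident prediction never substitutes for a passed check.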

As the industry matures, the role of the security professional is evolving from a manual auditor to a high-level orchestrator. The human is no longer required to find every single flaw in the code by hand, but is more essential than ever for verifying the AI's conclusions. The most secure organizations will be those that treat AI as a highly efficient intern: capable of processing vast amounts of data but incapable of signing off on the final security posture of the company.

Ultimately, AI is a powerful accelerant, but it is not a substitute for rigorous engineering. In the realm of cybersecurity, a probabilistic guess is a vulnerability in itself. The only way to maintain a truly secure perimeter is to ensure that the final seal of approval remains a human decision based on verifiable proof, not a statistical likelihood.