A developer tasked with auditing aerospace manufacturing documents recently hit a wall while searching for the maximum wall temperature of a nozzle throat. Despite a comprehensive text-based search across thousands of pages, the system returned zero results. The answer existed, but it was not written in a sentence; it was encoded within a color-coded thermal contour plot. This gap represents a critical failure point in modern retrieval-augmented generation (RAG) systems, where the inability to parse visual data renders a significant portion of technical knowledge invisible to the AI. As industries move toward more complex, image-heavy documentation, the reliance on text-only indexing has become a primary bottleneck for engineering teams.

The Architecture of Unified Vector Spaces

To bridge this gap, Amazon has introduced Amazon Nova Multimodal Embeddings, now available through Amazon Bedrock. Unlike traditional embedding models that treat text and images as separate entities requiring different processing pipelines, this model projects text, images, and multi-page documents into a single, shared vector space. Because every modality lands in the same space, the vector for a text query can be compared directly with the vector for an image, without an intermediate translation layer. The model offers four embedding dimensions: 256, 384, 1024, and 3072. In recent performance evaluations focused on balancing retrieval quality with computational cost, the 1024-dimension configuration offered the best trade-off.
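To make the idea of comparing a text query with an image in one space concrete, here is a minimal sketch. It assumes cosine similarity as the comparison metric (the article does not name one) and uses made-up 4-dimensional toy vectors and hypothetical file names in place of real model output.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same dimension")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def rank_by_similarity(query_vector, index):
    """Return (key, score) pairs sorted from most to least similar."""
    scored = [(key, cosine_similarity(query_vector, vec)) for key, vec in index.items()]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


# Toy stand-ins for real embeddings (hypothetical keys and values).
# In a shared space, image and PDF-page vectors sit next to each other.
toy_index = {
    "thermal_contour.png": [0.9, 0.1, 0.0, 0.0],
    "weld_report.pdf#page=2": [0.0, 1.0, 0.0, 0.0],
}
toy_query = [1.0, 0.0, 0.0, 0.0]  # stands in for an embedded text query

ranked = rank_by_similarity(toy_query, toy_index)
print(ranked[0][0])
```

The point of the sketch is that nothing in the ranking step needs to know whether a stored vector came from text, an image, or a document page.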

Beyond simple dimensionality, the model introduces specialized processing modes to handle the complexity of industrial documents. The `DOCUMENT_IMAGE` mode is specifically designed for pages containing mixed content, ensuring that the visual layout is preserved during the embedding process. To further optimize the retrieval cycle, Amazon implemented asymmetric embedding parameters: `GENERIC_INDEX` for the initial indexing of the knowledge base and `GENERIC_RETRIEVAL` for the actual user queries. This distinction allows the model to optimize for the different mathematical requirements of storing massive datasets versus searching through them. The resulting vectors are stored using Amazon S3 Vectors, leveraging S3's scalable storage to maintain high-performance vector indices.
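The asymmetric setup can be sketched as two request bodies, one for indexing a page image and one for a user query. The values `DOCUMENT_IMAGE`, `GENERIC_INDEX`, `GENERIC_RETRIEVAL`, and the four dimensions come from the description above; the surrounding JSON field names (`schemaVersion`, `taskType`, `singleEmbeddingParams`, `detailLevel`, and so on) reflect one reading of the Bedrock request schema and are assumptions to verify against the current documentation. The helper names are hypothetical.

```python
import base64
import json

# Dimensions listed in the article.
ALLOWED_DIMENSIONS = {256, 384, 1024, 3072}


def _check_dimension(dimension):
    if dimension not in ALLOWED_DIMENSIONS:
        raise ValueError(f"unsupported embedding dimension: {dimension}")


def build_index_request(image_bytes, image_format="png", dimension=1024):
    """Request body for embedding a document page at indexing time.

    Field names are assumed, not confirmed by the article.
    """
    _check_dimension(dimension)
    return {
        "schemaVersion": "nova-multimodal-embed-v1",  # assumed schema tag
        "taskType": "SINGLE_EMBEDDING",               # assumed task name
        "singleEmbeddingParams": {
            "embeddingPurpose": "GENERIC_INDEX",
            "embeddingDimension": dimension,
            "image": {
                "format": image_format,
                "detailLevel": "DOCUMENT_IMAGE",
                "source": {"bytes": base64.b64encode(image_bytes).decode("ascii")},
            },
        },
    }


def build_query_request(query_text, dimension=1024):
    """Request body for embedding a user query at retrieval time."""
    _check_dimension(dimension)
    return {
        "schemaVersion": "nova-multimodal-embed-v1",  # assumed schema tag
        "taskType": "SINGLE_EMBEDDING",               # assumed task name
        "singleEmbeddingParams": {
            "embeddingPurpose": "GENERIC_RETRIEVAL",
            "embeddingDimension": dimension,
            "text": {"truncationMode": "END", "value": query_text},
        },
    }


# Serialize a query body; the dimension must match the one used for indexing.
query_body = json.dumps(build_query_request("maximum wall temperature at nozzle throat"))
```

In a real pipeline the serialized body would be sent through the Bedrock runtime client, and the returned vector written to or queried against the S3 Vectors index. The one constraint this sketch enforces is that both sides use the same dimension, since vectors of different lengths cannot be compared.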

Moving Beyond the OCR Bottleneck

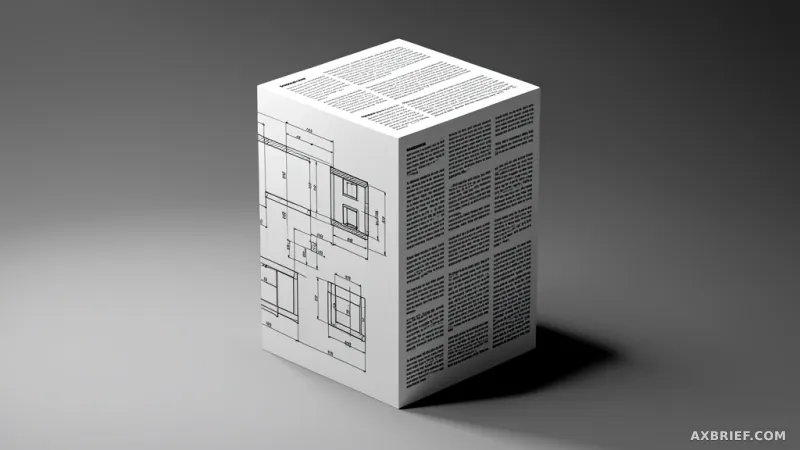

For years, the industry standard for searching images was to rely on Optical Character Recognition (OCR) to extract text, which was then passed to a standard text embedding model. This approach creates a fundamental loss of information because OCR flattens a two-dimensional visual layout into a one-dimensional string of characters. In a technical drawing of a turbo pump bearing, for instance, the critical information often resides in the spatial relationship between a labeled callout and a specific component. OCR may capture the label but discards the spatial context that gives the label its meaning. By bypassing OCR and embedding the image directly, Amazon Nova Multimodal Embeddings encodes the visual geometry and quantitative patterns of a drawing in the vector itself, so they remain available at retrieval time.

This shift in approach was validated using a specialized aerospace manufacturing dataset consisting of 15 technical images and 5 multi-page PDFs. The dataset was intentionally rigorous, featuring CAD drawings, welding inspection reports, Inconel 718 fatigue S-N curves, and thermal degradation reports. The core challenge lay in data that is inherently non-textual, such as torque specification tables drawn directly into blueprints or color-coded heat maps showing peak temperatures in rocket engine nozzles. Even the decision diamonds and color-coded gates within process flowcharts, along with their associated cycle time annotations, were included in the vectorization process to test the model's limits.

To measure the actual impact, the development team compared two distinct pipelines. The first was a text-only pipeline that used Amazon Nova 2 Lite to perform OCR before embedding the resulting text. The second was the multimodal pipeline, which embedded the images directly. Both pipelines stored their vectors in Amazon S3 Vectors and were tested against 26 complex manufacturing queries. Retrieval performance was measured using Recall@K, Mean Reciprocal Rank (MRR), and Normalized Discounted Cumulative Gain (NDCG@K). For the final answer generation, Amazon Nova 2 Lite served as the LLM that synthesized the retrieved information, with an LLM-based judge scoring the accuracy of the final responses. In this evaluation, direct visual embedding significantly outperformed the OCR-to-text pipeline, preserving the quantitative and spatial nuances that text extraction destroys.
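For readers unfamiliar with the three retrieval metrics, the following sketch shows their standard textbook definitions. It is not the evaluation code used in the comparison, and the document IDs and relevance grades in the example are invented for illustration.

```python
import math


def recall_at_k(ranked_ids, relevant_ids, k):
    """Fraction of the relevant items that appear in the top k results."""
    if not relevant_ids:
        return 0.0
    hits = len(set(ranked_ids[:k]) & set(relevant_ids))
    return hits / len(relevant_ids)


def reciprocal_rank(ranked_ids, relevant_ids):
    """1 / rank of the first relevant result, or 0 if none is retrieved."""
    for rank, doc_id in enumerate(ranked_ids, start=1):
        if doc_id in relevant_ids:
            return 1.0 / rank
    return 0.0


def mean_reciprocal_rank(runs):
    """Average reciprocal rank over (ranked_ids, relevant_ids) pairs."""
    if not runs:
        return 0.0
    return sum(reciprocal_rank(r, rel) for r, rel in runs) / len(runs)


def ndcg_at_k(ranked_ids, relevance, k):
    """NDCG@K with graded relevance given as {doc_id: gain}."""
    dcg = sum(
        relevance.get(doc_id, 0) / math.log2(rank + 1)
        for rank, doc_id in enumerate(ranked_ids[:k], start=1)
    )
    ideal_gains = sorted(relevance.values(), reverse=True)[:k]
    idcg = sum(g / math.log2(rank + 1) for rank, g in enumerate(ideal_gains, start=1))
    return dcg / idcg if idcg > 0 else 0.0


# Invented example: the only relevant document is returned in second place.
example_ranking = ["weld_report", "thermal_plot", "sn_curve"]
example_relevant = {"thermal_plot"}
print(recall_at_k(example_ranking, example_relevant, k=1))
print(reciprocal_rank(example_ranking, example_relevant))
```

Recall@K only asks whether the right page was retrieved at all, while MRR and NDCG@K also reward placing it near the top, which matters when only a few retrieved pages are handed to the answer-generating LLM.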

The competitive edge in AI-powered search has shifted from the ability to extract text to the ability to mathematically represent visual context.