Enterprise chatbots are currently hitting a wall. While large language models excel at processing general information, they often struggle to maintain the specific, evolving context required in corporate environments. Someone who asks a question expects the system to remember past interactions, internal organizational hierarchies, and specific project constraints. Trend Micro recently addressed this by building a memory architecture for its internal tool, Trend’s Companion, moving beyond stateless interactions toward a system that treats organizational knowledge as a persistent, queryable asset.

The Architecture of Enterprise Memory

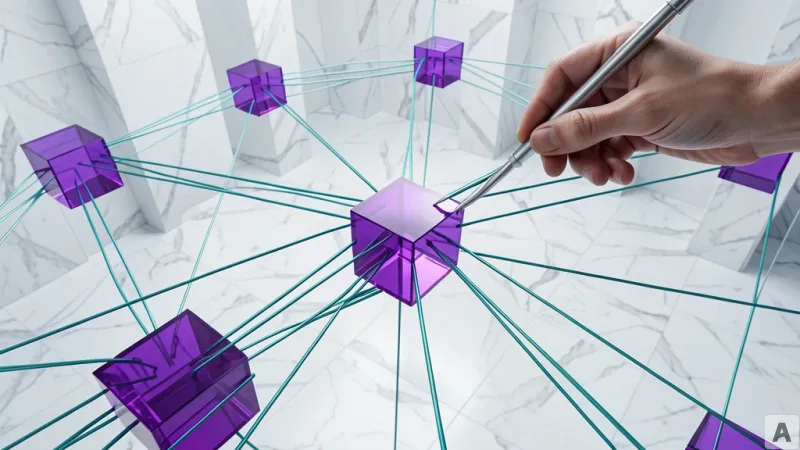

The core of the Trend Micro implementation is a multi-layered stack designed to bridge the gap between unstructured conversation and structured corporate data. At the center is Amazon Bedrock, which orchestrates the workflow and uses Anthropic’s Claude to extract entities and relationships from raw user messages. To manage this data, the team integrated Mem0, a memory management layer that gives AI models long-term recall. The extracted entities are converted into vectors with Amazon Titan Text Embeddings on Amazon Bedrock and stored in two distinct systems: Amazon OpenSearch Service for semantic search and Amazon Neptune for graph-based relationship mapping. This dual-storage approach lets the system handle both the nuance of natural language and the rigid requirements of organizational data structures.
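The write path described above can be sketched in a few lines. This is a minimal illustration, not the team's code or Mem0's API: `MemoryStore`, `extract_triples`, `embed`, and `remember` are hypothetical names, the extractor is a naive sentence splitter standing in for the LLM call, and the embedding is a toy function standing in for a real embedding model. The point is only that each extracted fact is written to both a vector index and a graph.

```python
from dataclasses import dataclass, field


@dataclass
class Triple:
    """One extracted relationship: (subject) -[relation]-> (obj)."""
    subject: str
    relation: str
    obj: str


@dataclass
class MemoryStore:
    """In-memory stand-in for the two backends (hypothetical)."""
    vectors: dict = field(default_factory=dict)  # fact text -> embedding (vector index role)
    edges: set = field(default_factory=set)      # (subject, relation, obj) (graph role)


def extract_triples(message: str) -> list[Triple]:
    """Placeholder for the LLM extraction step.

    Assumes simple 'Subject relation Object.' sentences: first word is the
    subject, last word is the object, everything between is the relation.
    """
    triples = []
    for sentence in message.split("."):
        words = sentence.split()
        if len(words) >= 3:
            triples.append(Triple(words[0], " ".join(words[1:-1]), words[-1]))
    return triples


def embed(text: str, dims: int = 8) -> list[float]:
    """Toy deterministic embedding: bucketed character codes, L2-normalised."""
    vec = [0.0] * dims
    for i, ch in enumerate(text.lower()):
        vec[i % dims] += ord(ch)
    norm = sum(v * v for v in vec) ** 0.5 or 1.0
    return [v / norm for v in vec]


def remember(message: str, store: MemoryStore) -> list[Triple]:
    """Extract facts from a message and write each one to both stores."""
    triples = extract_triples(message)
    for t in triples:
        fact = f"{t.subject} {t.relation} {t.obj}"
        store.vectors[fact] = embed(fact)                # semantic side
        store.edges.add((t.subject, t.relation, t.obj))  # relationship side
    return triples


store = MemoryStore()
remember("Alice manages Bob. Bob works on Apollo.", store)
```

In a real deployment the two dictionaries would be replaced by clients for the vector index and the graph database, and the extractor by a model call; the dual write in `remember` is the part that carries over.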

Bridging Vector Search and Knowledge Graphs

Traditional RAG (Retrieval-Augmented Generation) pipelines often rely solely on vector similarity, which can produce superficial or disconnected answers when complex relationships are involved. The Trend Micro approach instead combines semantic search with graph traversal. When a query enters the system, the architecture performs a parallel lookup: it uses OpenSearch to find relevant semantic context while simultaneously querying Neptune to map precise entity relationships. These results are then passed through Amazon Bedrock Rerank, which scores the relevance of the retrieved data before it is injected into the LLM’s context window. By grounding the model in a structured knowledge graph, the system can disambiguate entities that a standard vector search might conflate, for example by telling apart specific historical or organizational figures. Developers looking to implement similar architectures can find foundational patterns in the AWS Samples GitHub repository and consult the Amazon Neptune documentation for detailed configuration guidance.
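The read path (parallel lookup, merge, rerank) can be illustrated with in-memory stand-ins. Everything here is an assumption made for the sketch: the sample data, the function names, and the scoring are hypothetical, token overlap stands in for both vector similarity and the reranker model, and no AWS service is called. Note how the graph side keeps two people with the same name apart, which a purely text-based match would not.

```python
import re
from concurrent.futures import ThreadPoolExecutor

# Hypothetical sample data standing in for the vector index and the graph.
DOCUMENTS = [
    "Jordan Lee leads the Apollo migration project",
    "Jordan Lee wrote the 2019 onboarding guide",
    "Apollo migration is blocked on a security review",
]
GRAPH = {
    ("Jordan Lee (Engineering)", "leads", "Apollo"),
    ("Jordan Lee (Finance)", "approves budget for", "Apollo"),
    ("Apollo", "blocked by", "Security Review"),
}


def tokens(text):
    """Lowercased word set, punctuation stripped."""
    return set(re.findall(r"\w+", text.lower()))


def semantic_search(query, k=2):
    """Stand-in for vector search: rank documents by Jaccard token overlap."""
    q = tokens(query)
    ranked = sorted(
        DOCUMENTS,
        key=lambda d: len(q & tokens(d)) / len(q | tokens(d)),
        reverse=True,
    )
    return ranked[:k]


def graph_lookup(entity):
    """Stand-in for graph traversal: return every edge touching the entity."""
    return [f"{s} {r} {o}" for s, r, o in sorted(GRAPH) if entity in (s, o)]


def rerank(query, candidates, top_n=3):
    """Stand-in for a reranker model: rescore merged candidates against the query."""
    q = tokens(query)
    return sorted(candidates, key=lambda c: len(q & tokens(c)), reverse=True)[:top_n]


def retrieve(query, entity):
    """Run both lookups concurrently, de-duplicate, then rerank."""
    with ThreadPoolExecutor(max_workers=2) as pool:
        semantic = pool.submit(semantic_search, query)
        graph = pool.submit(graph_lookup, entity)
        merged = list(dict.fromkeys(semantic.result() + graph.result()))
    return rerank(query, merged)


context = retrieve("Who leads Apollo?", "Apollo")
for line in context:
    print(line)
```

The reranked list is what would be placed in the model's context window. Running the two lookups in a thread pool mirrors the parallel lookup described above; with real network-bound clients this is where the latency saving would come from.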

Human-in-the-Loop Validation

To ensure the reliability of this memory, the system incorporates a mandatory verification layer. Every time the AI generates a response, it produces a mapping report that explicitly identifies which memory segments were referenced to construct the answer. This creates a human-in-the-loop feedback mechanism where authorized users must approve or reject the AI’s memory usage. Only verified information is committed to the permanent knowledge base, while rejected data is purged immediately. This process serves as a critical guardrail against AI hallucinations, allowing the organization to curate its own knowledge base iteratively while maintaining strict control over the information the model considers "truth."
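The approve-or-reject flow can be modelled as a small ledger with a pending area and a verified area. This is a sketch under stated assumptions rather than the actual implementation: `MemoryLedger`, its method names, the report shape, and the sample memories are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class MemoryLedger:
    """Hypothetical ledger: memories wait in `pending` until a reviewer decides."""
    pending: dict = field(default_factory=dict)   # memory_id -> text awaiting review
    verified: dict = field(default_factory=dict)  # memory_id -> approved text

    def propose(self, memory_id, text):
        """Stage a newly extracted memory for review."""
        self.pending[memory_id] = text

    def mapping_report(self, answer, used_ids):
        """List which memory segments were referenced to build an answer."""
        known = {**self.pending, **self.verified}
        return {
            "answer": answer,
            "memories": {m: known[m] for m in used_ids if m in known},
        }

    def review(self, memory_id, approved):
        """Commit an approved memory to `verified`; purge a rejected one."""
        if memory_id not in self.pending:
            raise KeyError(f"no pending memory with id {memory_id!r}")
        text = self.pending.pop(memory_id)  # removed from pending either way
        if approved:
            self.verified[memory_id] = text


ledger = MemoryLedger()
ledger.propose("m1", "Apollo launch is scheduled for Q3")
ledger.propose("m2", "Bob reports to Carol")

# The report shows the reviewer exactly which memories backed the answer.
report = ledger.mapping_report("Apollo launches in Q3.", ["m1", "m2"])

ledger.review("m1", approved=True)   # committed
ledger.review("m2", approved=False)  # purged
```

Keeping unreviewed memories in a separate structure means a rejected item never reaches the permanent store, so purging is a single removal rather than a cleanup across systems.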

The future of enterprise AI lies in the ability to curate and verify institutional context rather than simply scaling model parameters.