The developer community is currently recalibrating its expectations for AI agents, moving away from simple chat interfaces toward systems that can actively secure their own infrastructure. This shift was on full display during the Gemini 3 Seoul Hackathon, held on February 28, where a project demonstrating autonomous, self-evolving security architecture captured second place. Amidst a sea of 111 projects from 219 participants, the winning entries signaled a transition from passive AI assistance to active, agentic problem-solving.

1,515 Applicants and the Reality of the Production Sprint

The Gemini 3 Seoul Hackathon was a high-stakes environment that filtered 1,515 initial applicants down to 219 developers with a single goal, the Production Sprint. Participants had to build applications that were not just theoretical but immediately deployable within the Google Cloud Platform (GCP) ecosystem. The second-place winner, Yong-gyu Kim, an AI solutions engineer at GS Neotek, stood out by competing as a solo team. Kim managed the entire stack, from architectural design to infrastructure deployment, within the event's tight time constraints. His result points to a growing trend in which individual engineers, working with advanced LLMs, can execute complex system designs that previously called for an entire security operations team.

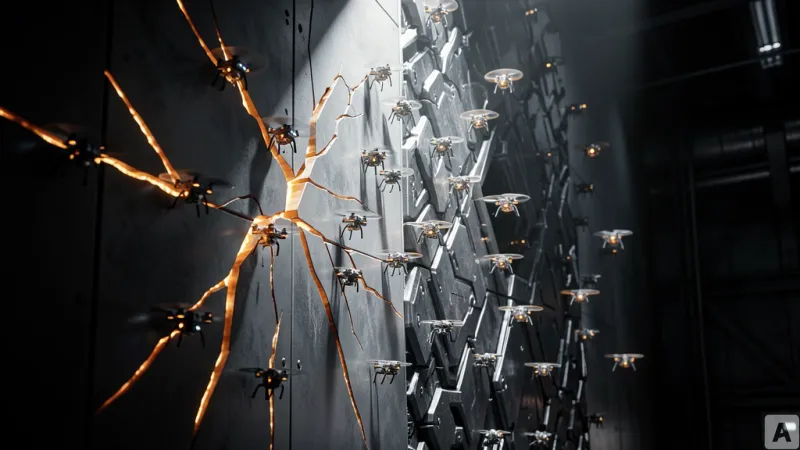

The Infinite Loop of Adversarial and Defensive Agents

Traditional security relies on human-led vulnerability analysis and manual patching, a process that is inherently reactive and slow. Kim's system flips this model by creating an internal feedback loop between two distinct agents: an Adversarial Agent that generates attack scenarios and a Defense Agent that analyzes those scenarios to formulate and deploy countermeasures. By leveraging the long context window and multi-step reasoning capabilities of Gemini 3, the system continuously refines its own security policies. This is not a simple API-driven chatbot; it is a recursive loop in which the AI evaluates its own logic, identifies weaknesses, and iterates on its defense strategy. The architecture shows how modern models can sustain complex, chained logic of the kind that tends to make simpler LLM setups hallucinate or stall.
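The feedback loop described above can be illustrated with a minimal Python sketch. It is not Kim's implementation: the agents are deterministic stubs rather than Gemini 3 calls, and `KNOWN_ATTACKS`, `Policy`, `adversarial_agent`, `defense_agent`, and `evolve` are hypothetical names chosen for illustration. The sketch only shows the shape of the loop, in which each attack round feeds the next defense update until no attack gets through or a round limit is reached.

```python
from dataclasses import dataclass, field

# Hypothetical catalogue of attack names. A real adversarial agent would ask
# the model to generate scenarios instead of reading a fixed list.
KNOWN_ATTACKS = ["sql_injection", "prompt_injection", "path_traversal"]


@dataclass
class Policy:
    """Current security policy: the set of attack types it blocks."""
    blocked: set[str] = field(default_factory=set)


def adversarial_agent(policy: Policy) -> list[str]:
    """Stub: return the attacks that the current policy does not block."""
    return [a for a in KNOWN_ATTACKS if a not in policy.blocked]


def defense_agent(policy: Policy, attacks: list[str]) -> Policy:
    """Stub: return a new policy that also blocks the first reported attack."""
    if not attacks:
        return policy
    return Policy(blocked=policy.blocked | {attacks[0]})


def evolve(policy: Policy, max_rounds: int = 10) -> tuple[Policy, int]:
    """Alternate attack and defense until no attack succeeds or rounds run out.

    Returns the final policy and the number of defense updates applied.
    """
    for round_no in range(max_rounds):
        attacks = adversarial_agent(policy)
        if not attacks:
            return policy, round_no
        policy = defense_agent(policy, attacks)
    return policy, max_rounds


if __name__ == "__main__":
    final_policy, rounds = evolve(Policy())
    print(sorted(final_policy.blocked), rounds)
```

The `max_rounds` cap is a design choice of this sketch: it guarantees the loop terminates even if the adversarial side never runs out of scenarios.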

Orchestrating Agentic Workflows with AI Studio and Antigravity

The technical execution of this project relied on a specialized toolchain designed for rapid iteration. During the planning phase, Kim used Google AI Studio to test inference logic and refine the adversarial scenarios. For the implementation, he integrated Antigravity, a framework designed to manage complex agent-to-agent workflows. The primary challenge in building such a system is keeping the agents from cycling endlessly without making progress. Kim solved this by strictly defining the output of each stage as the input for the next, so that every pass runs as a stable, linear pipeline and the system can keep improving without compromising its integrity. His approach also emphasized building a Minimum Viable Product (MVP) that focuses on core functionality before scaling, a strategy that proved critical in the high-pressure environment of the hackathon.
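One way to picture the "output of each stage is the input for the next" rule is a typed hand-off between stages. The Python sketch below is an assumption-laden illustration, not the project's code and not the Antigravity API: the `Scenario`, `Analysis`, and `Countermeasure` types, the three stage functions, and the hard-coded values they return are all hypothetical stand-ins for model calls.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Scenario:
    target: str
    technique: str


@dataclass(frozen=True)
class Analysis:
    scenario: Scenario
    severity: int  # 1 (low) to 5 (critical)


@dataclass(frozen=True)
class Countermeasure:
    rule: str


def generate(target: str) -> Scenario:
    """Stub for the adversarial stage: propose one attack scenario."""
    return Scenario(target=target, technique="credential_stuffing")


def analyze(scenario: Scenario) -> Analysis:
    """Stub for the analysis stage: attach a fixed severity score."""
    return Analysis(scenario=scenario, severity=4)


def mitigate(analysis: Analysis) -> Countermeasure:
    """Stub for the defense stage: turn the analysis into a rule string."""
    action = "block" if analysis.severity >= 3 else "log"
    s = analysis.scenario
    return Countermeasure(rule=f"{action}:{s.technique}@{s.target}")


# Each entry pairs a stage with the type it is required to return.
STAGES = [(generate, Scenario), (analyze, Analysis), (mitigate, Countermeasure)]


def run_pipeline(seed: str) -> Countermeasure:
    """Run the stages in order, checking every hand-off against its contract."""
    value = seed
    for stage, expected in STAGES:
        value = stage(value)
        if not isinstance(value, expected):
            raise TypeError(f"{stage.__name__} returned {type(value).__name__}")
    return value


if __name__ == "__main__":
    print(run_pipeline("login-api").rule)
```

Because every stage accepts exactly one input type and must return exactly one output type, a malformed result fails at the hand-off instead of propagating into later stages.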

In an era where AI capabilities are evolving weekly, the ability to rapidly prototype and test autonomous workflows has become the primary differentiator for engineering teams.