A support agent on your team answers “When should I get a flu shot?” and the user immediately doubts the reply because the tone or schedule feels American, or because the assistant slips into informal speech with a 60-year-old.

That exact trust gap is what NVIDIA’s Nemotron-Personas-Korea is trying to close: not by translating better, but by giving an agent a Korean identity layer that carries region, occupation, and communication norms into the system prompt.

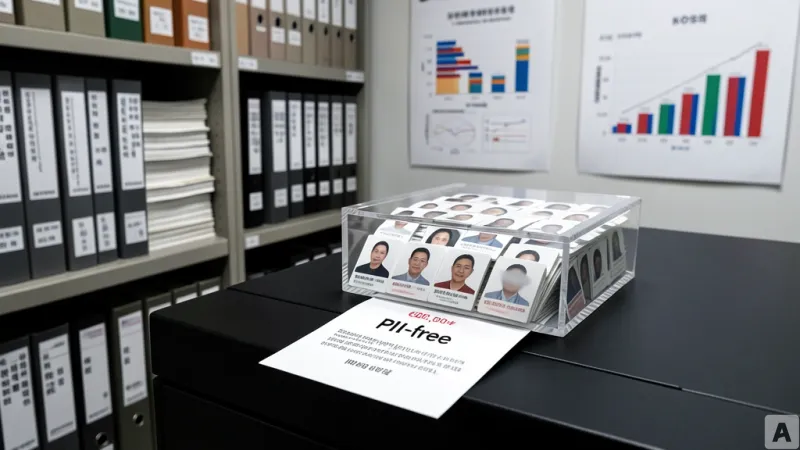

Nemotron-Personas-Korea provides 6 million fully synthetic personas

NVIDIA’s Nemotron-Personas-Korea dataset offers 6 million fully synthetic personas. The personas are generated from seed data sourced from KOSIS (Korean Statistical Information Service) and are grounded in official Korean statistics and data from three institutions: the Supreme Court of Korea, the National Health Insurance Service, and the Korea Rural Economic Institute.

NVIDIA also states that NAVER Cloud contributed seed data and domain expertise during the design stage.

Each persona is described as demographically accurate while containing zero personally identifiable information (PII). The dataset is also designed with Korea’s Personal Information Protection Act (PIPA) in mind.

The release frames Korea as one of the few countries that have published official guidance for synthetic data generation. In that context, it says the dataset follows an approach that puts governance in place when models are grounded in synthetic versions of sensitive data.

So what does that mean in practice? It means you can build an agent that “speaks like it belongs” without exposing real people.

The old default was identity-blind agents; now a persona layer gets inherited

The article contrasts Nemotron-Personas-Korea with a common pattern in earlier agent systems: identity-blind behavior. In those setups, the agent can follow instructions, but the prompt does not lock in who the assistant is “talking as.”

The piece gives concrete examples of why that matters. If a Korean hospital appointment scenario gets handled using American scheduling conventions, or if the assistant mixes informal speech with a 60-year-old patient, the user stops evaluating the answer on accuracy and starts questioning the assistant’s understanding of the person in front of it.

That shift from “is it correct?” to “is it appropriate for me?” is presented as a trust failure, not a cosmetic issue.

What changes here is the mechanism: the persona is loaded into the system prompt, and the agent then inherits the persona’s region, occupation, communication norms, and domain expertise. The article emphasizes that this persona layer is not tied to a specific agent framework. Instead, it works through a well-structured system prompt while remaining grounded in Korean population distributions.

The release also lays out the generation pipeline in more detail than a typical dataset announcement.

For synthetic data generation, Nemotron-Personas-Korea uses NVIDIA’s NeMo Data Designer, an open-source system for synthetic data generation. For statistical grounding, it uses a Probabilistic Graphical Model released under the Apache-2.0 license.

For Korean narrative generation, the pipeline uses Gemma-4-31B, described as a 31B-parameter model from Google’s Gemma family. For population data, it references KOSIS (2020–2026 releases), and for name distributions it references data from the Supreme Court.
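To picture what statistical grounding with a probabilistic graphical model means, here is a toy sketch of ancestral sampling: draw a region first, then draw an occupation conditioned on that region. The probability tables, field names, and function names are all invented for illustration. They are not KOSIS figures, and this is not NVIDIA’s actual pipeline.

```python
import random

# Toy probabilities invented for illustration only; NOT real KOSIS statistics.
REGION_PROBS = {"Seoul": 0.5, "Busan": 0.3, "Jeju": 0.2}

# Conditional table P(occupation | region), also invented.
OCCUPATION_GIVEN_REGION = {
    "Seoul": {"office worker": 0.7, "nurse": 0.3},
    "Busan": {"office worker": 0.5, "nurse": 0.2, "fisher": 0.3},
    "Jeju": {"farmer": 0.5, "nurse": 0.2, "tour guide": 0.3},
}


def sample_categorical(probs, rng):
    """Draw one key from a {value: probability} table."""
    values = list(probs)
    weights = [probs[v] for v in values]
    return rng.choices(values, weights=weights, k=1)[0]


def sample_persona_skeleton(rng):
    """Ancestral sampling: parent variable first, then the child given the parent."""
    region = sample_categorical(REGION_PROBS, rng)
    occupation = sample_categorical(OCCUPATION_GIVEN_REGION[region], rng)
    return {"region": region, "occupation": occupation}


rng = random.Random(0)  # fixed seed so the sketch is reproducible
skeletons = [sample_persona_skeleton(rng) for _ in range(1000)]
print(skeletons[:3])
```

Because the occupation is drawn from a table indexed by region, a combination that the tables give zero probability (a Seoul fisher, in this toy setup) is never generated. A narrative model would then write the persona text on top of a skeleton like this.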

So what’s actually different? The dataset isn’t just “more Korean text”; it’s a structured persona substrate that the agent can inherit at runtime.

Persona filtering to inference wiring in about 20 minutes

The tutorial portion of the article frames the deployment path as fast: converting synthetic personas into a deployed Korean agent takes “about 20 minutes.” It starts with dataset loading and exploration, then moves into how records are used.

The key idea is that each record contains both structured demographic fields and rich natural-language persona narratives. The structured fields include things like age, region, and occupation, while the narratives provide the conversational and behavioral context.

Filtering is performed using fields such as occupation, region, and age, and the article says combinations are possible. It gives an explicit example: building a public health agent for Korea.

It then shows how you can narrow further by selecting slices such as “only Jeju-based health workers,” and it adds further dimensions like education level and life stage. The claim is that with enough dataset scale, you can find highly specific slices.
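A minimal sketch of that kind of combined filtering, using plain Python over in-memory records. The records and the field names (`persona_id`, `age`, `region`, `occupation`, `education_level`) are assumptions made for this example; the real dataset schema may differ.

```python
# Hypothetical records: the field names below are assumptions for illustration,
# not the dataset's documented schema.
PERSONAS = [
    {"persona_id": 1, "age": 34, "region": "Jeju", "occupation": "nurse", "education_level": "bachelor"},
    {"persona_id": 2, "age": 61, "region": "Jeju", "occupation": "farmer", "education_level": "high school"},
    {"persona_id": 3, "age": 45, "region": "Seoul", "occupation": "nurse", "education_level": "bachelor"},
    {"persona_id": 4, "age": 52, "region": "Jeju", "occupation": "public health officer", "education_level": "master"},
]

HEALTH_OCCUPATIONS = {"nurse", "public health officer"}


def filter_personas(records, *, region=None, occupations=None,
                    min_age=None, max_age=None, education_level=None):
    """Return records matching every criterion that was supplied (None means 'any')."""
    matches = []
    for record in records:
        if region is not None and record["region"] != region:
            continue
        if occupations is not None and record["occupation"] not in occupations:
            continue
        if min_age is not None and record["age"] < min_age:
            continue
        if max_age is not None and record["age"] > max_age:
            continue
        if education_level is not None and record["education_level"] != education_level:
            continue
        matches.append(record)
    return matches


# "Only Jeju-based health workers", then narrowed further by age.
jeju_health_workers = filter_personas(PERSONAS, region="Jeju", occupations=HEALTH_OCCUPATIONS)
senior_jeju_health_workers = filter_personas(
    PERSONAS, region="Jeju", occupations=HEALTH_OCCUPATIONS, min_age=50
)
print([p["persona_id"] for p in jeju_health_workers])
print([p["persona_id"] for p in senior_jeju_health_workers])
```

Each added criterion shrinks the slice, which is why dataset scale matters: a narrow combination still needs enough matching records to be useful.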

At that point, the tutorial explains how the agent identity is assembled. It says the structured fields (name, region, occupation, and skills) are fed into the agent as identity inputs. On top of that, it overlays behavioral instructions and a task scope, producing an agent that reasons “like a Korean expert for a specific role and region.”
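The layering described here (identity fields, then behavioral instructions, then task scope) could be sketched roughly as follows. The function name `build_system_prompt`, the prompt wording, and the sample persona are all hypothetical; the article does not publish an exact template.

```python
def build_system_prompt(persona, behavior_rules, task_scope):
    """Compose a system prompt from three layers: identity, behavior, scope."""
    # Layer 1: identity inputs taken from the structured persona fields.
    identity = (
        f"You are {persona['name']}, a {persona['occupation']} "
        f"based in {persona['region']}, South Korea. "
        f"Your skills: {', '.join(persona['skills'])}."
    )
    # Layer 2: behavioral instructions, one bullet per rule.
    behavior = "\n".join(f"- {rule}" for rule in behavior_rules)
    # Layer 3: the task scope that bounds what the agent should handle.
    return f"{identity}\n\nBehavior:\n{behavior}\n\nTask scope: {task_scope}"


# Invented persona record for illustration; not taken from the dataset.
persona = {
    "name": "Kim Minji",
    "region": "Jeju",
    "occupation": "public health nurse",
    "skills": ["vaccination counseling", "chronic disease education"],
}

prompt = build_system_prompt(
    persona,
    behavior_rules=[
        "Use formal, polite speech with older adults.",
        "Follow Korean scheduling and administrative conventions.",
    ],
    task_scope="Answer general public health questions for residents.",
)
print(prompt)
```

Keeping the three layers as separate arguments means the persona can change without touching the behavior rules, and the other way around.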

Inference connection is described as having three options, depending on the setup. The article also states that domain switching uses the same approach: you change the persona filter and task scope, and the agent’s behavior shifts accordingly.

To make that concrete, it provides examples of domain swaps across different persona types. A finance (금융) persona becomes a retail banking advisory agent. An education (교육) persona becomes a tutoring assistant. A civil servant (공무원) persona becomes a government public health services agent.
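One way to express these swaps is a small lookup table that pairs each domain with a persona filter and a task scope. This is a sketch under assumptions: the `DomainProfile` class, the keyword matching on a Korean `occupation` string, and the sample records are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DomainProfile:
    occupation_keyword: str  # substring to look for in the occupation field
    task_scope: str          # what the resulting agent is allowed to do


# The three swaps from the text; the keywords and scope wording are illustrative.
DOMAIN_PROFILES = {
    "finance": DomainProfile("금융", "Retail banking advisory"),
    "education": DomainProfile("교육", "Tutoring assistance"),
    "public_health": DomainProfile("공무원", "Government public health services"),
}

# Invented records; assumes occupations are stored as Korean text.
PERSONAS = [
    {"persona_id": 10, "occupation": "금융 상담원"},
    {"persona_id": 11, "occupation": "교육 강사"},
    {"persona_id": 12, "occupation": "보건소 공무원"},
]


def configure_agent(records, domain):
    """Pick the first persona matching the domain and pair it with its task scope."""
    profile = DOMAIN_PROFILES[domain]
    for record in records:
        if profile.occupation_keyword in record["occupation"]:
            return {"persona": record, "task_scope": profile.task_scope}
    raise LookupError(f"no persona found for domain {domain!r}")


banking_agent = configure_agent(PERSONAS, "finance")
health_agent = configure_agent(PERSONAS, "public_health")
print(banking_agent["persona"]["persona_id"], banking_agent["task_scope"])
print(health_agent["persona"]["persona_id"], health_agent["task_scope"])
```

Switching domains then touches only the lookup key; the selection and prompt-building code stays the same.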

The tutorial includes an example tied directly to the earlier trust problem: answering “When should I get a flu shot?” with and without persona grounding. The article argues that persona grounding goes beyond translation by providing context that users can trust.
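In terms of what gets sent to the model, the two variants differ only in whether a system message carries the persona. A minimal sketch, assuming the common `role`/`content` chat message format; the system prompt text here is invented for illustration.

```python
QUESTION = "When should I get a flu shot?"

# Invented persona-grounded system prompt; the real tutorial's wording may differ.
GROUNDED_SYSTEM_PROMPT = (
    "You are a public health worker based in Jeju, South Korea. "
    "Use formal, polite speech with older adults and follow Korean "
    "healthcare scheduling conventions."
)


def build_messages(question, system_prompt=None):
    """Return chat messages; the system message is included only when grounding is used."""
    messages = []
    if system_prompt:
        messages.append({"role": "system", "content": system_prompt})
    messages.append({"role": "user", "content": question})
    return messages


ungrounded = build_messages(QUESTION)
grounded = build_messages(QUESTION, GROUNDED_SYSTEM_PROMPT)

print(len(ungrounded), len(grounded))
```

The user question is identical in both cases, so any difference in tone or scheduling assumptions in the replies can be attributed to the persona layer.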

Finally, it describes practical deployment routes.

One path connects a persona-grounded prompt to an agent framework and configures an always-on agent using NemoClaw. The article describes NemoClaw as an NVIDIA open-source reference stack for always-on agents, running in the NVIDIA OpenShell sandbox and supporting everything from RTX PC setups to DGX Spark.

It also says you can serve production inference using NVIDIA NIM or by calling NVIDIA APIs directly.
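As a rough sketch of the inference call, the snippet below builds a chat completion request using only the Python standard library. It assumes an OpenAI-compatible `/chat/completions` route, which NIM exposes. The base URL, API key, and model name are placeholders, and the request is built but not sent.

```python
import json
import urllib.request


def build_chat_request(base_url, api_key, model, messages):
    """Build (but do not send) a POST request for an OpenAI-compatible chat endpoint."""
    payload = {"model": model, "messages": messages, "temperature": 0.2}
    return urllib.request.Request(
        url=f"{base_url.rstrip('/')}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


def send_chat_request(request):
    """Send the request and return the assistant's reply text."""
    with urllib.request.urlopen(request) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]


request = build_chat_request(
    base_url="http://localhost:8000/v1",  # placeholder: a locally hosted endpoint
    api_key="YOUR_API_KEY",               # placeholder credential
    model="your-model-name",              # placeholder: use the model you actually deploy
    messages=[
        {"role": "system", "content": "You are a public health worker based in Jeju, South Korea."},
        {"role": "user", "content": "When should I get a flu shot?"},
    ],
)
print(request.get_method(), request.full_url)
# reply = send_chat_request(request)  # uncomment once a real endpoint and key are configured
```

Because the request shape is the same for a self-hosted endpoint and a hosted API, moving between the two should mostly be a matter of changing `base_url` and the credential.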

Nemotron-Personas-Korea is positioned as the latest addition to the Nemotron-Personas Collection, and the article notes that the same pipeline can handle other countries and regions: USA, Japan, India, Singapore (AI Singapore), Brazil (WideLabs), and France (Pleias). The implication is that for multi-country services, you can mix personas rather than rebuilding the system from scratch.

The piece closes with an offline developer opportunity. NVIDIA Nemotron Developer Days will be held in Seoul on April 21–22, 2026. It says the event includes sessions on sovereign AI and open models, plus a hands-on hackathon where participants build domain-specific Korean agents using Nemotron-Personas-Korea. It also notes that people can attend in person or via livestream, and that build outcomes may be featured in future tutorials.

The core of the dataset, as presented here, is that it plugs directly into code by injecting Korean demographic-grounded synthetic personas into the system prompt, aligning the agent’s tone, context, and expertise at the same time.

That’s the shift: Korean agent quality stops being a prompt-writing exercise and becomes a persona-grounded pipeline you can deploy quickly.