A shop manager at a small-scale CNC machining facility begins their week the same way every Monday: staring at a stack of technical drawings. For every single quote request, they must manually print blueprints, verify dimensions with calipers, and cross-reference tool availability. This ritual consumes between 30 and 60 minutes per drawing, effectively stealing 5 to 20 hours of high-value engineering time every week. In an industry where precision is measured in microns, the administrative overhead of determining whether a part can even be manufactured has become a critical bottleneck. This friction is not a failure of skill, but a failure of tooling, as the bridge between a CAD file and a production report remains stubbornly manual.

The Architecture of MachinaCheck

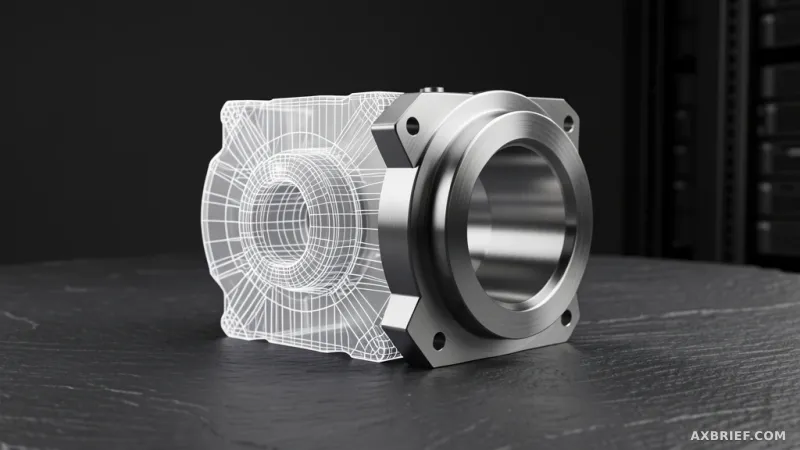

To eliminate this inefficiency, MachinaCheck was built as a multi-agent AI system designed specifically for manufacturability analysis. The workflow turns a grueling manual process into a 30-second automated sequence. A user uploads a STEP file, the industry-standard exchange format for CAD data, and enters the material, tolerances, and thread specifications. The system then generates a comprehensive manufacturability report, automating everything from geometry interpretation to tool availability checks. This reduces the risk of human error during the quoting phase and smooths the transition from design to machine.

The physical foundation of this system is the AMD Instinct MI300X, a data center GPU that provides a massive 192GB of HBM3 high-bandwidth memory. This memory capacity is the enabling factor for the entire operation, allowing the system to host large language models locally without relying on external cloud servers. With a memory bandwidth of 5.3 TB/s, the hardware ensures that the model can process complex manufacturing queries with minimal latency. Specifically, MachinaCheck utilizes Qwen 2.5 7B Instruct, an open-source model developed by Alibaba, running entirely within the factory's own closed network. This setup functions as a digital security vault, ensuring that sensitive operational data never leaves the premises.

The Deterministic Twist in AI Reasoning

While many enterprises attempt to solve manufacturing problems by feeding images or PDFs into a vision-capable LLM, MachinaCheck takes a fundamentally different approach to the problem of AI hallucinations. In CNC machining, a 0.1mm error is not a minor glitch; it is a scrapped part. To keep every number in the report exact, the developers decoupled mathematical extraction from linguistic reasoning.

Instead of asking an LLM to look at a drawing and guess the dimensions, the system uses CadQuery, a Python library for programmatic CAD model manipulation. CadQuery extracts the exact mathematical geometry directly from the STEP file. This process is entirely deterministic; it does not guess, it calculates. Similarly, the verification of tool availability is handled through pure Python logic and direct database queries rather than LLM inference. The LLM is reserved for a specific, high-value role: synthesizing the deterministic data into a professional, domain-aware report and providing expert-level judgment based on manufacturing knowledge.
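The two deterministic steps described above can be sketched roughly as follows. This is a minimal illustration, not MachinaCheck's actual code: the function names, the `tools` table schema, and the 0.001 mm matching tolerance are all assumptions made for the example, and the geometry function reads only the first solid in the file.

```python
import sqlite3


def extract_geometry(step_path: str) -> dict:
    """Read exact geometry from a STEP file with CadQuery (no LLM involved)."""
    # Imported lazily so the tool check below can run without CadQuery installed.
    import cadquery as cq

    model = cq.importers.importStep(step_path)
    solid = model.val()  # first shape in the file (assumption: single-part file)
    box = solid.BoundingBox()
    return {
        # Units are whatever the STEP file stores, typically millimetres.
        "bounding_box": (box.xlen, box.ylen, box.zlen),
        "volume": solid.Volume(),
        # Cylindrical faces are a rough proxy for holes, bosses, and fillets.
        "cylindrical_faces": model.faces("%CYLINDER").size(),
    }


def find_missing_tools(conn: sqlite3.Connection, required_diameters_mm: list) -> list:
    """Return the required tool diameters with no in-stock match in the inventory."""
    missing = []
    for diameter in required_diameters_mm:
        row = conn.execute(
            "SELECT 1 FROM tools "
            "WHERE ABS(diameter_mm - ?) < 0.001 AND quantity > 0 LIMIT 1",
            (diameter,),
        ).fetchone()
        if row is None:
            missing.append(diameter)
    return missing


def demo_inventory() -> sqlite3.Connection:
    """Build a tiny in-memory inventory (hypothetical schema and data)."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE tools (name TEXT, diameter_mm REAL, quantity INTEGER)")
    conn.executemany(
        "INSERT INTO tools VALUES (?, ?, ?)",
        [("drill 5.0", 5.0, 3), ("drill 6.8", 6.8, 0), ("end mill 10", 10.0, 2)],
    )
    return conn
```

With the demo inventory, asking for 5.0, 6.8, and 8.5 mm tools reports 6.8 (out of stock) and 8.5 (not listed) as missing. Both functions return the same answer for the same input every time, which is the property the article relies on.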

This hybrid approach—combining deterministic geometry extraction with probabilistic linguistic synthesis—ensures that the final output is grounded in physical reality. The system is built upon LangChain for agent orchestration and FastAPI for the web interface, creating a robust pipeline where the AI acts as the editor rather than the calculator. This architectural choice solves the primary trust issue in industrial AI: the fear that a model might confidently invent a dimension that does not exist.
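One way to keep the model in the "editor" role is to hand it the pre-computed facts as structured data and instruct it not to produce numbers of its own. The sketch below only builds a request body in the chat-completions format that vLLM's OpenAI-compatible server accepts; it bypasses LangChain for brevity, and the function name, prompt wording, and fact fields are hypothetical rather than taken from MachinaCheck.

```python
import json


def build_report_request(geometry: dict, missing_tools: list, material: str) -> dict:
    """Package deterministic results into a chat-completions request body."""
    facts = {
        "material": material,
        "geometry": geometry,
        "missing_tools": missing_tools,
    }
    system_prompt = (
        "You are a CNC manufacturability reviewer. Use only the numbers in the "
        "FACTS JSON. Do not invent, estimate, or recompute any dimension."
    )
    user_prompt = (
        "FACTS:\n"
        + json.dumps(facts, indent=2, sort_keys=True)
        + "\n\nWrite a short manufacturability report for the shop manager."
    )
    return {
        "model": "Qwen/Qwen2.5-7B-Instruct",
        "temperature": 0.0,  # keep the wording as repeatable as possible
        "messages": [
            {"role": "system", "content": system_prompt},
            {"role": "user", "content": user_prompt},
        ],
    }
```

The resulting dictionary could be sent as JSON to the server's `/v1/chat/completions` endpoint. Because every dimension arrives in the prompt verbatim, any number in the report that is absent from the facts can be flagged by a simple post-check.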

The model is served with vLLM, a high-throughput inference engine, running on AMD's ROCm platform. The launch command is short, and its memory setting leaves significant headroom for future scaling.

```bash
# vLLM inference environment setup example
python -m vllm.entrypoints.openai.api_server --model Qwen/Qwen2.5-7B-Instruct --gpu-memory-utilization 0.5
```

In the current configuration, the 0.5 memory-utilization setting caps vLLM at approximately 96GB of the available 192GB of VRAM, leaving ample room for context expansion or the integration of larger models. Inference latency remains consistently under 3 seconds, providing a near-instantaneous response for the end user. Because the hardware headroom is so significant, the system can be upgraded to the Qwen 2.5 72B model without requiring any new physical infrastructure, although that model's 16-bit weights alone occupy roughly 144GB, so the memory-utilization setting would have to be raised. This provides a clear path for increasing the sophistication of the AI's reasoning capabilities as the factory's needs evolve.
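The headroom figures in this paragraph follow from simple arithmetic, sketched below. It assumes nominal parameter counts (7 and 72 billion), 16-bit weights at 2 bytes per parameter, and decimal gigabytes; it ignores the KV cache and activation memory, so the real requirement is somewhat higher than the weight footprint alone.

```python
TOTAL_VRAM_GB = 192        # MI300X HBM3 capacity
BYTES_PER_PARAM_FP16 = 2   # assumption: unquantized 16-bit weights


def vllm_budget_gb(gpu_memory_utilization: float) -> float:
    """Memory vLLM may claim for weights plus KV cache at a given setting."""
    return TOTAL_VRAM_GB * gpu_memory_utilization


def weight_footprint_gb(params_billion: float) -> float:
    """Approximate size of the model weights alone, in decimal GB."""
    return params_billion * BYTES_PER_PARAM_FP16


def min_utilization_for(params_billion: float) -> float:
    """Smallest utilization fraction at which the weights alone would fit."""
    return weight_footprint_gb(params_billion) / TOTAL_VRAM_GB


print(vllm_budget_gb(0.5))        # 96.0 GB budget at the article's setting
print(weight_footprint_gb(7))     # 14 GB for the 7B model
print(weight_footprint_gb(72))    # 144 GB for the 72B model
print(min_utilization_for(72))    # 0.75, before any KV cache
```

Under these assumptions the 72B model fits on a single card, but only with `--gpu-memory-utilization` set well above 0.75 so that some memory remains for the KV cache.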

This shift toward on-premise, high-performance computing marks a turning point for industrial digital transformation, where data sovereignty and deterministic accuracy take precedence over the convenience of the cloud.