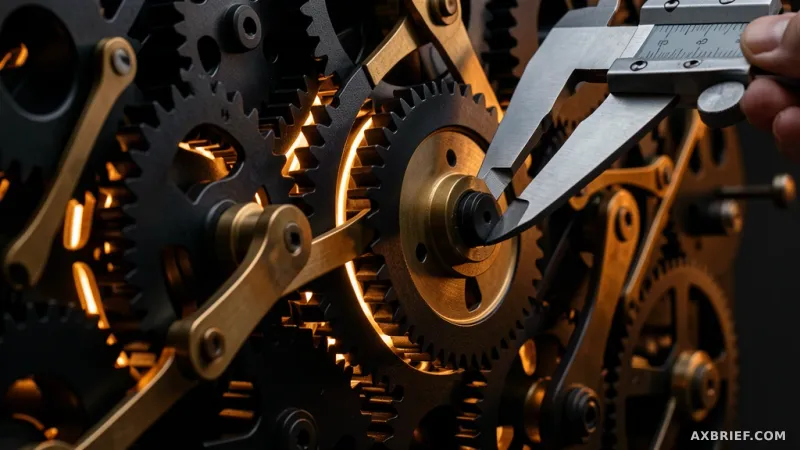

For decades, the final word in industrial engineering has been the physical prototype. Whether deploying a fleet of robotic arms or designing a new assembly line, engineers lived in a state of high-stakes tension, knowing that the only true validation happened on the factory floor. A single millimeter of misalignment or a slight timing error in a robot's path could lead to catastrophic collisions, costly downtime, and weeks of redesign. This reliance on physical testing created a bottleneck where innovation was limited by the speed of hardware installation.

The OpenUSD Standard and the End of Data Fragmentation

The primary obstacle to a simulation-first approach has not been a lack of computing power, but a fundamental crisis of data interoperability. Manufacturing relies on a fragmented ecosystem of Computer-Aided Design (CAD) tools, each with its own proprietary format. When an engineer moves a 3D asset from a design tool to a simulation platform, critical metadata, geometric precision, and physical properties are often lost in translation. This forces teams to rebuild assets from scratch for every new stage of the pipeline, introducing errors and wasting thousands of engineering hours.

To break this cycle, the industry is pivoting toward OpenUSD, an open data standard for 3D scenes that allows different tools to collaborate on a single source of truth. Building on this foundation, SimReady introduces a standardized framework for 3D assets, defining the physical attributes that allow an object to behave realistically across rendering, simulation, and AI training pipelines. This ecosystem is anchored by NVIDIA Omniverse, a platform that provides the virtual space where these assets can be deployed and tested. With these tools, companies can generate synthetic data accurate enough to train AI models before a single piece of hardware is bolted to the floor.
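To make the idea of a simulation-ready asset concrete, here is a minimal sketch of the kind of check a pipeline might run before an asset enters simulation. The field names (`mass_kg`, `has_collision_mesh`, `friction_coefficient`) and the `missing_physics` helper are hypothetical and chosen for illustration; they are not taken from the SimReady or OpenUSD specifications.

```python
from dataclasses import dataclass
from typing import List, Optional


# Hypothetical asset record; field names are illustrative assumptions,
# not the SimReady or OpenUSD schema.
@dataclass
class Asset:
    name: str
    mass_kg: Optional[float] = None
    has_collision_mesh: bool = False
    friction_coefficient: Optional[float] = None


def missing_physics(asset: Asset) -> List[str]:
    """Return the physical attributes a simulator would still need."""
    missing = []
    if asset.mass_kg is None or asset.mass_kg <= 0:
        missing.append("mass_kg")
    if not asset.has_collision_mesh:
        missing.append("has_collision_mesh")
    if asset.friction_coefficient is None or asset.friction_coefficient < 0:
        missing.append("friction_coefficient")
    return missing


# A geometry-only export, as might come out of a lossy CAD conversion.
cad_export = Asset(name="conveyor_roller")

# The same part with physical attributes attached (values are made up).
sim_asset = Asset(
    name="conveyor_roller",
    mass_kg=4.2,
    has_collision_mesh=True,
    friction_coefficient=0.6,
)

print(missing_physics(cad_export))
print(missing_physics(sim_asset))
```

The geometry-only export fails all three checks, while the enriched asset passes. That gap is the one a shared asset standard is meant to close: the physical attributes travel with the asset instead of being rebuilt at each stage.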

From Static Models to Physical AI and Real-Time Intelligence

The shift from traditional CAD to an Omniverse-driven workflow changes the fundamental economics of manufacturing. The difference is no longer just about seeing a 3D model; it is about running, in a virtual environment, the exact same firmware that will eventually run on the physical robot. ABB Robotics has operationalized this by integrating NVIDIA Omniverse libraries into RobotStudio HyperReality. This integration allows over 60,000 engineers to train robots and test part tolerances in a virtual mirror of the production line. The impact is quantifiable: product introduction cycles have dropped by up to 50%, commissioning time has been cut by up to 80%, and total equipment lifecycle costs have decreased by 30% to 40%.

This acceleration extends beyond robotics into the very physics of product design. JLR uses the Neural Concept Design Lab to overhaul its aerodynamic simulations. In a traditional workflow, validating a design could take four hours of compute time; now, that process is compressed into a single minute. With 95% of these aerodynamic workloads running on NVIDIA GPUs, engineers can iterate on a design in near real time rather than a few times a day.
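The arithmetic behind the four-hours-to-one-minute figure can be sketched as follows. The eight-hour working day and the assumption that validation runs execute back to back, one at a time, are illustrative choices and do not come from JLR or Neural Concept.

```python
def speedup(before_minutes: float, after_minutes: float) -> float:
    """Ratio of the old validation time to the new one."""
    return before_minutes / after_minutes


def runs_per_day(minutes_per_run: float, hours_available: float = 8.0) -> int:
    """Whole validation runs that fit in one day, run sequentially.

    The 8-hour default is an assumption made for illustration.
    """
    return int(hours_available * 60 // minutes_per_run)


before = 4 * 60  # four hours of compute, in minutes
after = 1        # one minute

print(speedup(before, after))  # 240.0
print(runs_per_day(before))    # 2
print(runs_per_day(after))     # 480
```

Under these assumptions, a 240x speedup takes an engineer from two validated designs per day to several hundred.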

However, the most significant evolution occurs when simulation meets real-time operational data. Once a factory is live, the challenge shifts from prediction to optimization. Tulip Interfaces addresses this by combining factory camera feeds, sensor data, and operational context through the NVIDIA Metropolis VSS Blueprint. By integrating NVIDIA Cosmos Reason, a vision-language model capable of interpreting real-time video and behavior, the system transforms raw footage into structured intelligence. For Terex, this convergence of physical AI and real-time analysis resulted in a 3% increase in production yield and a 10% reduction in rework rates.

This trajectory suggests a future where the boundary between the digital twin and the physical asset disappears entirely, turning the factory into a living, self-optimizing organism.