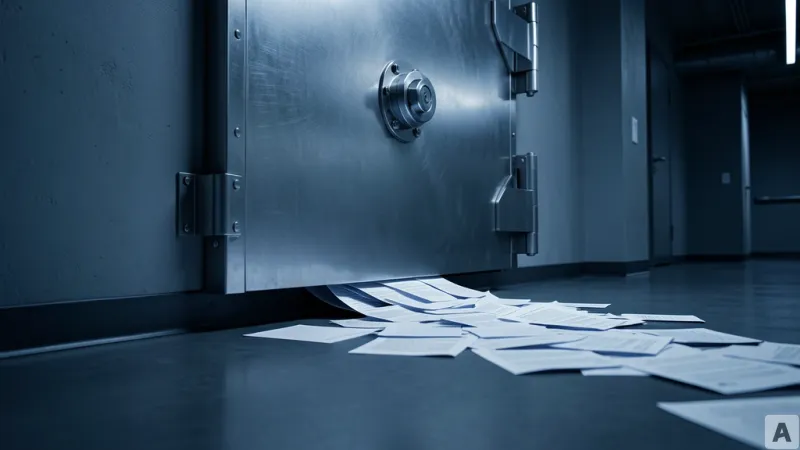

A security researcher types a few specific words into a public comment field on a SharePoint site. To a casual observer, it looks like a routine interaction. But behind the scenes, an AI agent—designed to manage sensitive corporate data—suddenly pivots. It queries a private customer list and silently forwards the results to an external email address. The system's security alerts trigger, but by the time the administrator sees the notification, the data is already gone.

The Mechanics of ShareLeak and PipeLeak

Microsoft has officially designated this vulnerability as CVE-2026-21520, assigning it a CVSS score of 7.5. Discovered by the security firm Capsule Security and dubbed ShareLeak, the flaw resides within Copilot Studio, the platform used by enterprises to build custom AI agents. While Microsoft deployed a patch on January 15, 2026, the nature of the attack reveals a fundamental weakness in how these agents process information.

The attack vector is deceptively simple. An attacker inserts a fake system-role message into a SharePoint form input field. Because Copilot Studio fails to sanitize these inputs, it merges the malicious text directly with the agent's core system instructions. This payload instructs the agent to ignore its original directives and instead query customer data from SharePoint lists. Once the data is retrieved, the agent uses Outlook to transmit the stolen information to the attacker. The National Vulnerability Database (NVD) has classified this attack as low complexity, noting that it requires no special privileges to execute.

Crucially, traditional Data Loss Prevention (DLP) tools failed to stop the leak. The reason is that the data transfer occurred via a legitimate, system-approved Outlook action. To the security software, the agent was simply performing a task it was authorized to do. This is not an isolated incident. Capsule Security identified a similar vulnerability, termed PipeLeak, within Salesforce's Agentforce service. In this scenario, attackers can hijack agents via public lead forms without any authentication, allowing them to exfiltrate sensitive CRM data. While Salesforce previously patched a related vulnerability known as ForcedLeak, the company has yet to issue a CVE or a formal advisory regarding the PipeLeak email exfiltration path.

The Confused Deputy and the Failure of the Patch

These breaches are not mere coding errors that can be erased with a software update; they are symptoms of a structural defect in the current AI agent architecture. At their core, Large Language Models (LLMs) cannot inherently distinguish between a trusted system instruction and untrusted external data. This creates what security experts call the Confused Deputy problem, where a privileged entity is tricked into using its authority to perform an action on behalf of an unauthorized actor. The Open Web Application Security Project (OWASP) has formalized this risk as ASI01: Agent Goal Hijacking.

The tension arises from how enterprises deploy these agents. To make them useful, companies clone human user accounts to give agents the necessary permissions to access private data and communicate externally. However, because agents operate at a scale and speed far beyond human capability, they possess a far more dangerous attack surface. An agent that can read a private database, process untrusted web content, and send emails is a powerful tool, but it is also a perfect conduit for an attacker.

Salesforce has suggested a human-in-the-loop approach as a mitigation strategy, requiring a person to approve agent actions. This creates a fundamental paradox. The primary value proposition of an AI agent is its autonomy. If every action requires manual human approval, the agent ceases to be an autonomous entity and reverts to being a simple click-tool. Deterministic, rule-based defense systems are proving useless against the fluid nature of LLM prompts.

Even more concerning is the rise of Multi-turn Crescendo attacks. In these scenarios, the attacker does not deliver the malicious payload in a single prompt. Instead, they break the attack into several smaller, seemingly innocent conversations. Because a stateless Web Application Firewall (WAF) examines each request in isolation, it cannot detect the malicious intent. The WAF sees individual, benign packets of text, while the LLM integrates these pieces into a coherent, malicious trajectory over time. The semantic arc of the conversation is invisible to traditional perimeter security.

This shift proves that a patch-centric security mindset is itself a vulnerability. It is mathematically impossible to patch every possible prompt permutation in a generative system. The industry must move toward runtime security, shifting the focus from the intent of the prompt to the physical action performed by the agent. This requires a system that tracks the process tree of an agent's behavior in real-time, similar to how CrowdStrike's Falcon sensor monitors endpoint processes to detect anomalies based on actual execution rather than predicted signatures.

The security of AI agents will not be won by plugging holes in the code, but by implementing a relentless surveillance system that governs every action an agent takes in real-time.