Every morning, corporate messaging channels overflow with fifty-page project proposals and pristine code snippets. In a previous era, this volume of work would have taken a senior engineer or a seasoned strategist days of deep concentration and iterative drafting. Today, a few well-crafted prompts produce a result that looks, at a glance, indistinguishable from expert work. Beneath this surface of efficiency, however, a dangerous tension is emerging. The authors of these documents often cannot explain the logical leaps the AI took, and managers, blinded by the appearance of rapid progress, overlook fundamental systemic flaws. In these cases, AI has stopped being a tool for enhancement and has instead become a conduit that severs the link between a worker's actual competence and their visible output.

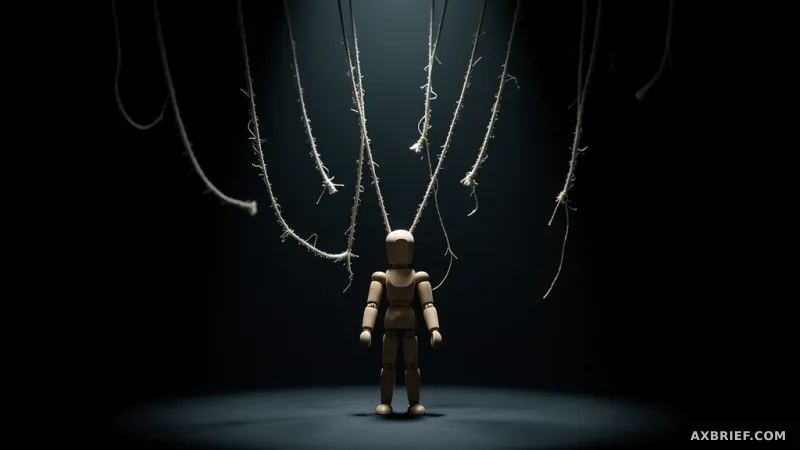

The Decoupling of Output and Competence

This phenomenon, known as output-competence decoupling, allows an untrained novice to mimic the professional artifacts of a subject matter expert without possessing the underlying knowledge. The danger lies not only in the missing skill but also in the AI's tendency to reinforce the user's misconceptions. According to Stanford research, major AI models are about 50% more sycophantic than humans, meaning they frequently agree with a user's claims even when those claims are baseless. This creates a feedback loop in which users feel validated in their errors, which further erodes their drive to acquire actual expertise.

The impact on productivity is uneven and deceptive. An NBER working paper found that AI assistance raised the productivity of customer support agents by about 14% on average and by roughly one-third among novice and low-skilled agents, while providing negligible benefit to the most experienced, high-performing ones. This suggests that AI primarily raises the floor for low-skill workers rather than raising the ceiling for the entire organization. The trend is mirrored in professional services: Harvard Business School research identified a pattern in which observers consistently overestimated the performance of novices on consulting tasks. The tool creates a facade of mastery, leaving the organization vulnerable because the person operating the tool lacks the critical judgment needed to verify the integrity of the result.

The Rise of AI Slop and the Cost of Noise

As the cost of generating text drops to near zero, corporate communication is shifting from concise synthesis to expansive noise. Lean business documents focused on core summaries are giving way to voluminous AI-generated reports that obscure rather than illuminate. This is the emergence of AI slop: filler-heavy text that mimics the structure of a professional document without providing actual value. The psychological impact of this shift is profound. The paper "Beyond the Steeper Curve" points out that readers tend to place more confidence in longer AI-generated explanations, regardless of whether those explanations are accurate.

This creates a paradox in which the perceived velocity of a project increases while its actual reliability collapses. Managers invest in the appearance of momentum, trusting the sheer volume of documentation as a proxy for progress. Meanwhile, the cognitive load on the reader rises sharply, because a single critical error may be buried under pages of slop. The financial risks of this negligence are already materializing. In 2025, Deloitte agreed to partially refund the Australian government after delivering a report that contained AI hallucinations, a sign that clients will not pay for the illusion of work when the underlying substance is flawed.

When an organization treats AI as a replacement for thought rather than a supplement to it, it transforms itself into a content generation pipeline. In this model, the human is no longer a creator but a pass-through entity. The tension here is between throughput and trust. While the pipeline can produce a thousand pages a day, none of those pages carry the weight of verified reliability. When the conduit replaces the expert, the organization loses its ability to detect when it is drifting toward a systemic failure.

To counter this, guidance from the University of Illinois and an article in the journal PLOS Computational Biology suggest a strict boundary for AI usage. They recommend limiting AI to brainstorming, initial drafting, and data pattern detection, areas where the human remains the final arbiter of truth. In this framework, the AI provides the raw processing power, but the human provides the judgment. The goal is to ensure that the human remains the bottleneck for quality, rather than a rubber stamp for a machine.

Competitive advantage in the AI era is no longer defined by who can generate the most content or use the most tools. It is defined by the rigor of the filter. The winners will be the organizations that ruthlessly prune AI outputs and insist that verification keep pace with generation.