The tech world has spent the last year locked in a cycle of volatility surrounding Sam Altman. From the sudden board coup and subsequent reinstatement to the ongoing debates over OpenAI's shift from a non-profit ideal to a commercial powerhouse, the narrative is often shaped by a small circle of influential insiders. When figures like Paul Graham, the legendary co-founder of Y Combinator, step forward to vouch for Altman's character or leadership, the industry generally listens. These endorsements are treated as objective testimonials from a mentor who knows the man behind the machine. However, a closer look at the cap table suggests that these testimonials are not merely personal reflections, but are tied to one of the most successful financial bets in the history of venture capital.

The Financial Architecture of a Giant

The relationship between Y Combinator and OpenAI is not merely one of mentorship, but of foundational investment. OpenAI was founded in late 2015 and received early support through YC Research, an arm of the Y Combinator accelerator. At the time, Sam Altman was serving as the president of Y Combinator, creating a tight-knit loop between the accelerator's resources and the fledgling AI lab's ambitions. As OpenAI evolved from a research project into the dominant force in generative AI, its valuation entered a stratosphere previously reserved for the largest public companies in the world.

Current industry data places OpenAI's valuation at approximately 852 billion dollars. Within this massive valuation, Y Combinator maintains a stake of roughly 0.6 percent. While a fraction of a percent may seem negligible in a typical startup context, the scale of OpenAI transforms this sliver of equity into a staggering asset. Applied to the current valuation, 0.6 percent of 852 billion dollars comes to roughly 5.1 billion dollars. This is not an exit, since the stake remains an unrealized position on paper, but it is a windfall that fundamentally alters the economic standing of the organization and its primary decision-makers.
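The arithmetic behind that figure can be checked with a minimal sketch. The function name `stake_value_usd` and the use of basis points are illustrative choices, not anything drawn from a filing, and the inputs are simply the valuation and stake quoted above, which are not independently verified here.

```python
def stake_value_usd(valuation_usd: int, stake_bps: int) -> int:
    """Return the paper value of an equity stake, in whole dollars.

    stake_bps is the ownership share in basis points (1 bp = 0.01 percent),
    so a 0.6 percent stake is 60 bps. Integer math avoids float rounding.
    """
    return valuation_usd * stake_bps // 10_000


# Figures as quoted in the article; treat them as assumptions.
OPENAI_VALUATION_USD = 852_000_000_000
YC_STAKE_BPS = 60  # 0.6 percent

value = stake_value_usd(OPENAI_VALUATION_USD, YC_STAKE_BPS)
print(f"${value / 1e9:.3f} billion")  # prints "$5.112 billion"
```

Because the stake is a fixed fraction of the company, any percentage change in the valuation carries through one-for-one. A purely hypothetical 10 percent decline in valuation, for example, would lower the paper value of the stake by about 511 million dollars.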

The Conflict of Interest in AI Governance

The tension arises when this financial reality intersects with public discourse. Paul Graham and his wife, Jessica Livingston, remain central figures in Y Combinator's legacy and decision-making framework. When these individuals offer public defenses of Sam Altman's credibility or the ethical direction of OpenAI, they are not speaking as disinterested observers. They are speaking as stakeholders in a 5 billion dollar position. The distinction is critical because the perceived stability of Altman's leadership is closely tied to the valuation of OpenAI. Any significant blow to Altman's reputation or a collapse in leadership confidence could, in theory, reduce the value of that 0.6 percent stake.

Historically, the early equity structures of startups were treated as private matters between founders and investors. However, OpenAI is no longer a typical startup; it is a systemic utility that influences how millions of developers write code and how businesses operate. When the press quotes Y Combinator leadership as authoritative sources on Altman's reliability without disclosing the 5 billion dollar incentive, it creates an information gap. The reader is led to believe they are receiving an unbiased character reference, when they are actually hearing from a party with a massive financial interest in the subject's continued success.

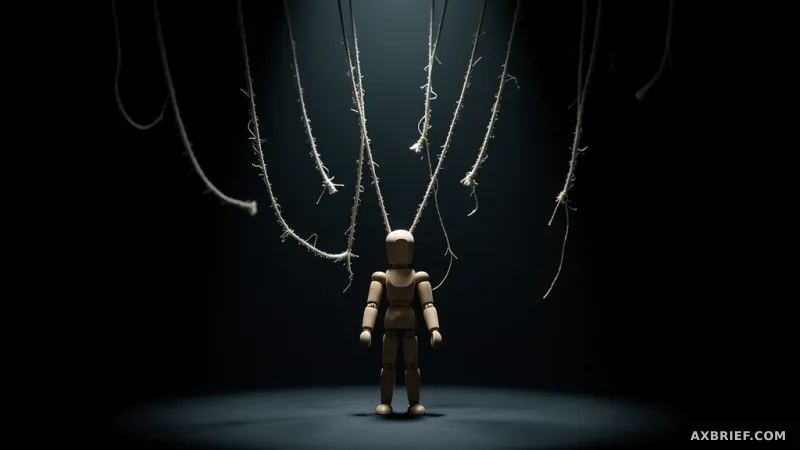

This shift in perspective transforms the conversation from one of personality to one of governance. For the developers and engineers integrating these models into their production pipelines, this lack of transparency represents a practical risk. The decision to build a company's core infrastructure on a specific AI model is no longer just a technical choice based on tokens per second or benchmark scores. It is a bet on the governance and stability of the organization providing the API. If the leadership is shielded from scrutiny by a network of financially incentivized allies, the risk of sudden, erratic pivots in corporate direction increases.

In an era where capital often dictates the narrative of technical progress, the demand for transparency in AI governance is becoming as important as the models themselves. Developers must now evaluate the environment in which their tools are produced with the same rigor they apply to the code. When the logic of capital outweighs the logic of transparency, the reliability of the technology itself comes into question.

The intersection of massive wealth and institutional influence means that the trust we place in AI is now inextricably linked to the transparency of the balance sheets behind it.