The current era of generative AI is defined by the spectacle of the flat screen. Users prompt a model, and seconds later, a hyper-realistic video unfolds, mimicking the laws of physics with startling accuracy. Yet, these outputs remain digital ghosts. No matter how convincing a generated forest or futuristic city looks, it is merely a sophisticated sequence of pixels. The viewer is a passive observer, locked out of the scene, unable to move a single stone or change the angle of a camera once the render is complete. The industry has mastered the art of the movie, but it has yet to master the art of the space.

The Four-Stage Architecture of HY-World 2.0

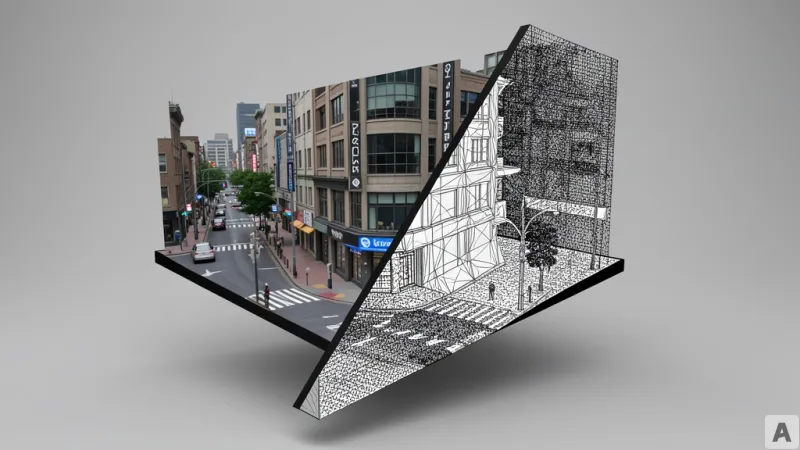

HY-World 2.0 is a multimodal world model framework that shifts the objective from generating a video to constructing a 3D world. Unlike previous iterations, this system accepts a wide array of inputs, including raw text, single images, multi-view images, and existing video files, to synthesize a coherent 3D environment. The technical execution relies on a four-stage pipeline designed to move from a conceptual image to a usable 3D asset. The process begins with HY-Pano 2.0, which generates a panoramic image to establish the global background and environmental context. Once the horizon is set, WorldNav takes over to plan the specific trajectories and movement paths the user or agent will follow through the space.

To transform these paths into a tangible environment, WorldStereo 2.0 expands the spatial boundaries, adding depth and volume to the initial panorama. The final stage is handled by WorldMirror 2.0 and 3D Gaussian Splatting (3DGS), a technique that represents a 3D scene as a large set of 3D Gaussian primitives, each with its own position, shape, opacity, and color, to achieve high-fidelity visuals. WorldMirror 2.0 is engineered as an integrated feed-forward model, meaning it can predict depth, surface normals, camera parameters, point clouds, and 3DGS attributes simultaneously in a single forward pass. Producing all of these outputs in one pass, rather than running a separate estimation step for each, reduces the computational overhead typically associated with 3D reconstruction. To foster community adoption, the inference code and model weights for WorldMirror 2.0 are already available as open source on Hugging Face, with the remaining pipeline components scheduled for sequential release.
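The data flow through the four stages can be sketched roughly as follows. This is only an illustration of the ordering described above, not the project's actual API: every function name, class, and parameter here is a hypothetical placeholder, and each stage is a stub that returns toy data instead of running a model.

```python
from dataclasses import dataclass
from typing import List, Tuple

Vec3 = Tuple[float, float, float]


@dataclass
class Panorama:
    """Stage 1 output (HY-Pano 2.0 in the article): global background context."""
    prompt: str


@dataclass
class Trajectory:
    """Stage 2 output (WorldNav in the article): camera waypoints through the scene."""
    waypoints: List[Vec3]


@dataclass
class ExpandedViews:
    """Stage 3 output (WorldStereo 2.0 in the article): extra views along the path."""
    panorama: Panorama
    num_views: int


@dataclass
class Gaussian:
    """One 3DGS primitive: position, per-axis scale, opacity, and RGB color."""
    center: Vec3
    scale: Vec3
    opacity: float
    color: Vec3


# All four functions below are hypothetical stubs, not real library calls.
def generate_panorama(prompt: str) -> Panorama:
    return Panorama(prompt=prompt)


def plan_trajectory(pano: Panorama, steps: int = 4) -> Trajectory:
    # Toy path: walk in a straight line along the x axis.
    return Trajectory(waypoints=[(float(i), 0.0, 0.0) for i in range(steps)])


def expand_views(pano: Panorama, traj: Trajectory, per_waypoint: int = 3) -> ExpandedViews:
    return ExpandedViews(panorama=pano, num_views=len(traj.waypoints) * per_waypoint)


def reconstruct(views: ExpandedViews) -> List[Gaussian]:
    # Stage 4 stand-in: emit one placeholder Gaussian per expanded view.
    return [
        Gaussian(center=(float(i), 0.0, 0.0), scale=(0.1, 0.1, 0.1),
                 opacity=1.0, color=(0.5, 0.5, 0.5))
        for i in range(views.num_views)
    ]


def build_world(prompt: str) -> List[Gaussian]:
    """Chain the four stages in the order the article describes."""
    pano = generate_panorama(prompt)
    traj = plan_trajectory(pano)
    views = expand_views(pano, traj)
    return reconstruct(views)


scene = build_world("a misty forest at dawn")
print(len(scene), "Gaussians in the toy scene")
```

The point of the sketch is the shape of the hand-offs: each stage consumes the previous stage's output, and only the last stage produces scene primitives that exist independently of any rendered frame.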

From Passive Pixels to Interactive Assets

To understand the significance of this shift, one must look at the limitations of existing world models like Genie 3, Cosmos, or the earlier HY-World 1.5. These models operate on a pixel-based generation logic. They produce a video that feels like a cinematic experience, but the experience ends the moment the playback stops. Because the output is a video file, the objects within it have no independent existence; you cannot select a chair in a generated room and move it two feet to the left, nor can you rotate the perspective to see what lies behind a wall.

HY-World 2.0 breaks this ceiling by generating actual 3D assets, specifically meshes and 3DGS data. This transition changes the output from a recording to a resource. Because the results are true 3D assets, they can be imported directly into professional 3D tools, game engines, and simulators such as Blender, Unity, Unreal Engine, and Isaac Sim. The result is a playable game map or a navigable architectural model rather than a movie clip. In terms of output quality, HY-World 2.0 is reported to match closed-source models like Marble and currently stands as the state of the art among open-source alternatives. Generating assets directly removes the need for expensive, time-consuming 3D scanning hardware, allowing developers to build complex virtual reality environments or reinforcement learning spaces for robotics using nothing more than a few photographs or a text prompt.
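To make the difference concrete, here is a minimal sketch of the chair example: once an object exists as 3D points rather than pixels, moving it is a simple translation, and the result can be written to a standard file format. The `SceneObject` class and both helper functions are hypothetical, the choice of negative x as "left" is an assumption, and the exported file is a bare point-only ASCII PLY, not the richer layout a real 3DGS or mesh export would use.

```python
from dataclasses import dataclass
from typing import List, Tuple

Vec3 = Tuple[float, float, float]


@dataclass
class SceneObject:
    """A hypothetical selectable object: a name plus its 3D points in meters."""
    name: str
    points: List[Vec3]


def translate(obj: SceneObject, offset: Vec3) -> SceneObject:
    """Return a copy of the object with every point shifted by `offset`."""
    dx, dy, dz = offset
    return SceneObject(obj.name, [(x + dx, y + dy, z + dz) for x, y, z in obj.points])


def to_ascii_ply(points: List[Vec3]) -> str:
    """Serialize points as a minimal ASCII PLY file (vertex positions only)."""
    header = [
        "ply",
        "format ascii 1.0",
        f"element vertex {len(points)}",
        "property float x",
        "property float y",
        "property float z",
        "end_header",
    ]
    body = [f"{x} {y} {z}" for x, y, z in points]
    return "\n".join(header + body) + "\n"


chair = SceneObject("chair", [(0.0, 0.0, 0.0), (0.5, 0.0, 0.0), (0.5, 1.0, 0.0)])

# Two feet is roughly 0.61 m; "left" is assumed to mean negative x here.
moved = translate(chair, (-0.61, 0.0, 0.0))

ply_text = to_ascii_ply(moved.points)
print(ply_text)
```

No equivalent operation exists for a video file, where the chair is only a pattern of pixels that changes from frame to frame.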

This evolution marks the transition from AI that mimics sight to AI that constructs reality.