The AI gold rush is running into unsustainable compute costs. As enterprises scale their large language model deployments, the cost of centralized cloud infrastructure is becoming a primary bottleneck for innovation. While the industry has focused on building larger data centers, a new paradigm is emerging that looks not toward the cloud, but toward the millions of idle Mac computers sitting in home offices and studios worldwide. Darkbloom is pioneering this shift by turning dormant Apple Silicon hardware into a global, decentralized inference network that cuts operational costs by up to 70 percent.

Dismantling the Compute Monopoly

To understand why Darkbloom is a disruptive force, one must first examine the current AI supply chain. Today, the path from a chip to a user is fraught with margins. Nvidia produces the hardware, cloud giants like AWS or Azure lease that hardware at a premium, and AI service providers add another layer of cost to cover their own overhead and profit. This structure creates a compute tax that inflates the price of every single token generated by an AI model. It is a traditional retail model where the product passes through multiple warehouses and distributors before reaching the consumer.
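
To make the compounding concrete, here is a minimal sketch of how stacked markups multiply. The three margin values are illustrative assumptions, not figures from Nvidia, any cloud provider, or Darkbloom, and `stacked_price` is a hypothetical helper name.

```python
def stacked_price(base_cost, margins):
    """Return the price after each layer marks up the previous layer's price."""
    price = base_cost
    for margin in margins:
        price *= 1 + margin
    return price


# Illustrative assumptions only: three layers taking 50%, 40% and 30% markups.
layers = [0.50, 0.40, 0.30]
multiplier = stacked_price(1.0, layers)
print(f"End price is {multiplier:.2f}x the underlying cost")
```

With these assumed margins the end price comes to about 2.73 times the underlying cost, because each layer marks up the price it was charged rather than the original cost.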

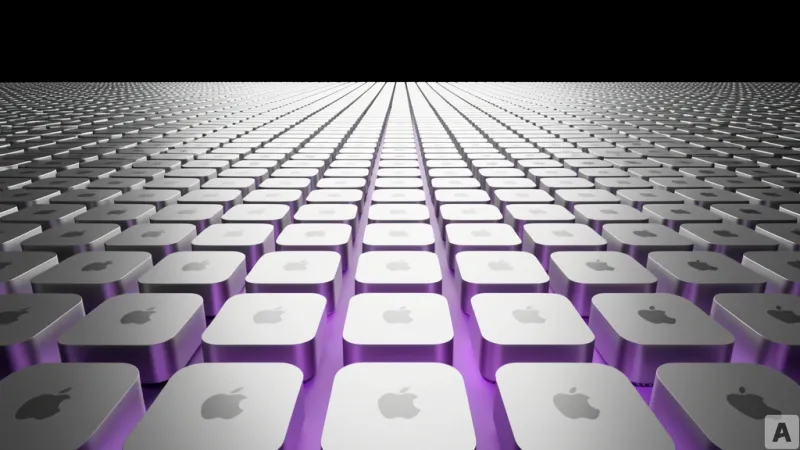

Darkbloom removes these intermediaries by creating a direct-to-consumer marketplace for compute. Apple Silicon uses a Unified Memory Architecture in which the CPU and GPU share a single pool of memory, so the GPU in a consumer Mac can work directly on model weights held in system RAM. Darkbloom puts that capacity to work on AI inference. Instead of paying for a slice of a massive, energy-hungry data center, developers can tap into a distributed web of existing hardware. For the provider, an idle Mac becomes a passive income stream that covers electricity costs and generates profit. For the developer, the result is a dramatic reduction in the cost of running models without sacrificing the performance required for production-grade applications.

Privacy in a Trustless Environment

The most significant hurdle for any decentralized network is trust. The prospect of sending sensitive proprietary data to a stranger's home computer is a non-starter for most enterprises. Darkbloom addresses this through a sophisticated security architecture that ensures data privacy even when the hardware is not owned by the service provider. The system relies on a combination of end-to-end encryption and hardware-level isolation.

When a user sends a prompt to the network, the data is encrypted before it leaves the source. The encrypted packet is then routed to a participating Mac, where it remains unreadable to the host. The computation itself happens within a secure enclave, a protected region of the hardware that is isolated from the host operating system. This means the owner of the Mac cannot inspect memory to see what the model is processing or what it outputs. The hardware acts as a locked vault in which the computation takes place out of the owner's view.

To further ensure reliability, the network employs a verification system. Because decentralized nodes can, in principle, return incorrect or malicious results, Darkbloom implements a proof-of-computation mechanism. This checks that the answer returned by a distributed Mac is mathematically consistent with the model's weights, providing a level of trust comparable to that of centralized servers.

The Frictionless Path to Migration

Technical superiority means little if the barrier to adoption is too high. Many companies hesitate to migrate their AI pipelines because switching providers can demand immense engineering effort. Darkbloom addresses this by implementing full compatibility with the OpenAI API, the de facto standard interface for hosted models. This strategic move turns the industry's most popular interface into a universal plug.

For a developer, switching from a centralized provider to Darkbloom does not require rewriting thousands of lines of code or restructuring application logic. It is as simple as changing a base URL in a configuration file. By mirroring the API structure that the industry has already standardized around, Darkbloom allows teams to swap their backend for a more cost-effective alternative in a matter of minutes. This plug-and-play capability removes the friction of migration and allows companies to optimize their spend in real time based on their current traffic and budget constraints.
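
As a rough illustration of what a base-URL swap looks like, the sketch below builds the same OpenAI-style chat completion request against two backends using only the Python standard library. The Darkbloom address, the API key, and the model name are placeholders assumed for the example, not documented values, and `build_chat_request` is a hypothetical helper. The requests are constructed but never sent.

```python
import json
import urllib.request

OPENAI_BASE_URL = "https://api.openai.com/v1"
# Placeholder address, assumed for illustration; not a documented Darkbloom endpoint.
DARKBLOOM_BASE_URL = "https://api.darkbloom.example/v1"


def build_chat_request(base_url, api_key, model, prompt):
    """Build (but do not send) an OpenAI-style chat completion request."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{base_url.rstrip('/')}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


# Same key, model name, and prompt (all placeholders); only the base URL changes.
before = build_chat_request(OPENAI_BASE_URL, "sk-placeholder", "example-model", "Hello")
after = build_chat_request(DARKBLOOM_BASE_URL, "sk-placeholder", "example-model", "Hello")

print(before.full_url)
print(after.full_url)
```

The request path, headers, and JSON body are identical in both cases, which is why application code built on an OpenAI-compatible client can, in principle, stay untouched when the base URL is moved into configuration.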

This approach transforms the way we think about AI infrastructure. We are moving away from a world of monolithic compute silos and toward a fluid, edge-based ecosystem. By unlocking the latent power of the hardware already present in our homes, Darkbloom is not just lowering prices; it is democratizing access to the computational power required to run the next generation of intelligent applications.

The shift toward decentralized inference represents more than just a cost-saving measure. It is a fundamental rethinking of where AI computation physically happens. As models become more efficient and the capability of consumer hardware continues to climb, the reliance on massive, centralized server farms may soon seem as antiquated as the mainframe computers of the 1960s. The future of AI is not just in the cloud, but in the collective power of the devices already surrounding us.