In a modern enterprise AI project, tension usually peaks between the AI lead and the Chief Information Security Officer (CISO). The developer wants the agent to have real-time access to a proprietary database so it can give accurate answers, while the security team views any external connection into the internal network as an unacceptable risk. This stalemate can last for months, producing manual network configurations, delayed deployments, and AI agents that are functionally blind to the company's most valuable internal data.

The Architecture of Secure Connectivity

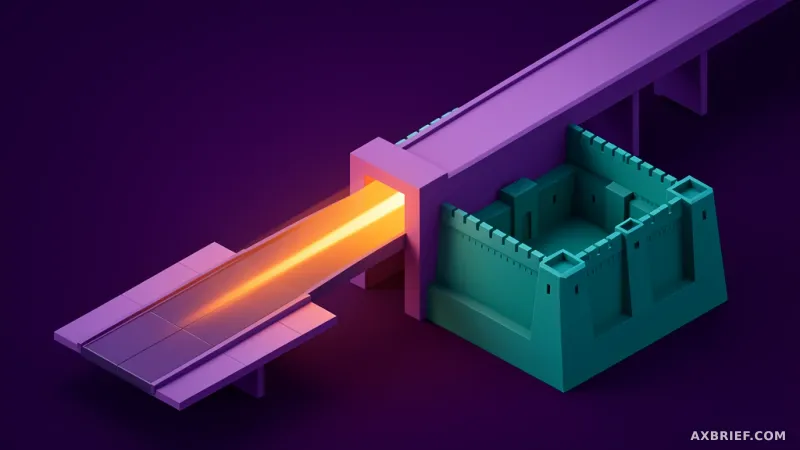

Amazon Bedrock AgentCore Gateway acts as the secure conduit that resolves this conflict by managing how AI agents connect to resources behind an Amazon VPC boundary. It is designed to let agents reach MCP servers (servers that use the Model Context Protocol to standardize how AI models communicate with external tools) without exposing those servers to the public internet. The technical foundation of this connectivity is the Resource Gateway, which creates an Elastic Network Interface (ENI) in each of the specified subnets of the user's VPC to establish a dedicated communication path.
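To make the one-ENI-per-subnet idea concrete, here is a minimal in-memory model in Python. It does not call any AWS API: `SubnetRef`, `EniRef`, and `ResourceGatewayModel` are hypothetical names, and the ENI identifiers it generates are placeholders rather than real AWS values. It only illustrates that the Resource Gateway's footprint follows the subnets (and therefore the Availability Zones) it is given.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class SubnetRef:
    subnet_id: str
    availability_zone: str


@dataclass(frozen=True)
class EniRef:
    eni_id: str  # placeholder identifier, not a real AWS ENI ID
    subnet_id: str


@dataclass
class ResourceGatewayModel:
    """Hypothetical model of a Resource Gateway: one ENI per configured subnet."""

    vpc_id: str
    subnets: list[SubnetRef]
    enis: list[EniRef] = field(default_factory=list)

    def provision(self) -> list[EniRef]:
        # Create exactly one ENI record in each subnet the gateway was given.
        self.enis = [
            EniRef(eni_id=f"eni-placeholder-{i}", subnet_id=s.subnet_id)
            for i, s in enumerate(self.subnets)
        ]
        return self.enis

    def zones_with_entry_point(self) -> set[str]:
        # Availability Zones in which the gateway has at least one network interface.
        zone_of = {s.subnet_id: s.availability_zone for s in self.subnets}
        return {zone_of[e.subnet_id] for e in self.enis}


if __name__ == "__main__":
    gw = ResourceGatewayModel(
        vpc_id="vpc-0example",
        subnets=[SubnetRef("subnet-a", "us-east-1a"), SubnetRef("subnet-b", "us-east-1b")],
    )
    for eni in gw.provision():
        print(eni.subnet_id, eni.eni_id)
    print(sorted(gw.zones_with_entry_point()))
```

Choosing subnets in more than one Availability Zone is what gives the gateway an entry point in each of those zones.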

To prevent unrestricted access to the network, the system uses a Resource Configuration, which lets administrators define the specific domain names or IP addresses the Gateway is permitted to reach, so the agent can access only the exact endpoints its task requires. Once the Service Network Resource Association is established, the AgentCore Gateway service is authorized to call those internal endpoints. This architecture supports a wide range of integrations, including private Amazon API Gateway endpoints, MCP servers running on Amazon EKS, and private REST APIs. The Resource VPC can reside in the same AWS account as the Gateway or in a separate AWS account.
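The access rule described above (no association, no access; association present, only listed endpoints) can be sketched as a small allowlist check. This is a simplified, hypothetical model: `ResourceConfigurationModel` and its `associated` flag are illustrative stand-ins for the Resource Configuration and the Service Network Resource Association, and the exact-match semantics are an assumption made for clarity, not a description of how AWS evaluates requests.

```python
import ipaddress
from dataclasses import dataclass, field


def _normalize_domain(name: str) -> str:
    # DNS names are case-insensitive; a trailing dot (the root) is ignored here.
    return name.strip().lower().rstrip(".")


@dataclass
class ResourceConfigurationModel:
    """Hypothetical allowlist standing in for a Resource Configuration."""

    allowed_domains: set[str] = field(default_factory=set)
    allowed_ips: set[str] = field(default_factory=set)
    # Stands in for the Service Network Resource Association being in place.
    associated: bool = False

    def permits(self, host: str) -> bool:
        """Return True only if the association exists and host is explicitly listed."""
        if not self.associated:
            return False
        try:
            ip = ipaddress.ip_address(host)
        except ValueError:
            # Not an IP literal: treat it as a domain name and require an exact match.
            allowed = {_normalize_domain(d) for d in self.allowed_domains}
            return _normalize_domain(host) in allowed
        # IP literal: compare parsed addresses so equivalent spellings still match.
        return any(ip == ipaddress.ip_address(a) for a in self.allowed_ips)
```

Usage: with `allowed_domains={"api.internal.example.com"}` and `allowed_ips={"10.0.1.25"}`, a neighbouring host such as `10.0.1.26` or `billing.internal.example.com` is refused even after the association is made, which is the "exact endpoints only" property the Resource Configuration is meant to provide.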

The Strategic Trade-off Between Control and Speed

While the underlying connectivity is the same, the operational experience differs depending on whether a team chooses the managed or the self-managed deployment path. The Managed VPC Resource mode is designed for rapid deployment and minimal overhead. In this configuration, the user provides only the VPC ID, subnet IDs, and security group IDs, and AWS creates and maintains the Resource Gateway. It works as a turnkey service in which the user has read-only visibility into the connection status, trading granular configuration for speed of execution.
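Because managed mode asks for only three inputs, it can help to check them before submitting anything. The sketch below is a hypothetical pre-flight validator: `ManagedVpcInput` is an illustrative container, not the AgentCore request shape, and the rules it enforces are assumptions. It relies only on the standard AWS ID format of a resource prefix followed by 8 (older) or 17 (newer) lowercase hexadecimal characters.

```python
import re
from dataclasses import dataclass


def _id_pattern(prefix: str) -> re.Pattern:
    # Prefix plus 8 or 17 lowercase hex characters, e.g. "subnet-0123456789abcdef0".
    return re.compile(rf"{prefix}-(?:[0-9a-f]{{8}}|[0-9a-f]{{17}})")


VPC_ID = _id_pattern("vpc")
SUBNET_ID = _id_pattern("subnet")
SG_ID = _id_pattern("sg")


@dataclass(frozen=True)
class ManagedVpcInput:
    """Hypothetical container for the three inputs managed mode asks for."""

    vpc_id: str
    subnet_ids: tuple[str, ...]
    security_group_ids: tuple[str, ...]

    def problems(self) -> list[str]:
        """Return human-readable problems; an empty list means the input looks well-formed."""
        found = []
        if not VPC_ID.fullmatch(self.vpc_id):
            found.append(f"malformed VPC ID: {self.vpc_id!r}")
        if not self.subnet_ids:
            found.append("at least one subnet ID is required")
        if len(set(self.subnet_ids)) != len(self.subnet_ids):
            found.append("duplicate subnet IDs")
        found += [f"malformed subnet ID: {s!r}" for s in self.subnet_ids if not SUBNET_ID.fullmatch(s)]
        found += [
            f"malformed security group ID: {g!r}"
            for g in self.security_group_ids
            if not SG_ID.fullmatch(g)
        ]
        return found
```

A format check like this cannot confirm that the subnets actually belong to the VPC; that still has to be verified against the account.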

In contrast, the Self-managed Lattice Resource mode is built for environments where network security policies are rigid and non-negotiable. Here, users manually create the Resource Gateway and Resource Configuration in Amazon VPC Lattice before linking them to the AgentCore Gateway. This approach gives full control over the number of IPv4 addresses per ENI, the exact subnet placement, and the composition of security group rules. That granularity is essential in complex corporate environments that use AWS Resource Access Manager (RAM) to share resources across multiple AWS accounts. The managed mode offers the efficiency of a pre-packaged service; the self-managed mode offers the precision of a custom-engineered network.
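Two of those knobs interact: every address you add per ENI is consumed once in each subnet the gateway spans. The sketch below is a hypothetical capacity-planning helper, not a VPC Lattice call. `SelfManagedPlan` and its inputs are illustrative, the one-ENI-per-subnet assumption follows the architecture described earlier, and the only AWS-specific fact it encodes is that five addresses in every subnet are reserved.

```python
import ipaddress
from dataclasses import dataclass

# AWS reserves five addresses in every subnet (the first four and the last one).
AWS_RESERVED_PER_SUBNET = 5


@dataclass(frozen=True)
class SelfManagedPlan:
    """Hypothetical planning inputs for a self-managed Resource Gateway."""

    ipv4_addresses_per_eni: int
    subnet_cidrs: dict[str, str]      # subnet ID -> IPv4 CIDR block
    addresses_in_use: dict[str, int]  # subnet ID -> addresses already allocated

    def total_gateway_addresses(self) -> int:
        # Assumes one ENI per subnet, each holding ipv4_addresses_per_eni addresses.
        return self.ipv4_addresses_per_eni * len(self.subnet_cidrs)

    def free_addresses(self, subnet_id: str) -> int:
        size = ipaddress.ip_network(self.subnet_cidrs[subnet_id]).num_addresses
        return size - AWS_RESERVED_PER_SUBNET - self.addresses_in_use.get(subnet_id, 0)

    def subnets_without_room(self) -> list[str]:
        # Subnets that cannot fit one more ENI of the requested size.
        return [s for s in self.subnet_cidrs if self.free_addresses(s) < self.ipv4_addresses_per_eni]


if __name__ == "__main__":
    plan = SelfManagedPlan(
        ipv4_addresses_per_eni=8,
        subnet_cidrs={"subnet-a": "10.0.0.0/28", "subnet-b": "10.0.1.0/24"},
        addresses_in_use={"subnet-a": 4, "subnet-b": 10},
    )
    print(plan.total_gateway_addresses())  # 8 addresses x 2 subnets
    print(plan.subnets_without_room())
```

In this example the /28 subnet has 16 addresses, 11 of them usable, and only 7 still free, so it cannot host an ENI that needs 8. That is exactly the kind of placement decision self-managed mode leaves to the network team.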

This choice ultimately boils down to the balance between deployment velocity and administrative sovereignty. Managed mode accelerates the time-to-value for most teams, but self-managed mode ensures that security teams can revoke or modify specific access permissions without relying on automated defaults.

The competitive frontier for AI agents has shifted from the raw intelligence of the model to the robustness of the infrastructure. The winner will not be the model that knows the most, but the one that can most securely and efficiently access the private data locked inside the enterprise firewall.