For years, the promise of edge AI has been trapped in a cycle of narrow specialization. We have seen cameras that can detect a face or sensors that can trigger an alarm, but these systems are essentially sophisticated if-then statements. The industry has long craved a device that can see, reason, and act autonomously without relying on a massive cloud cluster. This week, a demonstration appearing in the Google_Gemma repository suggests that the gap between a static chatbot and a physical agent has finally closed, and it happened on a piece of hardware that fits in the palm of a hand.

The Technical Blueprint for Local Vision-Language-Action

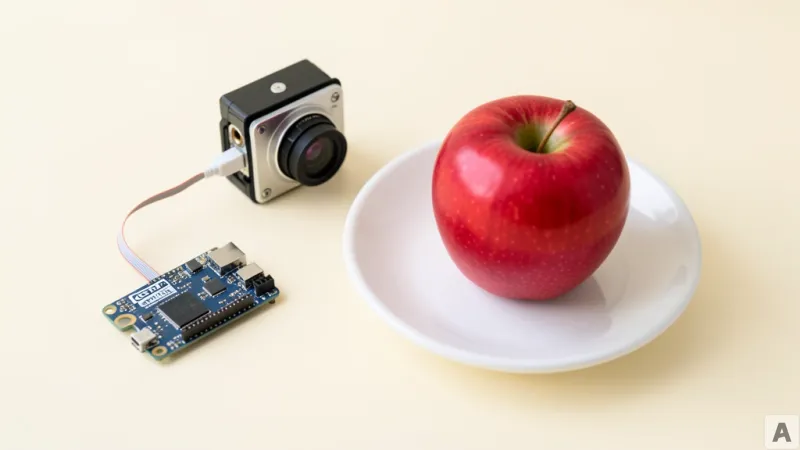

The implementation centers on the NVIDIA Jetson Orin Nano Super, specifically the 8GB memory variant. This is a constrained environment by modern LLM standards, yet it serves as the host for Gemma 4, Google's latest lightweight language model. To make this possible, the system leverages llama.cpp, a library designed to run LLMs with high efficiency on consumer-grade hardware. The deployment utilizes a quantized version of the model, specifically the Q4_K_M precision, which strikes a balance between cognitive performance and memory footprint. For users facing extreme memory pressure, the documentation suggests dropping down to Q3 quantization to maintain stability.
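
As a rough check on why a 4-bit quantization fits on this board, the weight footprint can be estimated from the parameter count and the average bits per weight. The sketch below is back-of-the-envelope arithmetic only. The 4-billion parameter count is an assumption inferred from the `4b` in the model filename, and the bits-per-weight figures are approximate averages often quoted for llama.cpp K-quants, not measurements of this model. It ignores the KV cache, the multimodal projector, and runtime overhead, all of which share the same 8GB on a Jetson.

```python
# Approximate average bits per weight. These are assumed, illustrative values,
# not figures measured on the model discussed in the article.
APPROX_BITS_PER_WEIGHT = {
    "F16": 16.0,
    "Q4_K_M": 4.85,
    "Q3_K_M": 3.9,
}


def estimate_weight_gib(n_params: float, quant: str) -> float:
    """Return the estimated size of the quantized weights in GiB."""
    total_bits = n_params * APPROX_BITS_PER_WEIGHT[quant]
    return total_bits / 8 / 2**30


if __name__ == "__main__":
    n_params = 4e9  # assumption: inferred from "4b" in the model filename
    for quant in APPROX_BITS_PER_WEIGHT:
        print(f"{quant:7s} ~{estimate_weight_gib(n_params, quant):.1f} GiB")
```

Under these assumptions the unquantized weights alone would nearly fill the board's memory, while either quantized variant leaves room for the operating system, the speech models, and the context cache.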

Setting up this environment requires a specific sequence of system dependencies to ensure the hardware can communicate with the model. The process begins with the installation of essential build tools and libraries:

sudo apt-get update

sudo apt-get install -y build-essential cmake git python3-pip python3-dev libasound2-dev

Because 8GB of RAM is a tight ceiling for a vision-capable model, the risk of Out-of-Memory (OOM) crashes is high. The solution is a dedicated swap file that acts as a safety net for system memory:

sudo fallocate -l 8G /swapfile

sudo chmod 600 /swapfile

sudo mkswap /swapfile

sudo swapon /swapfile

Once the environment is stabilized, the model is served via llama-server. A critical component of this setup is the mmproj (multimodal projector) file, which allows the language model to interpret visual data. The command uses the -ngl 99 flag to offload all model layers to the GPU, maximizing the Orin Nano's hardware acceleration, while the --jinja flag enables the chat templating required for tool calling:

./llama-server -m gemma-4-4b-it-q4_k_m.gguf --mmproj mmproj-gemma-4.bin -ngl 99 --jinja

To complete the loop from perception to interaction, the system integrates Parakeet STT for speech-to-text and Kokoro TTS for text-to-speech. These models are pulled automatically from Hugging Face when the main Python controller is launched:

python3 Gemma4_vla.py

For developers who prefer to bypass the manual build process, the Jetson AI Lab provides a pre-configured Docker image that encapsulates the entire llama.cpp environment:

docker run -it --rm --runtime nvidia --network host -v ~/.cache/huggingface:/root/.cache/huggingface jetson-ai-lab/llama-cpp:latest -m gemma-4-4b-it-q4_k_m.gguf -hf
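
Once the server is running, whether built by hand or started from the container, a client can send it a camera frame over HTTP. The following is a minimal sketch, not the controller's actual code. It assumes llama-server is listening on its default `localhost:8080`, exposes the OpenAI-compatible `/v1/chat/completions` endpoint, and accepts inline `image_url` parts when a multimodal projector is loaded. The function names and the `max_tokens` value are illustrative.

```python
import base64
import json
import urllib.request

# Assumption: llama-server on its default port with the OpenAI-compatible API.
SERVER_URL = "http://localhost:8080/v1/chat/completions"


def build_vision_request(image_bytes: bytes, question: str) -> dict:
    """Build an OpenAI-style chat payload carrying one inline JPEG image."""
    encoded = base64.b64encode(image_bytes).decode("ascii")
    return {
        "messages": [
            {
                "role": "user",
                "content": [
                    {"type": "text", "text": question},
                    {
                        "type": "image_url",
                        "image_url": {"url": f"data:image/jpeg;base64,{encoded}"},
                    },
                ],
            }
        ],
        "max_tokens": 128,  # illustrative cap to keep replies short
    }


def ask(payload: dict, url: str = SERVER_URL) -> str:
    """POST the payload to the server and return the assistant's text reply."""
    request = urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(request, timeout=120) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]
```

With a server running, a call such as `ask(build_vision_request(jpeg_bytes, "What do you see?"))` would return the model's description, where `jpeg_bytes` is any JPEG read from disk or captured from the camera.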

The Shift from Hardcoded Triggers to Autonomous Agency

On the surface, a robot taking a photo and describing it seems like a standard multimodal task. However, the technical distinction here lies in the difference between image captioning and a Vision-Language-Action (VLA) workflow. Most existing edge AI demos rely on hardcoded logic: if the user says the word "photo", the system triggers the camera function. This is not intelligence; it is a shortcut.

Gemma 4 VLA operates on a fundamentally different principle. By utilizing the native tool-calling capabilities enabled by the --jinja flag, the model is not told when to use the camera. Instead, it decides. When a user asks what is currently in front of the device, the model analyzes the intent of the query and realizes it lacks the necessary visual context to provide an accurate answer. It then autonomously invokes the take_photo tool as a means to acquire the missing information.
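
To make the distinction concrete, here is a hedged sketch of what offering the camera as a tool could look like with an OpenAI-style `tools` field, which llama-server accepts when started with `--jinja`. Only the tool name `take_photo` comes from the demo. The description text, the empty parameter list, and the helper functions are assumptions for illustration, not the demo's actual code.

```python
# Hypothetical tool schema: only the name "take_photo" is taken from the article.
TAKE_PHOTO_TOOL = {
    "type": "function",
    "function": {
        "name": "take_photo",
        "description": "Capture a camera frame to see what is in front of the device.",
        "parameters": {"type": "object", "properties": {}, "required": []},
    },
}


def build_request(user_text: str) -> dict:
    """Offer the tool to the model without telling it when to use it."""
    return {
        "messages": [{"role": "user", "content": user_text}],
        "tools": [TAKE_PHOTO_TOOL],
    }


def requested_tools(response: dict) -> list:
    """Return the names of the tools the model chose to call, if any."""
    message = response["choices"][0]["message"]
    calls = message.get("tool_calls") or []
    return [call["function"]["name"] for call in calls]
```

The request contains no keyword matching at all. Whether `requested_tools` comes back empty or contains `take_photo` depends entirely on the model's reading of the user's intent.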

This transition transforms the model from a passive responder into an active agent. The camera is no longer a feature the user activates; it is a tool the AI employs to solve a problem. The tension here is between the limited compute of the 8GB Orin Nano and the complex reasoning required for autonomous tool selection. The fact that the full loop of reasoning, tool invocation, visual processing, and verbal response runs in real time on the edge suggests that the bottleneck for physical AI is shifting away from raw compute and toward the efficiency of the model's decision-making.
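
The control flow of that loop can be sketched in a few lines. This is an illustrative outline rather than the demo's controller. The four callables stand in for Parakeet STT, the llama-server client, the camera, and Kokoro TTS, and the simplified reply shape (`{"tool": ..., "text": ...}`) is a hypothetical convenience, not a real API.

```python
from typing import Callable, Optional


def run_turn(
    listen: Callable[[], str],
    ask_model: Callable[[str, Optional[bytes]], dict],
    take_photo: Callable[[], bytes],
    speak: Callable[[str], None],
) -> str:
    """Run one turn from spoken question to spoken answer and return the answer."""
    user_text = listen()                     # speech-to-text
    reply = ask_model(user_text, None)       # first pass: text only
    if reply.get("tool") == "take_photo":    # the model asked for visual context
        image = take_photo()
        reply = ask_model(user_text, image)  # second pass: text plus image
    answer = reply.get("text", "")
    speak(answer)                            # text-to-speech
    return answer
```

The camera is only touched inside the branch the model itself triggers, which is the structural difference from a keyword-driven pipeline.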

By decoupling the action from a specific keyword and attaching it to a cognitive need, the system demonstrates a primitive form of environmental awareness. The model is not merely describing a static image; it is interacting with the physical world to satisfy an information gap. This shift suggests that the future of edge computing is not about running larger models, but about giving smaller models the agency to use the hardware they reside on.

AI is rapidly evolving from a digital entity confined to a screen into a physical presence capable of observing and interpreting the world in real time.