This week, the GitHub trending page lit up with weight files from an unexpected source: Kimi K2.6, the latest model from Chinese AI lab Moonshot AI. Developers are now racing to test whether a 1-trillion-parameter open-source model can actually run on consumer hardware — and early benchmarks suggest it might outperform some of the most expensive closed models on the market.

The Specs and the Numbers

Moonshot AI has released Kimi K2.6 under a Modified MIT license, with weights available on Hugging Face. The model uses a Mixture-of-Experts (MoE) architecture: 1 trillion total parameters, but only 32 billion activated per token. Out of 384 experts, 8 are selected per token, plus one shared expert that is always active. The architecture includes 61 layers (one dense layer), an attention hidden dimension of 7,168, an MoE hidden dimension of 2,048, and 64 attention heads.
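To make the routing numbers concrete, here is a minimal sketch of top-8-of-384 expert selection with one always-on shared expert. The expert and layer counts come from the article. The gating rule (a softmax over the selected logits only), the function names, and the scalar toy "experts" are assumptions for illustration, not Moonshot AI's implementation.

```python
import math
import random

NUM_EXPERTS = 384  # routed experts (from the article)
TOP_K = 8          # routed experts selected per token (from the article)


def route_token(router_logits, top_k=TOP_K):
    """Return (expert_index, weight) pairs for the top_k highest-scoring experts.

    Assumption: weights are a softmax over the selected logits only.
    """
    order = sorted(range(len(router_logits)),
                   key=lambda i: router_logits[i], reverse=True)
    top = order[:top_k]
    peak = max(router_logits[i] for i in top)  # subtract max for stability
    exps = [math.exp(router_logits[i] - peak) for i in top]
    total = sum(exps)
    return [(i, e / total) for i, e in zip(top, exps)]


def moe_output(x, routed_experts, shared_expert, router_logits):
    """Add the always-active shared expert to the weighted routed experts."""
    out = shared_expert(x)
    for idx, weight in route_token(router_logits):
        out += weight * routed_experts[idx](x)
    return out


# Toy usage: scalar functions stand in for the real feed-forward experts.
rng = random.Random(0)
logits = [rng.gauss(0.0, 1.0) for _ in range(NUM_EXPERTS)]
experts = [lambda x, s=i: x * (1 + s / NUM_EXPERTS) for i in range(NUM_EXPERTS)]
selected = route_token(logits)
y = moe_output(1.0, experts, lambda x: x, logits)

# 32 billion active out of 1 trillion total parameters per token.
active_fraction = 32e9 / 1e12
print(len(selected), round(active_fraction, 3))
```

Only 8 of the 384 routed experts run for a given token, which is why the per-token compute tracks the 32 billion activated parameters (about 3.2% of the total) rather than the full trillion.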

Kimi K2.6 is a native multimodal model. It processes images and video through MoonViT, a 400-million-parameter vision encoder. The attention mechanism uses Multi-head Latent Attention (MLA), the activation function is SwiGLU, the vocabulary size is 160,000 tokens, and the context length is 256,000 tokens. Deployment is recommended via vLLM, SGLang, or KTransformers. Since K2.6 shares the same architecture as Kimi K2.5, existing configurations can be reused. The required Transformers version is 4.57.1 or higher but below 5.0.0.

```bash
# Check transformers version (>=4.57.1, <5.0.0)
pip install "transformers>=4.57.1,<5.0.0"

# Install vLLM, SGLang, or KTransformers
# vLLM example
pip install vllm

# Download weights from Hugging Face
# https://huggingface.co/moonshotai/Kimi-K2.6
```
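The version window stated above (4.57.1 or higher, below 5.0.0) can also be checked from Python before loading the model. This is a rough sketch using only the standard library. The helper names `parse_version` and `is_supported` are hypothetical, and the parser ignores pre-release suffixes such as `rc1` or `.dev0`, so it is coarser than pip's own resolver.

```python
import re

MIN_VERSION = (4, 57, 1)  # inclusive lower bound from the article
MAX_VERSION = (5, 0, 0)   # exclusive upper bound from the article


def parse_version(text):
    """Parse the leading 'X.Y' or 'X.Y.Z' of a version string into ints."""
    match = re.match(r"(\d+)\.(\d+)(?:\.(\d+))?", text)
    if match is None:
        raise ValueError(f"unrecognized version string: {text!r}")
    major, minor, patch = match.groups()
    return (int(major), int(minor), int(patch or 0))


def is_supported(text):
    """True when the version falls inside [4.57.1, 5.0.0)."""
    return MIN_VERSION <= parse_version(text) < MAX_VERSION


for candidate in ("4.57.0", "4.57.1", "4.60.2", "5.0.0"):
    print(candidate, is_supported(candidate))
```

In a real environment the installed version could be read with `importlib.metadata.version("transformers")` and passed to `is_supported`.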

On SWE-Bench Pro, Kimi K2.6 scored 58.6. For comparison: GPT-5.4 (xhigh) scored 57.7, Claude Opus 4.6 (max effort) scored 53.4, Gemini 3.1 Pro (thinking high) scored 54.2, and Kimi K2.5 scored 50.7. On SWE-Bench Verified, K2.6 reached 80.2, placing it among the top-tier models.

On Terminal-Bench 2.0 (using the Terminus-2 agent framework), K2.6 scored 66.7. GPT-5.4 and Claude Opus 4.6 scored 65.4 each, while Gemini 3.1 Pro scored 68.5. On LiveCodeBench (v6), K2.6 hit 89.6, beating Claude Opus 4.6's 88.8. On Humanity's Last Exam (HLE-Full, with tool use), K2.6 scored 54.0, leading GPT-5.4 (52.1), Claude Opus 4.6 (53.0), and Gemini 3.1 Pro (51.4).
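The scores quoted above are easier to compare side by side. The sketch below uses only the numbers reported in this article and computes, for each benchmark, K2.6's rank among the listed models and its margin over the best other model. The `summarize` helper is hypothetical. Note that LiveCodeBench has only two quoted entries, so the rank there covers a partial field.

```python
SCORES = {
    "SWE-Bench Pro": {
        "Kimi K2.6": 58.6, "GPT-5.4": 57.7, "Claude Opus 4.6": 53.4,
        "Gemini 3.1 Pro": 54.2, "Kimi K2.5": 50.7,
    },
    "Terminal-Bench 2.0": {
        "Kimi K2.6": 66.7, "GPT-5.4": 65.4, "Claude Opus 4.6": 65.4,
        "Gemini 3.1 Pro": 68.5,
    },
    "LiveCodeBench v6": {"Kimi K2.6": 89.6, "Claude Opus 4.6": 88.8},
    "HLE-Full (tools)": {
        "Kimi K2.6": 54.0, "GPT-5.4": 52.1, "Claude Opus 4.6": 53.0,
        "Gemini 3.1 Pro": 51.4,
    },
}


def summarize(scores, model="Kimi K2.6"):
    """Map each benchmark to (rank of model, margin over best other model)."""
    rows = {}
    for bench, table in scores.items():
        own = table[model]
        rank = 1 + sum(1 for s in table.values() if s > own)
        best_other = max(s for name, s in table.items() if name != model)
        rows[bench] = (rank, round(own - best_other, 1))
    return rows


summary = summarize(SCORES)
for bench, (rank, margin) in summary.items():
    print(f"{bench}: rank {rank}, margin {margin:+.1f}")
```

A negative margin means another listed model scored higher, which in these figures happens only on Terminal-Bench 2.0.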

Two Engineering Case Studies

Moonshot AI published two detailed engineering walkthroughs. In the first, Kimi K2.6 downloaded and deployed a Qwen3.5-0.8B model locally on a Mac, then implemented and optimized model inference in Zig, a niche systems programming language. Across more than 4,000 tool calls, 12 hours of continuous execution, and 14 iterations, the model improved throughput from roughly 15 tokens per second to approximately 193 tokens per second. The final speed was about 20% faster than LM Studio.
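For a sense of scale, the figures from the Zig walkthrough reduce to a few ratios. This is back-of-the-envelope arithmetic on the rounded numbers quoted above. The LM Studio rate is not stated in the article and is inferred here, assuming "20% faster" means 1.2 times LM Studio's throughput. The tool-call rate is a lower bound, since the article reports more than 4,000 calls.

```python
START_TOK_PER_S = 15.0    # approximate starting throughput (from the article)
FINAL_TOK_PER_S = 193.0   # approximate final throughput (from the article)
TOOL_CALLS = 4000         # reported as "more than 4,000", so a lower bound
HOURS = 12
ITERATIONS = 14

# Overall speedup across the whole run.
zig_speedup = FINAL_TOK_PER_S / START_TOK_PER_S

# Assumption: "about 20% faster than LM Studio" means 1.2x its rate.
implied_lm_studio = FINAL_TOK_PER_S / 1.20

# Average pace of the run.
calls_per_hour = TOOL_CALLS / HOURS
calls_per_iteration = TOOL_CALLS / ITERATIONS

print(f"speedup: {zig_speedup:.1f}x")
print(f"implied LM Studio rate: {implied_lm_studio:.0f} tok/s")
print(f"tool calls per hour: {calls_per_hour:.0f}")
print(f"tool calls per iteration: {calls_per_iteration:.0f}")
```

On these numbers the run amounts to roughly a 13x throughput gain, at an average of at least a few hundred tool calls per hour.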

In the second case, Kimi K2.6 autonomously redesigned exchange-core, an eight-year-old open-source financial matching engine. Over 13 hours of execution, the model iterated through 12 optimization strategies and modified more than 4,000 lines of code across more than 1,000 tool calls. It analyzed CPU and allocation flame graphs to identify bottlenecks, then restructured the core thread layout from 4ME+2RE to 2ME+1RE. Median throughput improved by 185% (from 0.43 to 1.24 MT/s), and peak throughput improved by 133% (from 1.23 to 2.86 MT/s).

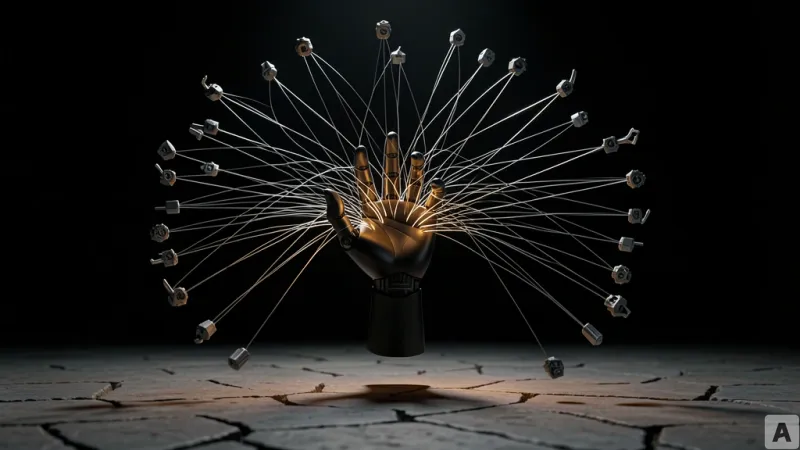
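The exchange-core throughput gains can be recomputed from the quoted endpoints. The sketch below applies the usual percent-change formula. The `percent_gain` helper is hypothetical, and the inputs are the rounded MT/s values given in the article.

```python
def percent_gain(before, after):
    """Percent improvement of `after` relative to `before`."""
    return (after - before) / before * 100.0


median_gain = percent_gain(0.43, 1.24)  # median throughput, MT/s
peak_gain = percent_gain(1.23, 2.86)    # peak throughput, MT/s

print(f"median: +{median_gain:.1f}%")
print(f"peak:   +{peak_gain:.1f}%")
```

The recomputed peak gain matches the reported 133% after rounding. The recomputed median gain comes out a few points above the reported 185%, presumably because the published endpoints are themselves rounded to two decimal places.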

The Agent Swarm: 300 Sub-Agents in Parallel

The most notable feature of Kimi K2.6 is its Agent Swarm, a system that splits a complex task across multiple dedicated agents running in parallel. The swarm supports up to 300 sub-agents working across as many as 4,000 coordinated steps. This is a significant expansion from K2.5, which handled 100 agents and 1,500 steps. The swarm dynamically partitions work into web search, deep research, large-scale document analysis, long-form writing, and multi-format content generation, then integrates the outputs into documents, websites, slides, and spreadsheets.
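The article does not describe how the swarm enforces its limits, so the following is only a toy sketch of the general pattern: fan subtasks out to concurrent workers, cap the number running at once, and stop when a shared step budget is spent. The two limits come from the article. Treating the 4,000 steps as a single global budget, and every name here (`StepBudget`, `run_sub_agent`, `run_swarm`), is an assumption.

```python
import asyncio

MAX_SUB_AGENTS = 300    # concurrency ceiling quoted in the article
MAX_TOTAL_STEPS = 4000  # step limit quoted in the article (assumed global)


class StepBudget:
    """Shared counter that refuses work once the step budget is spent."""

    def __init__(self, limit):
        self.remaining = limit

    def take(self):
        if self.remaining <= 0:
            return False
        self.remaining -= 1
        return True


async def run_sub_agent(task, steps_needed, budget, slots):
    """Hypothetical sub-agent: runs steps while a slot and budget remain."""
    done = 0
    async with slots:  # at most max_agents sub-agents hold a slot at once
        for _ in range(steps_needed):
            if not budget.take():
                break
            await asyncio.sleep(0)  # stand-in for a tool call or model step
            done += 1
    return task, done


async def run_swarm(tasks, steps_per_task,
                    max_agents=MAX_SUB_AGENTS, max_steps=MAX_TOTAL_STEPS):
    slots = asyncio.Semaphore(max_agents)
    budget = StepBudget(max_steps)
    pairs = await asyncio.gather(
        *(run_sub_agent(t, steps_per_task, budget, slots) for t in tasks)
    )
    return dict(pairs)


# 500 subtasks x 10 steps would need 5,000 steps, so the budget cuts in.
results = asyncio.run(
    run_swarm([f"subtask-{i}" for i in range(500)], steps_per_task=10)
)
total_steps = sum(results.values())
print(len(results), total_steps)
```

A real orchestrator would also need to merge the sub-agents' outputs and handle failures. The sketch only shows how a concurrency cap and a step budget can coexist.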

The swarm also introduces a Skills feature. PDFs, spreadsheets, slides, and Word documents can be converted into reusable skills that preserve structural and stylistic information, allowing the model to reproduce the same quality and format in future tasks. Moonshot AI shared two demo cases: one where 100 sub-agents transformed a single resume into 100 tailored resumes for 100 relevant job positions in California, and another where the swarm used Google Maps to identify 30 retail stores in Los Angeles that have no website.

What This Actually Means

For developers, the headline number is 58.6 on SWE-Bench Pro, ahead of GPT-5.4's 57.7. But the real signal is the 12-hour continuous run in which K2.6 implemented and optimized a Zig inference engine. That is more than marketing language: it is a concrete engineering artifact with measured throughput numbers. The 300-agent swarm architecture also moves beyond the single-agent paradigm that most open-source models still rely on.

This is work that used to require a human sitting at the keyboard for days. Now a model spends 13 hours rewriting 4,000 lines of code and finds a 185% improvement in median throughput on its own. By releasing K2.6 with open weights, Moonshot AI has reset the baseline for what an agent workload can look like, and made it available for anyone to run.