For the independent AI researcher or the lean startup, the dream of running a high-resolution world model has long been gated by a brutal financial reality. Until recently, simulating a believable virtual environment required renting massive GPU clusters whose hourly cost often outpaced the pace of iteration. The community has been stuck choosing between lower resolution and shorter clips just to keep compute costs manageable. This week, however, the conversation shifted as NVIDIA's SANA-WM repository began climbing GitHub's trending list, signaling a possible end to cluster dependency for high-fidelity simulation.

The 2.6 Billion Parameter Architecture for Local Inference

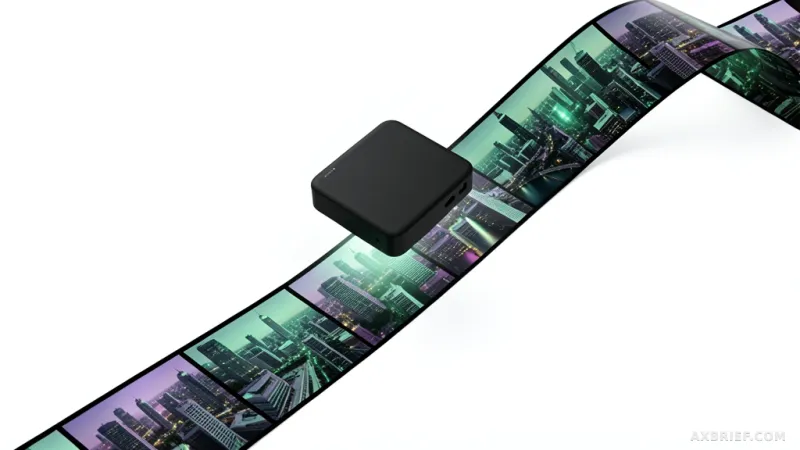

NVIDIA has introduced SANA-WM, a world model designed to simulate virtual environments with a focus on extreme efficiency. At its core, the model is a Diffusion Transformer with 2.6 billion parameters, a size chosen to balance generative power with hardware accessibility. It produces 720p video up to one minute long with full 6-DoF (six degrees of freedom: three axes of translation plus three of rotation) camera control. Developers can therefore specify the camera's position and orientation in the generated scene explicitly, rather than relying on text-to-video prompts to describe the motion.
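A 6-DoF camera path is usually expressed as one pose per frame: a rotation (yaw, pitch, roll) plus a translation (x, y, z), packed into a 4×4 camera-to-world matrix. The sketch below builds such a trajectory in NumPy. The axis convention (y up), the Euler-angle order, and the hypothetical `dolly_and_pan` path are assumptions for illustration, not SANA-WM's actual input format.

```python
import numpy as np

def rotation_from_euler(yaw: float, pitch: float, roll: float) -> np.ndarray:
    """3x3 rotation from yaw (about y), pitch (about x), roll (about z), in radians.

    Assumed convention: y is up, rotations applied as yaw @ pitch @ roll.
    """
    cy, sy = np.cos(yaw), np.sin(yaw)
    cp, sp = np.cos(pitch), np.sin(pitch)
    cr, sr = np.cos(roll), np.sin(roll)
    r_yaw = np.array([[cy, 0.0, sy], [0.0, 1.0, 0.0], [-sy, 0.0, cy]])
    r_pitch = np.array([[1.0, 0.0, 0.0], [0.0, cp, -sp], [0.0, sp, cp]])
    r_roll = np.array([[cr, -sr, 0.0], [sr, cr, 0.0], [0.0, 0.0, 1.0]])
    return r_yaw @ r_pitch @ r_roll

def camera_to_world(yaw, pitch, roll, x, y, z) -> np.ndarray:
    """4x4 pose: three rotational plus three translational degrees of freedom."""
    pose = np.eye(4)
    pose[:3, :3] = rotation_from_euler(yaw, pitch, roll)
    pose[:3, 3] = [x, y, z]
    return pose

def dolly_and_pan(num_frames: int, distance: float, total_yaw: float) -> np.ndarray:
    """Hypothetical trajectory: translate along +z while yawing linearly."""
    steps = np.linspace(0.0, 1.0, num_frames)
    return np.stack([
        camera_to_world(total_yaw * s, 0.0, 0.0, 0.0, 0.0, distance * s)
        for s in steps
    ])

trajectory = dolly_and_pan(num_frames=16, distance=2.0, total_yaw=np.pi / 4)
print(trajectory.shape)  # (16, 4, 4)
```

Each frame's pose is fully determined by six numbers, which is what makes camera control precise and repeatable compared with describing the motion in a prompt.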

To accommodate different deployment needs, NVIDIA provides three inference variants. The first is a bidirectional generator for high-quality offline synthesis, in which every frame can attend both forward and backward across the timeline to maximize coherence. The second is a chunk-causal autoregressive generator, which extends a video chunk by chunk, conditioning each new chunk on those already generated, to reach longer durations. The third is a distilled autoregressive generator optimized for fast deployment.
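The difference between the first two variants comes down to which frames may attend to which. Below is a minimal sketch of the two patterns as boolean attention masks, assuming frames are grouped into fixed-size chunks; the chunk size and helper names are hypothetical, and SANA-WM's actual chunking may differ.

```python
import numpy as np

def bidirectional_mask(num_frames: int) -> np.ndarray:
    """Every frame may attend to every other frame (offline synthesis)."""
    return np.ones((num_frames, num_frames), dtype=bool)

def chunk_causal_mask(num_frames: int, chunk_size: int) -> np.ndarray:
    """mask[i, j] is True iff frame j's chunk is not later than frame i's chunk.

    Frames inside one chunk see each other in both directions; across chunks,
    information only flows from earlier chunks to later ones.
    """
    chunk_ids = np.arange(num_frames) // chunk_size
    return chunk_ids[None, :] <= chunk_ids[:, None]

mask = chunk_causal_mask(num_frames=6, chunk_size=2)
print(mask.astype(int))
# rows 0-1: [1 1 0 0 0 0], rows 2-3: [1 1 1 1 0 0], rows 4-5: all ones
```

Because no chunk ever depends on a later one, a chunk-causal generator can append new chunks indefinitely while reusing the context computed for earlier ones, whereas the bidirectional mask requires the whole clip to be generated together.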

The distilled variant is the most significant leap for local hardware. Using NVFP4, NVIDIA's 4-bit floating-point quantization format, the model runs on a single RTX 5090 GPU. In reported benchmarks, this setup generates a 60-second 720p clip in 34 seconds, roughly 1.76 times faster than real time. This moves world model inference from the data center to the professional workstation. The complete implementation and official code are available in the NVlabs/Sana repository.
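NVFP4 stores each value as a 4-bit E2M1 float (one sign bit, two exponent bits, one mantissa bit) and shares one FP8 scale factor across every block of 16 values, an average of 4.5 bits per value. The sketch below simulates that block scaling in NumPy ("fake quantization"). It keeps the scales in float32 and omits the per-tensor scale and packed storage, so it illustrates the number format only, not NVIDIA's kernels or SANA-WM's quantization recipe.

```python
import numpy as np

# Non-negative magnitudes representable by an FP4 E2M1 value (sign stored separately).
E2M1_GRID = np.array([0.0, 0.5, 1.0, 1.5, 2.0, 3.0, 4.0, 6.0], dtype=np.float32)
BLOCK_SIZE = 16  # one shared scale factor per 16-element block

def quantize_nvfp4_like(x: np.ndarray):
    """Simulated block-scaled FP4 quantization (illustration only).

    Simplification: the per-block scale stays in float32 here, whereas NVFP4
    stores it as FP8 (E4M3) alongside a per-tensor FP32 scale.
    """
    assert x.size % BLOCK_SIZE == 0, "pad the tensor to a multiple of 16"
    blocks = x.reshape(-1, BLOCK_SIZE).astype(np.float32)
    amax = np.abs(blocks).max(axis=1, keepdims=True)
    # Map each block's largest magnitude onto 6.0, the largest E2M1 value.
    scale = np.where(amax > 0, amax / E2M1_GRID[-1], 1.0)
    scaled = np.abs(blocks) / scale
    # Round each magnitude to the nearest representable E2M1 value.
    idx = np.abs(scaled[..., None] - E2M1_GRID).argmin(axis=-1)
    codes = np.sign(blocks) * E2M1_GRID[idx]
    return codes, scale

def dequantize(codes: np.ndarray, scale: np.ndarray, shape) -> np.ndarray:
    """Undo the block scaling and restore the original tensor shape."""
    return (codes * scale).reshape(shape)

rng = np.random.default_rng(0)
weights = rng.standard_normal((4, 32)).astype(np.float32)
codes, scale = quantize_nvfp4_like(weights)
restored = dequantize(codes, scale, weights.shape)
print("max abs error:", np.abs(restored - weights).max())
```

The per-block scale is what keeps 4-bit values usable: an outlier only coarsens the 15 values that share its block, not the whole tensor.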

Solving the Memory Bottleneck and Temporal Drift

Achieving this efficiency required NVIDIA to resolve a fundamental conflict in attention mechanisms. Standard softmax attention, the default in most transformers, has memory and compute costs that grow quadratically with sequence length. For a one-minute video, that growth becomes a hard wall that exhausts the memory of most consumer GPUs. Earlier models such as SANA-Video used cumulative ReLU-based linear attention to keep memory usage constant, but this introduced a new problem: temporal drift. Because cumulative linear attention weights every past frame equally, stale content is never discounted, and the video's visual consistency warps and degrades as the clip progresses until the scene loses its structural integrity.
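To make the trade-off concrete, here is a generic causal linear attention with a ReLU feature map, written two ways: as a recurrence with a fixed-size running state, and as an explicit T×T score matrix like the one softmax attention works with. This is a textbook formulation for illustration, not SANA-Video's actual code.

```python
import numpy as np

def relu_linear_attention(q, k, v, eps=1e-6):
    """Causal ReLU linear attention computed recurrently.

    q, k: (T, d_k); v: (T, d_v). The state (d_k x d_v) and normalizer (d_k,)
    have a fixed size, so memory does not grow with T.
    """
    phi_q, phi_k = np.maximum(q, 0.0), np.maximum(k, 0.0)
    state = np.zeros((q.shape[1], v.shape[1]))
    norm = np.zeros(q.shape[1])
    outputs = np.empty((q.shape[0], v.shape[1]))
    for t in range(q.shape[0]):
        state += np.outer(phi_k[t], v[t])  # cumulative sum: every step weighted 1
        norm += phi_k[t]
        outputs[t] = phi_q[t] @ state / (phi_q[t] @ norm + eps)
    return outputs

def relu_linear_attention_quadratic(q, k, v, eps=1e-6):
    """Same result via an explicit T x T causal score matrix (quadratic memory)."""
    scores = np.tril(np.maximum(q, 0.0) @ np.maximum(k, 0.0).T)
    return scores @ v / (scores.sum(axis=1, keepdims=True) + eps)

rng = np.random.default_rng(0)
q, k, v = (rng.standard_normal((8, 4)) for _ in range(3))
recurrent = relu_linear_attention(q, k, v)
quadratic = relu_linear_attention_quadratic(q, k, v)
print(np.allclose(recurrent, quadratic))
```

The recurrent form's state never grows, but because each step adds `outer(phi_k, v)` with weight 1, a frame from the first second counts as much as the previous frame in every later output.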

SANA-WM resolves this by replacing most of its attention blocks with Gated DeltaNet (GDN). GDN combines a decay gate, which progressively reduces the weight of older frames, with a delta rule, which updates the internal state only by the prediction error: the difference between the value to be stored and what the current state already returns for that key. The state therefore stays a constant size regardless of video length, avoiding the memory explosion of softmax attention while counteracting the drift of plain linear attention. Because the decay gate also makes GDN blocks gradually forget distant frames, NVIDIA embedded softmax attention in five of the 20 transformer blocks (layers 3, 7, 11, 15, and 19), one in every four, to preserve long-term memory. This hybrid design maintains global coherence while the GDN blocks keep most of the network's computation linear in sequence length.
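The per-step update can be written out directly. Below is a minimal NumPy sketch of the gated delta rule recurrence as formulated for Gated DeltaNet, assuming L2-normalized keys and one scalar gate pair per step. In GDN, `alpha` and `beta` are learned, input-dependent values and practical implementations use a chunkwise-parallel form, so treat this as an illustration of the math, not SANA-WM's kernel.

```python
import numpy as np

def gated_delta_rule(q, k, v, alpha, beta):
    """Recurrent gated delta rule.

    q, k: (T, d_k); v: (T, d_v); alpha, beta: (T,) values in (0, 1].
    Per step:
        S_t = alpha_t * S_{t-1} + beta_t * (v_t - alpha_t * S_{t-1} k_t) k_t^T
        o_t = S_t q_t
    alpha_t decays old content; the bracket is the prediction error of the
    decayed state for key k_t. The state shape (d_v, d_k) never grows with T.
    """
    k = k / np.linalg.norm(k, axis=1, keepdims=True)  # L2-normalized keys
    state = np.zeros((v.shape[1], k.shape[1]))
    outputs = np.empty((q.shape[0], v.shape[1]))
    for t in range(q.shape[0]):
        decayed = alpha[t] * state
        error = v[t] - decayed @ k[t]              # delta: target minus prediction
        state = decayed + beta[t] * np.outer(error, k[t])
        outputs[t] = state @ q[t]
    return outputs, state

rng = np.random.default_rng(0)
T, d_k, d_v = 32, 8, 4
q, k = rng.standard_normal((T, d_k)), rng.standard_normal((T, d_k))
v = rng.standard_normal((T, d_v))
alpha = np.full(T, 0.9)  # hypothetical constant decay
beta = np.full(T, 1.0)   # hypothetical full-strength writes
out, state = gated_delta_rule(q, k, v, alpha, beta)
```

With `beta = 1`, the delta rule overwrites whatever the state previously associated with key `k_t`, so reading the state back with that key returns exactly `v_t`; with `alpha < 1`, everything else fades geometrically. Per the description above, 15 of SANA-WM's 20 blocks would use a recurrence of this kind, with softmax attention in the remaining five.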

Camera motion is controlled through a dual-branch structure that combines UCPE, a camera pose encoding, with Plücker mixing, which builds on Plücker coordinates, a way of representing lines in 3D space that is typically used to encode the camera ray through each pixel. When a user requests a specific 6-DoF movement, this keeps the pixel motion geometrically consistent with the requested camera path. To polish the output, NVIDIA uses a two-stage pipeline: the first stage generates the base video, and the second applies a refiner built on the 17-billion-parameter LTX-2 model. The refiner uses a LoRA (Low-Rank Adaptation) adapter of rank 384 and needs only three Euler denoising steps to remove visual artifacts and stabilize the image.
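Plücker coordinates describe a ray by its unit direction d and its moment m = o × d, where o is any point on the ray (here, the camera center). A common way to turn a camera pose into a per-pixel signal is to compute these six numbers for the ray through every pixel. The sketch below assumes a pinhole camera with hypothetical intrinsics `K`; it shows the standard representation only, and how SANA-WM mixes it with UCPE is not covered here.

```python
import numpy as np

def plucker_embedding(K: np.ndarray, cam_to_world: np.ndarray,
                      height: int, width: int) -> np.ndarray:
    """Per-pixel Plücker coordinates (d, o x d) for a pinhole camera.

    K: 3x3 intrinsics; cam_to_world: 4x4 pose. Returns (height, width, 6).
    """
    # Pixel centers in homogeneous image coordinates, shape (H, W, 3).
    u, v = np.meshgrid(np.arange(width) + 0.5, np.arange(height) + 0.5)
    pixels = np.stack([u, v, np.ones_like(u)], axis=-1)
    # Back-project to camera-space directions, then rotate into world space.
    dirs_cam = pixels @ np.linalg.inv(K).T
    rotation, origin = cam_to_world[:3, :3], cam_to_world[:3, 3]
    dirs = dirs_cam @ rotation.T
    dirs /= np.linalg.norm(dirs, axis=-1, keepdims=True)
    # The moment o x d records where the ray lies, not just where it points.
    moments = np.cross(origin, dirs)
    return np.concatenate([dirs, moments], axis=-1)

# Hypothetical intrinsics for a 640x360 image and a camera shifted 1 unit along x.
K = np.array([[500.0, 0.0, 320.0], [0.0, 500.0, 180.0], [0.0, 0.0, 1.0]])
pose = np.eye(4)
pose[:3, 3] = [1.0, 0.0, 0.0]
emb = plucker_embedding(K, pose, height=360, width=640)
print(emb.shape)  # (360, 640, 6)
```

Directions alone are unchanged when the camera translates without rotating; the moment term is what lets the embedding capture translation as well as rotation.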

The training pipeline was optimized for efficiency as well. NVIDIA replaced the backends of the ViPE camera pose annotation tool with Pi3X, for consistent depth estimation, and MoGe-2, for precise distance measurement, yielding a dataset of 212,975 pose-annotated clips. For compression, the team used LTX2-VAE, whose latent representation is twice as compact as ST-DC-AE's and eight times as compact as Wan2.1-VAE's; fewer latent tokens for the transformer to process translates directly into faster inference. During training on 64 H100 GPUs, custom Triton kernels optimized GPU memory access and improved overall computational efficiency by 1.5 to 2 times.
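Why a more compact latent speeds things up is simple arithmetic on token counts. The numbers below are hypothetical: a 480-frame clip at 704×1280 and two made-up sets of downsampling factors that differ by 2× on each axis, not the published factors of LTX2-VAE or Wan2.1-VAE.

```python
def latent_token_count(frames: int, height: int, width: int,
                       t_factor: int, s_factor: int) -> int:
    """Tokens the transformer sees after temporal/spatial downsampling (incl. patchify).

    Boundary details such as a separately encoded first frame are ignored.
    """
    return (frames // t_factor) * (height // s_factor) * (width // s_factor)

# Hypothetical clip and hypothetical compression factors, chosen for round numbers.
FRAMES, HEIGHT, WIDTH = 480, 704, 1280
tokens_a = latent_token_count(FRAMES, HEIGHT, WIDTH, t_factor=4, s_factor=16)
tokens_b = latent_token_count(FRAMES, HEIGHT, WIDTH, t_factor=8, s_factor=32)

print(tokens_a, tokens_b)          # 422400 52800
print(tokens_a / tokens_b)         # 8.0: token-linear work (GDN, MLPs) shrinks ~8x
print((tokens_a / tokens_b) ** 2)  # 64.0: softmax score matrices shrink 64x
```

Halving each of the three axes cuts the token count by 8×, and the quadratic softmax layers benefit even more, which is why the choice of VAE matters as much as the transformer itself.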

High-resolution virtual worlds are no longer the exclusive domain of those with unlimited compute budgets.