Infrastructure engineers have spent the last two years staring at procurement dashboards, waiting for Nvidia H100 clusters to arrive from a backlog that feels permanent. The industry has accepted a singular truth: if you want to scale AI, you pay the Nvidia tax and wait in line. But a quiet shift is happening in the server racks of the world's largest labs, where the goal is no longer just acquiring more compute, but finding a way to make inference faster and cheaper than a general-purpose GPU allows.

The Financial Path to a $23 Billion Valuation

Cerebras Systems, the designer of high-speed hardware tailored for AI training and inference, has officially filed for its initial public offering. The company was most recently valued at $23 billion, a figure set by a $1 billion Series H funding round in February that followed a $1.1 billion Series G investment. The path to the public market was not linear. A previous attempt to go public, filed in 2024, stalled after the U.S. federal government launched a review of investments from G42, the Abu Dhabi-based AI investment and development firm, and was ultimately withdrawn. That geopolitical hurdle pushed back the company's timeline.

The financial trajectory of Cerebras reveals a company scaling rapidly while absorbing the volatile costs of hyper-growth. For 2025, the company reported revenue of $510 million. It posted GAAP net income of $237.8 million, but the non-GAAP figures give a more nuanced view: with one-time items stripped out, the company recorded a net loss of $75.7 million, a $313.5 million swing. Whatever the accounting picture, the company's strategic positioning is aggressive. Cerebras has secured a partnership with Amazon Web Services to deploy its proprietary chips directly in AWS data centers, extending its reach to the customer base of the largest cloud provider. More strikingly, the company has reportedly signed a deal with OpenAI valued at more than $10 billion. The IPO is slated for mid-May, though the amount of capital to be raised remains undisclosed.

The End of the General-Purpose GPU Monopoly

The sheer scale of the OpenAI contract suggests a fundamental pivot in how the world's most advanced AI models are deployed. For years, the AI industry has relied on the general-purpose nature of GPUs, which are versatile enough to handle everything from graphics rendering to large-scale LLM training. However, as models grow in parameter count and serve ever more users, inference, not training, becomes the primary bottleneck. Latency and power consumption while generating output are now the critical metrics for user experience, and general-purpose hardware is delivering diminishing returns on both.

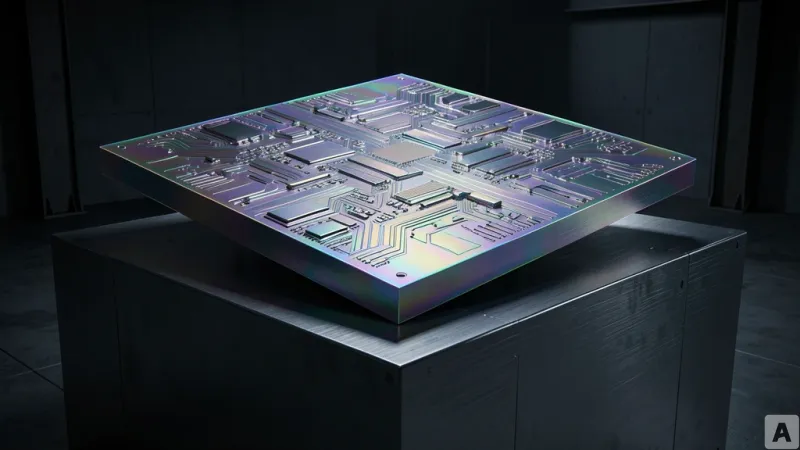

Cerebras is betting that the future belongs to dedicated inference hardware. By giving up the versatility of a GPU and optimizing its silicon specifically for AI tensor operations, the company claims a level of speed that Nvidia's current architecture cannot match. When a company like OpenAI commits more than $10 billion to a non-Nvidia solution, it signals that the GPU-only era is ending. The transition forces a new engineering paradigm: teams can no longer write code for CUDA alone and expect it to scale. Instead, they must move toward a hybrid infrastructure, tuning their inference engines for specialized accelerators.

The competition is no longer about who has the most chips, but about who has the most efficient path from input to output. Nvidia has long used CUDA as a software moat that locks developers into its ecosystem, but the practical demand for inference efficiency is testing that moat. Once the performance gap between a general-purpose chip and a dedicated accelerator grows wide enough, the cost of rewriting the software stack becomes secondary to the need for speed. In other words, hardware is now driving software evolution, forcing a rewrite of the inference layer to accommodate specialized silicon.

AI infrastructure is transitioning from a monolithic GPU standard to a curated ensemble of specialized chips optimized for specific workloads.