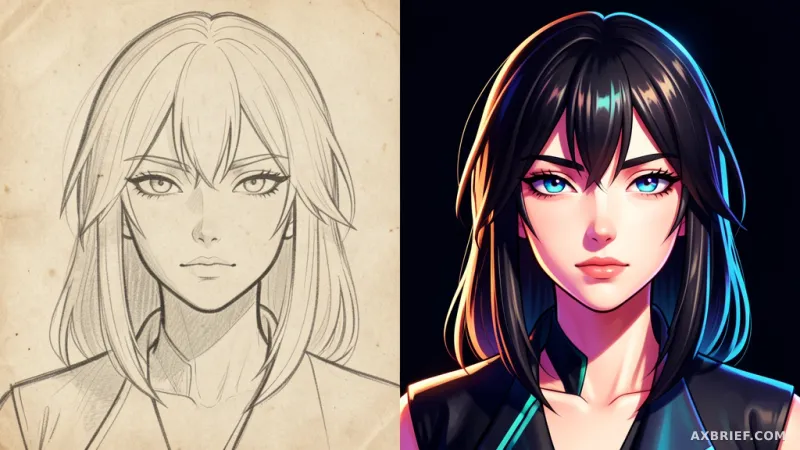

The current trajectory of generative AI feels like an endless arms race of scale. For the past few years, the industry mantra has been "bigger is better," with labs pushing parameter counts into the trillions and relying heavily on synthetic data (AI-generated content fed back into the training loop) to fill gaps in the available datasets. But in the niche, high-precision world of subculture and anime art, this approach often produces a sterile, homogenized aesthetic that lacks the sharp edges and intentionality of human illustration. This week, a different philosophy emerged on Hugging Face, challenging the notion that massive scale and synthetic shortcuts are the only paths to high-fidelity imagery.

The Architecture of Pure Data

Developed through a collaboration between CircleStone Labs and Comfy Org, Anima is a text-to-image model that deliberately sidesteps the trend toward ever-larger architectures. It has only 2 billion parameters, a fraction of the size of the industry giants, and it is built for one specific purpose: mastering animation and subculture aesthetics. The training data reflects that focus. It consists of millions of animation-specific images, supplemented by approximately 800,000 non-animation art pieces. Crucially, the developers excluded synthetic data entirely. By training only on human-created art, Anima is meant to avoid the recursive degradation that can affect models trained on their own outputs, so that its images retain the brushwork and stylistic nuances of real artists.

To deploy Anima, users operate within the ComfyUI environment, a node-based interface that allows for granular control over the generation pipeline. The model requires a specific set of files to be placed in the following directory paths to function correctly:

`anima-base-v1.0.safetensors` -> `ComfyUI/models/diffusion_models`

`qwen_3_06b_base.safetensors` -> `ComfyUI/models/text_encoders`

`qwen_image_vae.safetensors` -> `ComfyUI/models/vae`

The technical backbone of the model draws on the Qwen ecosystem: it uses the Qwen-Image VAE to compress images into latents and reconstruct them, and a Qwen-based text encoder (the `qwen_3_06b_base` file above). While Anima-Base provides the foundational capability, the release also includes a Turbo LoRA, a low-rank adaptation module intended to increase generation speed and stability without sacrificing the core aesthetic.
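Because a misplaced file is the most common reason a ComfyUI model fails to load, a quick check of the layout listed above can save some debugging. The following is a minimal Python sketch, not a tool shipped with Anima or ComfyUI; the function name `missing_anima_files` is hypothetical, and the only assumption is the file-to-folder mapping given in this article.

```python
from pathlib import Path

# File -> subdirectory mapping, taken from the placement list in this article.
REQUIRED_FILES = {
    "anima-base-v1.0.safetensors": "models/diffusion_models",
    "qwen_3_06b_base.safetensors": "models/text_encoders",
    "qwen_image_vae.safetensors": "models/vae",
}


def missing_anima_files(comfy_root):
    """Return expected paths (relative to comfy_root) that are not present yet.

    Hypothetical helper: it only checks that each file exists where the
    article says it should; it does not validate file contents.
    """
    root = Path(comfy_root)
    missing = []
    for filename, subdir in REQUIRED_FILES.items():
        if not (root / subdir / filename).is_file():
            missing.append(str(Path(subdir) / filename))
    return missing
```

For example, `missing_anima_files("/path/to/ComfyUI")` returns an empty list once all three files are in place, and otherwise lists the relative paths that still need to be filled.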

The Shift from Scale to Precision

When moving from a general-purpose model like SDXL to Anima, the most immediate realization is that the relationship between the prompt and the pixel has changed. The tension lies in the difference between a model that knows everything vaguely and a model that knows one thing deeply. Anima does not attempt to be a jack-of-all-trades; instead, it treats the anime style as a precise science. This is most evident in how the model responds to different samplers, where the choice of algorithm determines whether the art reads as flat or volumetric.

Using the er_sde sampler produces the classic anime look, characterized by flat color palettes and razor-sharp line work. In contrast, the euler_a sampler (listed as `euler_ancestral` in ComfyUI) softens these edges, creating a 2.5D effect that adds a sense of volume and depth to the characters. For those seeking more experimental interpretations, the dpmpp_2m_sde_gpu sampler offers higher variance, though it requires more precise prompting to keep the output stable. For a more painterly, traditional-art texture, the beta57 scheduler is recommended, as it emphasizes low-noise timesteps to refine the grain of the final image.
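The sampler notes above map naturally onto the `sampler_name` and `scheduler` fields of a ComfyUI KSampler node. The Python sketch below is hypothetical: the preset names, the `ksampler_settings` helper, the `normal` scheduler pairings, the choice of er_sde for the painterly preset, and the `steps`/`cfg` defaults are all assumptions rather than settings published for Anima. It returns only the scalar widget values; the model, conditioning, and latent links still have to be wired in the workflow.

```python
# Hypothetical style presets built from the sampler notes in this article.
# Sampler/scheduler strings are ComfyUI KSampler option names; the pairings
# with "normal" and the er_sde + beta57 combination are assumptions.
STYLE_PRESETS = {
    "flat_anime": {"sampler_name": "er_sde", "scheduler": "normal"},
    # The article's "euler_a" appears as "euler_ancestral" in ComfyUI.
    "soft_2_5d": {"sampler_name": "euler_ancestral", "scheduler": "normal"},
    "experimental": {"sampler_name": "dpmpp_2m_sde_gpu", "scheduler": "normal"},
    # beta57 may not be in a stock ComfyUI install; check your scheduler list.
    "painterly": {"sampler_name": "er_sde", "scheduler": "beta57"},
}


def ksampler_settings(style, seed, steps=30, cfg=4.0):
    """Return KSampler widget values for a named style preset.

    steps and cfg defaults are placeholders, not values from the article.
    """
    if style not in STYLE_PRESETS:
        raise ValueError(f"unknown style {style!r}; expected one of {sorted(STYLE_PRESETS)}")
    settings = {"seed": seed, "steps": steps, "cfg": cfg, "denoise": 1.0}
    settings.update(STYLE_PRESETS[style])
    return settings
```

Keeping the look-to-sampler mapping in one table makes it easy to A/B the flat and 2.5D styles on the same seed, which is the most direct way to see the difference the samplers make.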

Prompting in Anima also requires a departure from standard natural language descriptions. The model is optimized for a hybrid approach, blending natural language with Danbooru-style tags. Because the model is so specialized, it requires higher weight values than SDXL to trigger specific effects. For instance, a user wanting a chibi style must use a stronger weight, such as `(chibi:2)`, to ensure the model overrides its default proportions. Furthermore, the model introduces a specific syntax for artist attribution, requiring an @ symbol before the artist's name to properly activate their unique style. This creates a highly structured prompting environment where the user acts more like a curator than a writer.
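The weighting and attribution rules are mechanical enough to script. The following Python sketch uses hypothetical helpers that are not part of Anima or ComfyUI: it formats `(tag:weight)` emphasis, prefixes artist names with `@`, and joins tags and an optional natural-language phrase with commas. Placing artist tags after the other tags, and the free text last, is an arbitrary ordering choice.

```python
def weighted(tag, weight):
    """Format a tag with (tag:weight) emphasis; a weight of 1 leaves it bare."""
    if weight == 1.0:
        return tag
    # The :g format drops a trailing ".0", so 2 or 2.0 renders as (chibi:2).
    return f"({tag}:{weight:g})"


def artist(name):
    """Prefix an artist name with @, the attribution syntax Anima expects."""
    return name if name.startswith("@") else "@" + name


def build_prompt(tags, artists=(), description=""):
    """Join tags (plain strings or (tag, weight) pairs), @artist tags, and free text."""
    parts = []
    for item in tags:
        parts.append(weighted(*item) if isinstance(item, tuple) else item)
    parts.extend(artist(a) for a in artists)
    if description:
        parts.append(description)
    return ", ".join(parts)
```

For example, `build_prompt(["1girl", ("chibi", 2)], artists=["nnn yryr"], description="waving at the viewer")` produces `1girl, (chibi:2), @nnn yryr, waving at the viewer`.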

To steer the model away from common artifacts, the developers suggest a specific set of positive and negative prompts. The recommended positive prompt is `masterpiece, best quality, score_7, safe,` and the negative prompt is `worst quality, low quality, score_1, score_2, score_3, artist name`. A full example prompt following these rules looks like this:

`year 2025, newest, normal quality, score_5, highres, safe, 1girl, oomuro sakurako, yuru yuri, @nnn yryr, smile, brown hair, hat, solo, fur-trimmed gloves, open mouth, long hair, gift box, fang, skirt, red gloves, blunt bangs, gloves, one eye closed, shirt, brown eyes, santa costume, red hat, skin fang, twitter username, white background, holding bag, fur trim, simple background, brown skirt`
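Applying the recommended quality tags by hand is error-prone, so a small helper can prepend the positive tags to a subject prompt and return the fixed negative prompt. This is a hypothetical Python sketch; the function name and the rule of skipping recommended tags that are already present are assumptions, not documented Anima behavior. It splits on commas to detect duplicates, which also normalizes the spacing around commas in the output.

```python
# Quality tags recommended by the developers, as quoted in this article.
POSITIVE_TAGS = ["masterpiece", "best quality", "score_7", "safe"]
NEGATIVE_TAGS = ["worst quality", "low quality", "score_1", "score_2", "score_3", "artist name"]


def with_quality_tags(subject_prompt):
    """Return a (positive, negative) prompt pair with the recommended tags applied.

    Recommended positive tags already present in subject_prompt are not repeated.
    """
    subject_tags = [t.strip() for t in subject_prompt.split(",") if t.strip()]
    prefix_tags = [t for t in POSITIVE_TAGS if t not in subject_tags]
    positive = ", ".join(prefix_tags + subject_tags)
    negative = ", ".join(NEGATIVE_TAGS)
    return positive, negative
```

Note that the example prompt above already carries its own quality and score tags (`normal quality, score_5`); passing it through this helper would add the recommended ones alongside them, so it is cleaner to remove one set first.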

This shift in methodology suggests that the ceiling on AI image quality is not necessarily tied to parameter count but to how closely the dataset aligns with the intended output. By stripping away the noise of a generalist dataset and the artificiality of synthetic data, Anima reaches a level of stylistic fidelity that larger models often miss.

The industry is entering an era where hyper-optimized, domain-specific miniatures will consistently outperform monolithic generalists in specialized creative fields.