Every morning, the same AI agent makes the same mistakes. It browses the web, resolves a GitHub issue, or fills out a form — and then, as if struck by amnesia, it forgets everything it just learned. The next task starts from zero. Hundreds of prior failures offer no signal. The lesson evaporates the moment the session ends.

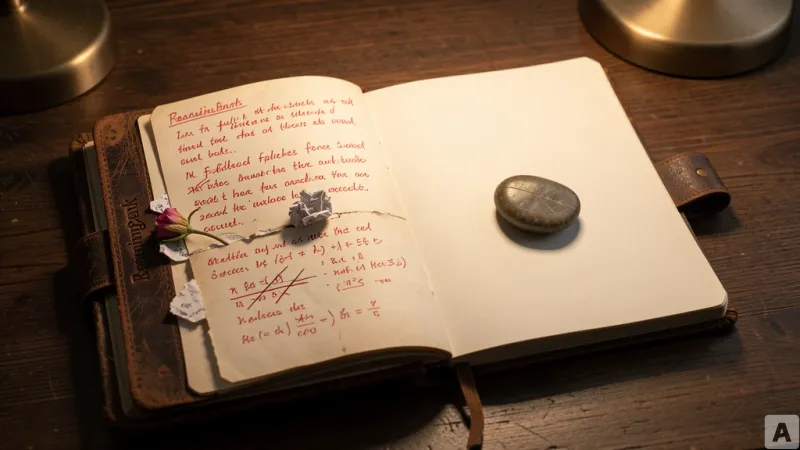

Google Cloud AI, in collaboration with the University of Illinois Urbana-Champaign and Yale University, has released a memory framework called ReasoningBank that addresses this exact problem. Rather than merely logging what an agent did, the system extracts *why* it succeeded or failed, and distills those reasons into reusable, generalized reasoning strategies. The research paper is available on arXiv.

Existing memory systems remembered success and discarded failure

Prior AI agent memory approaches fall into two camps. Trajectory memory, used in systems like Synapse, stores every click, scroll, and query as raw logs. Agent Workflow Memory (AWM) extracts reusable procedures only from successful runs. Each has a critical weakness. Raw trajectories are long and noisy, which makes them difficult to apply directly to new tasks. AWM analyzes only successful attempts and discards the rich learning signal hidden in failures.

ReasoningBank operates as a closed-loop memory system with three stages that wrap every task: retrieval before the task starts, then extraction and consolidation after it ends.

First, the memory retrieval stage. Before starting a new task, the agent performs an embedding-based similarity search to find the single most relevant memory item. The default is k=1. Experiments showed that retrieving more memories actually degraded performance — success rate dropped from 49.7% at k=1 to 44.4% at k=4.
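
The retrieval step can be pictured with a small sketch. This is an illustration only, not the paper's code: the three-dimensional vectors are toy stand-ins for real embeddings, and the names `cosine_similarity` and `retrieve` are hypothetical.

```python
import math
from typing import List, Sequence, Tuple


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between two vectors; 0.0 if either is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def retrieve(
    query_embedding: Sequence[float],
    memory: List[Tuple[str, Sequence[float]]],
    k: int = 1,  # the article reports k=1 as the default
) -> List[str]:
    """Return the titles of the k memory items most similar to the query."""
    ranked = sorted(
        memory,
        key=lambda item: cosine_similarity(query_embedding, item[1]),
        reverse=True,
    )
    return [title for title, _ in ranked[:k]]


# Toy memory store: (title, embedding). Both entries are invented examples.
memory = [
    ("Check pagination before concluding a list is complete", [0.9, 0.1, 0.0]),
    ("Verify the filter is applied before reading results", [0.1, 0.9, 0.0]),
]

top = retrieve([0.8, 0.2, 0.0], memory, k=1)
print(top)
```

Raising `k` here would simply return more titles; the reported drop in success rate at higher k suggests that extra, less relevant items can distract the agent rather than help it.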

Second, the memory extraction stage. After a task finishes, a Memory Extractor — powered by the same backbone language model as the agent — analyzes the trajectory and produces a structured memory item. Each item has three components: a title, a description, and content. The key design choice is that success and failure trajectories are processed differently. Success provides a validated strategy. Failure provides a counterexample and a preventative lesson.
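
A rough sketch of what such a memory item and the two extraction paths might look like follows. The three field names come from the description above; the prompt wording and the `build_extraction_prompt` helper are assumptions made for illustration, not the prompts used in the paper.

```python
from dataclasses import dataclass


@dataclass
class MemoryItem:
    title: str        # short name for the strategy
    description: str  # one-line summary of when it applies
    content: str      # the distilled reasoning itself


def build_extraction_prompt(trajectory: str, succeeded: bool) -> str:
    """Pick a different instruction depending on the outcome (hypothetical wording)."""
    if succeeded:
        instruction = (
            "The trajectory succeeded. Summarize the strategy that made it "
            "work so it can be reused on similar tasks."
        )
    else:
        instruction = (
            "The trajectory failed. Explain what went wrong and state a "
            "lesson that would prevent the same mistake."
        )
    return (
        f"{instruction}\n"
        "Return a title, a description, and content.\n\n"
        f"Trajectory:\n{trajectory}"
    )


# Invented example of an item distilled from a failed run.
item = MemoryItem(
    title="Confirm the date filter before reading totals",
    description="Applies when a report page has a default date range.",
    content="Reading totals without setting the range returned stale numbers.",
)
print(build_extraction_prompt("clicked Reports; read total", succeeded=False))
```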

Third, the memory consolidation stage. The new memory item is appended to the store in JSON format, along with a precomputed embedding for fast cosine similarity search. The loop is complete.
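
Consolidation as described is a plain append, which a few lines can illustrate. The file layout, the `consolidate` function name, and the toy embedding values below are assumptions; the article only states that items are stored as JSON alongside a precomputed embedding.

```python
import json
import os
import tempfile
from typing import Dict, List


def consolidate(store_path: str, item: Dict[str, str], embedding: List[float]) -> int:
    """Append one memory item plus its embedding to a JSON file; return the new size."""
    if os.path.exists(store_path):
        with open(store_path, "r", encoding="utf-8") as f:
            store = json.load(f)
    else:
        store = []
    # Store the embedding next to the item so retrieval never has to recompute it.
    store.append({**item, "embedding": embedding})
    with open(store_path, "w", encoding="utf-8") as f:
        json.dump(store, f, indent=2)
    return len(store)


# Usage with a temporary file and an invented memory item.
store_path = os.path.join(tempfile.mkdtemp(), "memory.json")
size = consolidate(
    store_path,
    {
        "title": "Confirm the date filter before reading totals",
        "description": "Applies when a report page has a default date range.",
        "content": "Reading totals without setting the range returned stale numbers.",
    },
    [0.1, 0.2, 0.3],
)
print(size)
```

Note that this sketch never merges or prunes items; it only appends, matching the simple consolidation described above.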

The judge only needs 70% accuracy to be effective

To determine whether a trajectory was a success or failure, the team uses an LLM-as-a-Judge. It takes the user query, the trajectory, and the final page state as input, and returns a binary "success" or "failure" verdict. The judge does not need to be perfect. Experiments showed that even when judge accuracy dropped to 70%, ReasoningBank remained robust.
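
In code, the judge reduces to a prompt plus a strict parse of the reply. The sketch below assumes a hypothetical `llm` callable that takes a prompt string and returns text; the prompt wording is invented, and a stub stands in for the real model.

```python
from typing import Callable


def judge(query: str, trajectory: str, final_state: str, llm: Callable[[str], str]) -> bool:
    """Return True for a 'success' verdict and False for 'failure'."""
    prompt = (
        "Decide whether the agent completed the task.\n"
        f"User query: {query}\n"
        f"Trajectory: {trajectory}\n"
        f"Final page state: {final_state}\n"
        'Answer with exactly one word: "success" or "failure".'
    )
    # Normalize the reply: trim whitespace, surrounding quotes, and periods.
    reply = llm(prompt).strip().strip('".').lower()
    if reply not in ("success", "failure"):
        raise ValueError(f"Unexpected verdict: {reply!r}")
    return reply == "success"


# Stub model for illustration; a real system would call the backbone LLM here.
def fake_llm(prompt: str) -> str:
    return '"Success".'


verdict = judge("Find the cheapest mouse", "search; sort by price", "Product page", fake_llm)
print(verdict)
```

The verdict decides which extraction path a trajectory takes, so a wrong verdict mislabels one memory item rather than breaking the loop.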

Memory-aware test-time scaling: using multiple attempts as contrast signals

The team also introduced MaTTS (Memory-aware Test-Time Scaling), which builds on test-time compute scaling, a technique already proven in math reasoning and coding tasks. The core idea is simple. Conventional test-time scaling generates multiple trajectories for the same task, picks the best answer, and discards the rest. MaTTS keeps every trajectory and uses all of them as contrast signals.

MaTTS operates in two modes. Parallel scaling generates k independent trajectories for the same query, then uses self-contrast to extract higher-quality memory. Sequential scaling iteratively refines a single trajectory through self-improvement, capturing intermediate corrections and insights as memory signals. On WebArena-Shopping at k=5, parallel scaling slightly outperformed sequential scaling. The reason is that sequential approaches saturate quickly once the model reaches a deterministic success or failure, while parallel approaches continue to provide diverse trajectories for contrastive learning.
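
The parallel mode can be sketched as follows. Everything here is a simplified stand-in: `run_agent`, `judge`, and `extract_by_contrast` are hypothetical callables, and the stubs below replace the real agent, judge, and extractor.

```python
from typing import Callable, Dict, List


def parallel_matts(
    query: str,
    k: int,
    run_agent: Callable[[str, int], str],
    judge: Callable[[str], bool],
    extract_by_contrast: Callable[[List[str], List[str]], Dict[str, int]],
) -> Dict[str, int]:
    """Generate k independent trajectories and feed all of them to the extractor."""
    trajectories = [run_agent(query, seed) for seed in range(k)]
    labeled = [(t, judge(t)) for t in trajectories]
    successes = [t for t, ok in labeled if ok]
    failures = [t for t, ok in labeled if not ok]
    # Nothing is discarded: failures are passed along as contrast to the successes.
    return extract_by_contrast(successes, failures)


# Stubs for illustration only.
def toy_agent(query: str, seed: int) -> str:
    return f"{query} | attempt {seed}"


def toy_judge(trajectory: str) -> bool:
    # Pretend that even-numbered attempts succeed.
    return int(trajectory.rsplit(" ", 1)[1]) % 2 == 0


def toy_extractor(successes: List[str], failures: List[str]) -> Dict[str, int]:
    return {"successes_used": len(successes), "failures_used": len(failures)}


summary = parallel_matts("Find the cheapest mouse", 5, toy_agent, toy_judge, toy_extractor)
print(summary)
```

A best-of-k selector would return one trajectory and drop the other four. Here all five reach the extractor, which is the difference the section describes.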

Consistent gains across three benchmarks

ReasoningBank was tested on WebArena, Mind2Web, and SWE-Bench-Verified. It consistently outperformed baselines across all datasets and all backbone models.

On WebArena with Gemini-2.5-Flash, ReasoningBank improved success rate by 8.3 percentage points over the no-memory baseline. Average interaction steps decreased by up to 1.4 steps compared to no memory, and by up to 1.6 steps compared to other memory baselines. The efficiency gains were most pronounced on successful trajectories — on the shopping subset, steps for successful tasks dropped by 2.1, a 26.9% relative reduction.

On Mind2Web, the largest improvements came in the cross-domain setting, which requires the highest level of strategy transfer. The competing method AWM actually performed worse than the no-memory baseline in this setting. On SWE-Bench-Verified, the size of the improvement varied meaningfully depending on the backbone model.

The practical difference for developers is this: an AI agent that no longer repeats the same mistake, that applies lessons from failure to the next task, and that achieves higher success rates in fewer steps.

AI agents can now not only remember, but learn from failure.