Every morning, the AI community wakes up to a new leaderboard. A developer scrolls through a series of percentages and bar charts, looking for a sign that a new model has finally cracked the code on complex reasoning or coding proficiency. For years, this process was a matter of simple arithmetic: feed a model a set of questions, record the answers, and calculate the accuracy. But a quiet, expensive shift is happening. The industry is moving away from static question-and-answer tests toward agentic evaluations, where AI doesn't just talk but acts. This transition has turned the act of measuring intelligence into a massive financial liability, creating a bottleneck where the cost of proving a model works is beginning to rival the cost of building it.

The Financial Toll of Agentic Benchmarking

The scale of this cost explosion is laid bare in the recent reporting from the Holistic Agent Leaderboard (HAL), a framework designed to measure the actual execution capabilities of AI agents. To get a comprehensive view of performance, the research team conducted 21,730 agent executions across nine different models and nine distinct benchmarks. The price tag for this specific exercise was approximately 40,000 dollars. This is not a one-time setup fee but the operational cost of running the tests. When drilling down into specific benchmarks, the numbers become even more staggering. A single run of GAIA, a benchmark that tests general problem-solving abilities for AI agents, costs 2,829 dollars for the latest models, even when caching costs are excluded.
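To put those totals in per-unit terms, the arithmetic can be sketched as follows. The dollar and execution counts are the figures quoted above; the per-pair average additionally assumes that every model was run on every benchmark (9 × 9 = 81 pairs), which the reporting summarized here does not state, so treat that second number as illustrative only.

```python
# Figures quoted in the article for the HAL study.
TOTAL_COST_USD = 40_000      # approximate total spend
TOTAL_EXECUTIONS = 21_730    # agent executions
NUM_MODELS = 9
NUM_BENCHMARKS = 9


def average_cost(total_cost: float, count: int) -> float:
    """Return the mean cost per unit; reject a non-positive count."""
    if count <= 0:
        raise ValueError("count must be positive")
    return total_cost / count


cost_per_execution = average_cost(TOTAL_COST_USD, TOTAL_EXECUTIONS)

# ASSUMPTION: a full 9 x 9 grid of model-benchmark pairs was evaluated.
cost_per_pair = average_cost(TOTAL_COST_USD, NUM_MODELS * NUM_BENCHMARKS)

print(f"Average cost per agent execution: ${cost_per_execution:.2f}")
print(f"Average cost per model-benchmark pair: ${cost_per_pair:.2f}")
```

Averages of this kind hide a wide spread: the 2,829-dollar GAIA run cited above sits far above any per-pair mean derived this way.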

The resource drain extends beyond API credits into raw compute power. In the realm of scientific machine learning, the benchmark known as The Well provides a sobering look at hardware requirements. Evaluating a single new architecture requires 960 hours of H100 GPU time. To fully verify the entire benchmark suite, that requirement jumps to 3,840 hours. For most research labs, this means that evaluation is no longer a peripheral task performed at the end of a training cycle. It has become a primary line item in the budget, transforming the validation phase into a high-stakes financial gamble.

The Collapse of the Compression Strategy

To understand why costs have spiked, one must look at the fundamental difference between static and agentic evaluation. In the early days of the LLM boom, benchmarks were essentially multiple-choice tests. In 2022, the Center for Research on Foundation Models (CRFM) at Stanford University released HELM, a comprehensive framework that focused largely on static problem solving. During that era, researchers discovered a convenient shortcut: they could cut the compute spent on evaluation by a factor of 100 to 200 and still see almost no change in the relative ranking of models. This insight led to tools like Flash-HELM and tinyBenchmarks, which compressed datasets by over 90% while still estimating full-benchmark performance accurately. If a model performed well on a small, representative slice of the data, it was assumed to perform well on the whole.
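The idea behind that shortcut can be illustrated with a minimal sketch: score every model on the same random subset of items and check whether the ranking matches the one from the full set. This is plain uniform subsampling, not the actual method used by Flash-HELM or tinyBenchmarks, and the model names and per-item results below are synthetic toy data invented purely to show the mechanics.

```python
import random


def accuracy(results: list[bool]) -> float:
    """Fraction of items answered correctly."""
    return sum(results) / len(results)


def subsample_ranking(
    per_model_results: dict[str, list[bool]],
    fraction: float,
    seed: int = 0,
) -> list[str]:
    """Rank models by accuracy on one shared random subset of items."""
    n_items = len(next(iter(per_model_results.values())))
    k = max(1, round(n_items * fraction))
    rng = random.Random(seed)
    kept = rng.sample(range(n_items), k)  # same items for every model
    scores = {
        name: accuracy([results[i] for i in kept])
        for name, results in per_model_results.items()
    }
    # sorted() is stable, so tied models keep their insertion order.
    return sorted(scores, key=lambda name: scores[name], reverse=True)


# Synthetic, hypothetical per-item outcomes (True = correct answer).
n_items = 1000
results = {
    "model_strong": [i % 10 != 0 for i in range(n_items)],  # 90% correct
    "model_mid": [i % 10 < 7 for i in range(n_items)],      # 70% correct
    "model_weak": [i % 2 == 0 for i in range(n_items)],     # 50% correct
}

full_ranking = subsample_ranking(results, fraction=1.0)
small_ranking = subsample_ranking(results, fraction=0.1)
print("Full set ranking:  ", full_ranking)
print("10% subset ranking:", small_ranking)
print("Rankings agree:", full_ranking == small_ranking)
```

The sketch works for static benchmarks because each item is scored independently, so dropping items only adds sampling noise to the estimate.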

That logic fails completely when the AI is an agent. An agent does not simply emit an answer; it interacts with a browser, executes code, and navigates a multi-step trajectory to reach a goal. This introduces a layer of complexity known as the scaffold: the supporting code and environment that allows the agent to function. Because agentic performance is a product of the model, the scaffold, and the token budget, the system becomes hyper-sensitive to minor configuration changes. A slight tweak in the prompt or a change in the tool-calling logic can cause costs to swing by a factor of ten. The stochastic nature of web environments and tool interactions means that the 90% data reduction used in static benchmarks is no longer viable. You cannot compress a trajectory; you must run the full path to know if the agent actually reached the destination.

This volatility leads to a frustrating reality for developers: spending more money does not guarantee better insights. Data from the Online Mind2Web dataset, which evaluates web-browsing agents, reveals a brutal efficiency curve. One model configuration cost 1,577 dollars to evaluate, while another cost only 171 dollars, roughly a ninefold gap. The difference in accuracy between the two was a negligible 2 percentage points. This suggests that the industry is hitting a wall of diminishing returns. According to CLEAR, a study analyzing the cost-efficiency of agent performance, the settings required to achieve peak accuracy are often 4 to 10 times more expensive than cost-effective alternatives that yield nearly identical results.
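The Online Mind2Web comparison can be reduced to two numbers: the cost ratio between the configurations and the extra dollars paid per percentage point of accuracy. The sketch below uses only the figures quoted above and assumes the 2-point gap favors the more expensive configuration, which the text does not say explicitly.

```python
# Figures quoted in the article for two Online Mind2Web configurations.
EXPENSIVE_COST_USD = 1_577
CHEAP_COST_USD = 171
ACCURACY_GAP_POINTS = 2  # ASSUMPTION: the gap favors the pricier setup


def marginal_cost_per_point(
    high_cost: float, low_cost: float, gap_points: float
) -> float:
    """Extra dollars spent per percentage point of accuracy gained."""
    if gap_points <= 0:
        raise ValueError("gap_points must be positive")
    return (high_cost - low_cost) / gap_points


cost_ratio = EXPENSIVE_COST_USD / CHEAP_COST_USD
extra_cost = EXPENSIVE_COST_USD - CHEAP_COST_USD
per_point = marginal_cost_per_point(
    EXPENSIVE_COST_USD, CHEAP_COST_USD, ACCURACY_GAP_POINTS
)

print(f"Cost ratio: {cost_ratio:.1f}x")
print(f"Extra spend: ${extra_cost}")
print(f"Extra spend per accuracy point: ${per_point:.0f}")
```

Under that assumption the ratio lands near the upper end of the 4-to-10-times range attributed to CLEAR.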

Efforts to mitigate these costs have seen limited success. Some researchers, such as Ndzomga, have implemented medium-difficulty filtering, a method that only tests tasks with a pass rate between 30% and 70%. While this can reduce costs by a factor of 2 to 3, it is a far cry from the 100-fold reductions possible in the static era. Because a single query triggers a cascade of actions, agentic evaluation remains a monolithic expense that resists simple optimization.
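A minimal sketch of that filtering step, assuming each task already has a historical pass rate between 0 and 1. The function name, the task IDs, the pass rates, and the choice of inclusive bounds are all hypothetical illustrations, not details of Ndzomga's implementation.

```python
def filter_medium_difficulty(
    pass_rates: dict[str, float],
    low: float = 0.30,
    high: float = 0.70,
) -> list[str]:
    """Keep tasks whose pass rate lies in [low, high] (inclusive bounds
    are an assumption made for this sketch)."""
    return [task for task, rate in pass_rates.items() if low <= rate <= high]


# Hypothetical task IDs and pass rates, invented for illustration.
historical_pass_rates = {
    "task_a": 0.05,  # almost never solved: dropped
    "task_b": 0.35,  # kept
    "task_c": 0.50,  # kept
    "task_d": 0.70,  # on the upper bound: kept
    "task_e": 0.95,  # almost always solved: dropped
}

kept_tasks = filter_medium_difficulty(historical_pass_rates)
kept_fraction = len(kept_tasks) / len(historical_pass_rates)

print("Tasks kept:", kept_tasks)
print(f"Fraction of the benchmark still run: {kept_fraction:.0%}")
```

The saving is bounded by how many tasks fall outside the band, and every task that remains must still be executed as a full trajectory, which is consistent with the modest reductions described above.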

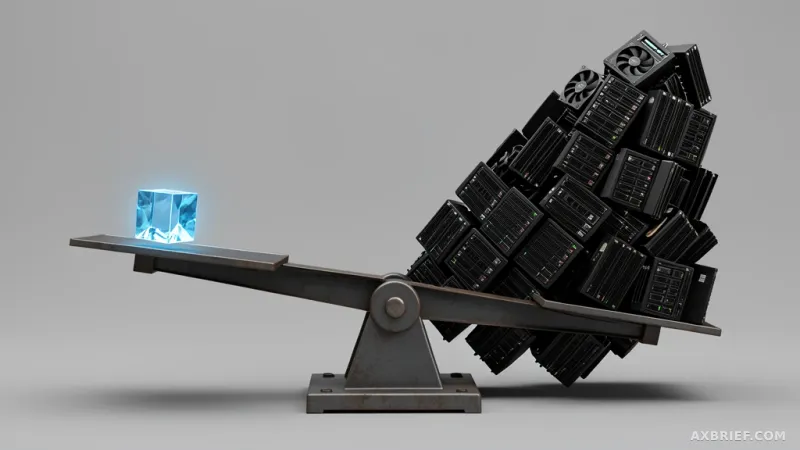

As the cost of measuring AI performance begins to chase the cost of training the models themselves, the ability to evaluate efficiently has become a new form of technical moat. The competitive edge is shifting from who can build the smartest model to who can prove it is smart without bankrupting the company.